Submission ID: fc04fb6c

Topology Learning Network (TLNet)

Processed: 24-03-29. Download link: fc04fb6c422ab9e486a09e2079a1fc74-rootsift-upright-2k.json

This page ranks the submission against all other submissions that use the same number of keypoints, regardless of descriptor size.
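The ranking rule above can be sketched as follows. This is a minimal illustration, not the leaderboard's code. The `Entry` record and its fields are hypothetical. A higher score is assumed to be better, and tied entries are assumed to share the better rank.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Entry:
    # Hypothetical leaderboard record; field names are illustrative only.
    name: str
    num_keypoints: int
    descriptor_bytes: int
    score: float  # e.g. mAA at 10 degrees; higher is better (assumption)


def rank_in_category(target: Entry, entries: list[Entry]) -> tuple[int, int]:
    """Return (rank, category size) among entries with the same keypoint budget.

    Descriptor size is ignored, mirroring the page's category rule.
    Ties share the better rank (assumption).
    """
    category = [e for e in entries if e.num_keypoints == target.num_keypoints]
    better = sum(1 for e in category if e.score > target.score)
    return better + 1, len(category)


entries = [
    Entry("a", 2048, 512, 0.60),
    Entry("b", 2048, 128, 0.50),   # smaller descriptor, same category
    Entry("me", 2048, 512, 0.43),
    Entry("c", 8000, 512, 0.70),   # different keypoint budget: excluded
]
print(rank_in_category(entries[2], entries))  # (3, 3)
```

Entry "c" scores highest overall but is excluded because it uses a different keypoint budget. Entry "b" competes in the same category even though its descriptor is smaller.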

- Phototourism dataset: Stereo track / Multiview track

- Prague Parks dataset: Stereo track / Multiview track

- Google Urban dataset: Stereo track / Multiview track

Metadata

- Authors: szw (contact)

- Keypoint: sift-lowth

- Descriptor: rootsift-upright (128 float32: 512 bytes)

- Number of features: 2048

- Summary: RootSIFT upright with 2048 features, NN matching, TLNet as outlier filter, cv2-usacmagsac-f.

- Paper: N/A

- Website: N/A

- Processing date: 24-03-29
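The first two stages in the summary can be sketched in pure Python. The sketch assumes the standard RootSIFT recipe: L1-normalise each SIFT descriptor, then take the element-wise square root. Matching is assumed to be brute-force nearest-neighbour on squared L2 distance, with no ratio test or mutual check. The helper names are hypothetical, and the TLNet filter and the MAGSAC estimator are not modelled. The last helper checks the stated descriptor size of 128 float32 values.

```python
from __future__ import annotations

import math
import struct


def rootsift(desc: list[float], eps: float = 1e-7) -> list[float]:
    """Convert one SIFT descriptor to RootSIFT.

    L1-normalise, then take the element-wise square root, so that the
    Euclidean distance between RootSIFT vectors corresponds to comparing
    the original SIFT histograms with the Hellinger kernel.
    """
    l1 = sum(abs(v) for v in desc) + eps  # eps avoids division by zero
    return [math.sqrt(abs(v) / l1) for v in desc]


def nn_match(desc_a: list[list[float]], desc_b: list[list[float]]) -> list[tuple[int, int]]:
    """Plain nearest-neighbour matching: each descriptor in A gets its closest one in B.

    No ratio test or mutual check is applied, so every descriptor in A
    produces exactly one raw match.
    """
    if not desc_b:
        return []
    matches = []
    for i, da in enumerate(desc_a):
        # Squared L2 distance to every descriptor in B.
        dists = [sum((x - y) ** 2 for x, y in zip(da, db)) for db in desc_b]
        j = min(range(len(desc_b)), key=dists.__getitem__)
        matches.append((i, j))
    return matches


def descriptor_nbytes(dim: int = 128) -> int:
    # '<f' is an IEEE 754 float32: 4 bytes in struct's standard size.
    return dim * struct.calcsize("<f")


print(descriptor_nbytes())  # 512
```

Every descriptor in the first image receives exactly one partner, so a matcher like this yields as many raw matches as features. That fits the raw-match counts of about 2048 in the tables. That the submission matched this way is an inference; the page does not state it.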

Phototourism dataset / Stereo track

mAA at 10 degrees: 0.43410 (±0.00000 over 1 run(s) / ±0.16560 over 9 scenes)
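For reference, a stereo mAA score of this kind can be computed as sketched below. The sketch makes two assumptions, following the usual Image Matching Challenge protocol. First, each image pair's pose error is the larger of its rotation and translation angular errors. Second, mAA at X degrees averages the fraction of pairs below each integer threshold from 1 to X degrees. The function names are hypothetical, and the use of a strict comparison is also an assumption.

```python
from __future__ import annotations


def pose_error_deg(rot_err_deg: float, trans_err_deg: float) -> float:
    # A pair counts as accurate only if both rotation and translation are.
    return max(rot_err_deg, trans_err_deg)


def mean_average_accuracy(errors_deg: list[float], max_threshold_deg: int = 10) -> float:
    """Average, over thresholds 1..max_threshold_deg degrees, of the fraction of pairs under each."""
    if not errors_deg:
        raise ValueError("need at least one pose error")
    thresholds = range(1, max_threshold_deg + 1)
    accuracies = [sum(e < t for e in errors_deg) / len(errors_deg) for t in thresholds]
    return sum(accuracies) / len(accuracies)


errors = [pose_error_deg(0.3, 0.5), pose_error_deg(1.2, 2.5), pose_error_deg(4.0, 20.0)]
print(round(mean_average_accuracy(errors), 4))     # 0.6
print(round(mean_average_accuracy(errors, 5), 4))  # 0.5333
```

Passing `max_threshold_deg=5` gives the mAA(5°) variant. Accuracy never decreases as the threshold grows, so for the same errors mAA(5°) can never exceed mAA(10°). This matches every row of the tables.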

Rank (per category): 61 (of 87)

| Scene | Features | Matches (raw) | Matches (final) | Repeatability @ 3 px | Matching score @ 3 px | mAA(5°) | mAA(10°) |
|---|---|---|---|---|---|---|---|
| BM (British Museum) | 2048.0 | 2048.0 | 206.1 | 0.464 (rank 44/87) | 0.886 (rank 53/87) | 0.05931 ± 0.00000 (rank 81/87) | 0.12138 ± 0.00000 (rank 80/87) |
| FCS (Florence Cathedral Side) | 2048.0 | 2048.0 | 143.4 | 0.358 (rank 72/87) | 0.834 (rank 65/87) | 0.48053 ± 0.00000 (rank 62/87) | 0.60421 ± 0.00000 (rank 64/87) |
| LMS (Lincoln Memorial Statue) | 2048.0 | 2048.0 | 165.5 | 0.438 (rank 31/87) | 0.718 (rank 6/87) | 0.51538 ± 0.00000 (rank 51/87) | 0.64913 ± 0.00000 (rank 49/87) |
| LB (London Bridge) | 2048.0 | 2048.0 | 81.1 | 0.405 (rank 28/87) | 0.767 (rank 6/87) | 0.42857 ± 0.00000 (rank 81/87) | 0.52177 ± 0.00000 (rank 50/87) |
| MC (Milan Cathedral) | 2048.0 | 2048.0 | 99.8 | 0.391 (rank 67/87) | 0.923 (rank 18/87) | 0.33893 ± 0.00000 (rank 81/87) | 0.46641 ± 0.00000 (rank 46/87) |
| MR (Mount Rushmore) | 2045.9 | 2046.4 | 128.1 | 0.439 (rank 14/87) | 0.876 (rank 64/87) | 0.22160 ± 0.00000 (rank 54/87) | 0.34383 ± 0.00000 (rank 43/87) |
| PSM (Piazza San Marco) | 2048.0 | 2048.0 | 102.4 | 0.365 (rank 21/87) | 0.453 (rank 71/87) | 0.14286 ± 0.00000 (rank 50/87) | 0.23077 ± 0.00000 (rank 54/87) |
| SF (Sagrada Familia) | 2048.0 | 2048.0 | 131.9 | 0.390 (rank 17/87) | 0.801 (rank 23/87) | 0.41977 ± 0.00000 (rank 54/87) | 0.55715 ± 0.00000 (rank 51/87) |
| SPC (Saint Paul's Cathedral) | 2048.0 | 2048.0 | 100.9 | 0.401 (rank 28/87) | 0.713 (rank 72/87) | 0.27851 ± 0.00000 (rank 72/87) | 0.41226 ± 0.00000 (rank 71/87) |
| Avg | 2047.8 | 2047.8 | 128.8 | 0.406 (rank 45/87) | 0.774 (rank 60/87) | 0.32061 ± 0.00000 (rank 60/87) | 0.43410 ± 0.00000 (rank 61/87) |
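The page does not detail how the repeatability and matching-score columns are computed from depth maps. The sketch below rests on three assumptions:

- Keypoints from image A have already been projected into image B, for example through depth and the relative pose.
- Repeatability is the fraction of projected keypoints that have some keypoint of B within 3 px.
- The matching score is the fraction of final matches whose projected keypoint lands within 3 px of its matched keypoint.

The last assumption fits the table. MS averages 0.774, while final matches are only about 128.8 / 2048 ≈ 0.06 of the features, so MS cannot be normalised by the feature count. All names are hypothetical, and keypoints without depth are not handled.

```python
from __future__ import annotations

import math


def _within(p: tuple[float, float], q: tuple[float, float], px: float) -> bool:
    # Euclidean pixel distance test.
    return math.hypot(p[0] - q[0], p[1] - q[1]) <= px


def repeatability(proj_kps_a: list[tuple[float, float]],
                  kps_b: list[tuple[float, float]], px: float = 3.0) -> float:
    """Fraction of A's projected keypoints with at least one keypoint of B within px pixels."""
    if not proj_kps_a:
        return 0.0
    hits = sum(1 for p in proj_kps_a if any(_within(p, q, px) for q in kps_b))
    return hits / len(proj_kps_a)


def matching_score(proj_kps_a: list[tuple[float, float]],
                   kps_b: list[tuple[float, float]],
                   matches: list[tuple[int, int]], px: float = 3.0) -> float:
    """Fraction of matches (i, j) whose projected keypoint A[i] lies within px pixels of B[j]."""
    if not matches:
        return 0.0
    good = sum(1 for i, j in matches if _within(proj_kps_a[i], kps_b[j], px))
    return good / len(matches)


proj_a = [(0.0, 0.0), (10.0, 10.0), (50.0, 50.0)]
kps_b = [(1.0, 1.0), (10.0, 12.0), (100.0, 100.0)]
print(repeatability(proj_a, kps_b))                             # 2 of 3 keypoints repeat
print(matching_score(proj_a, kps_b, [(0, 0), (1, 2), (2, 1)]))  # 1 of 3 matches correct
```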

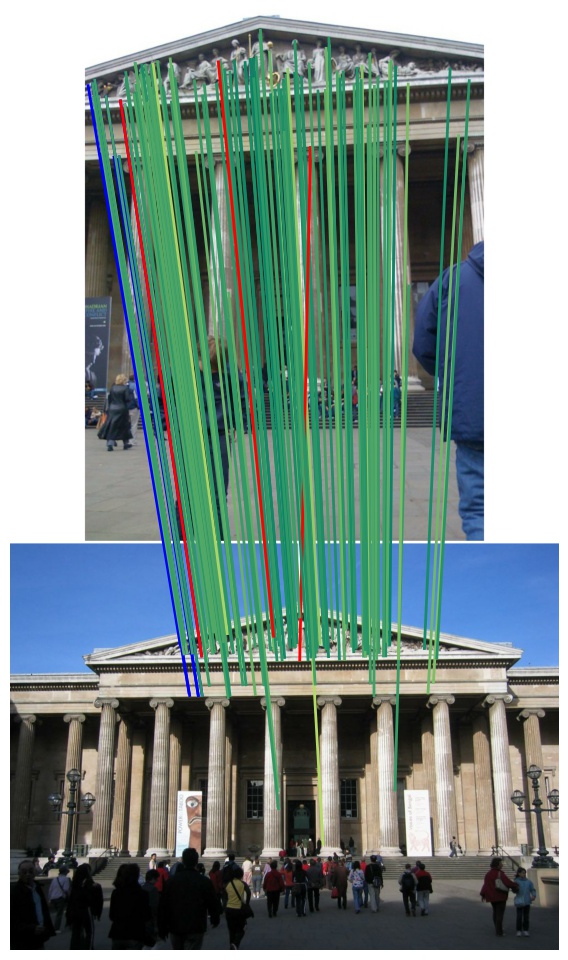

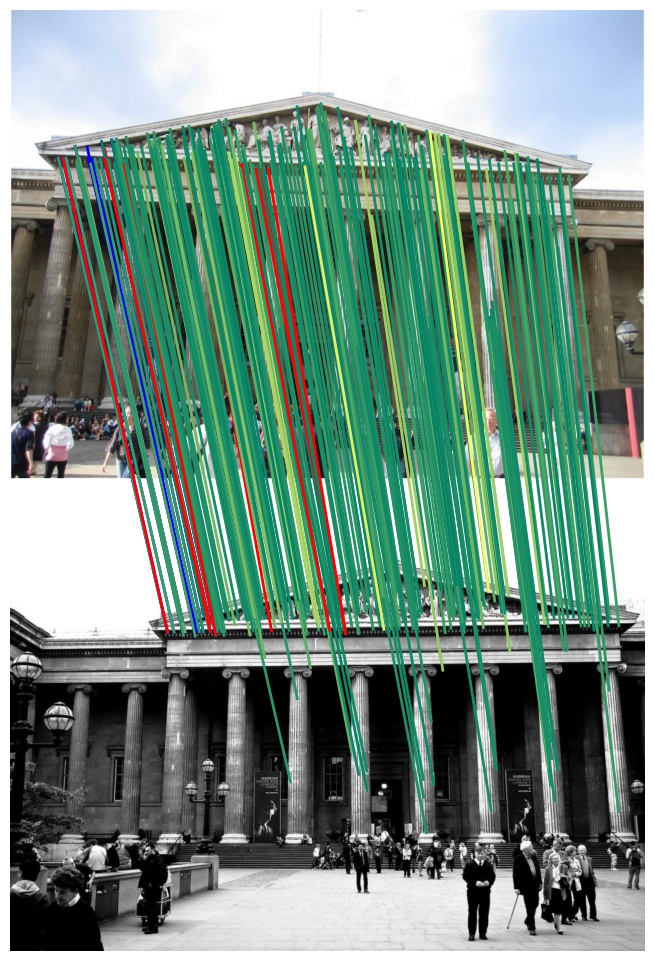

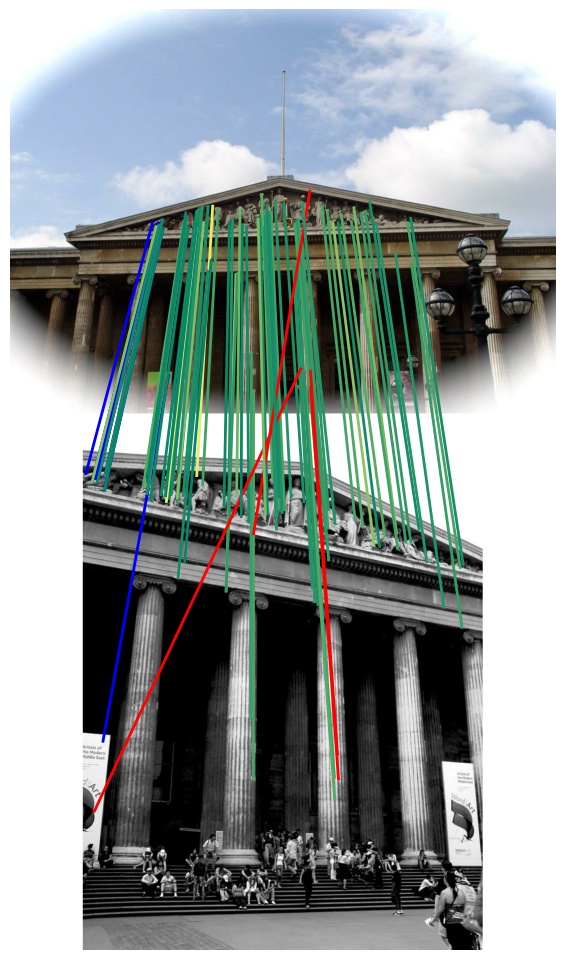

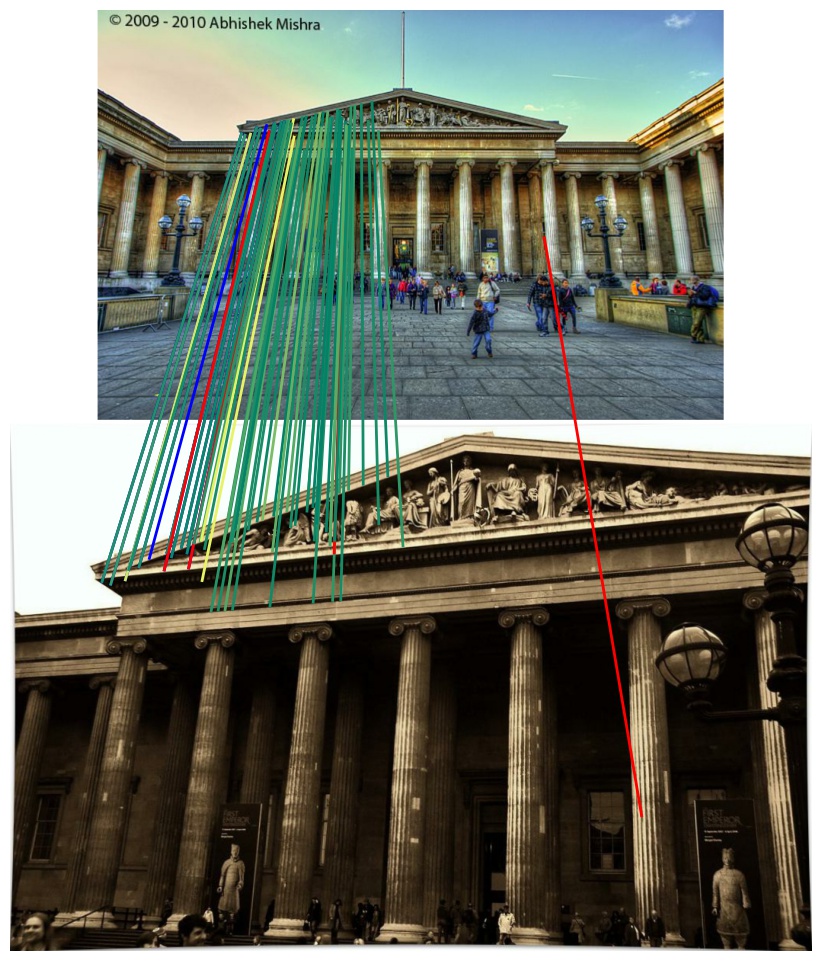

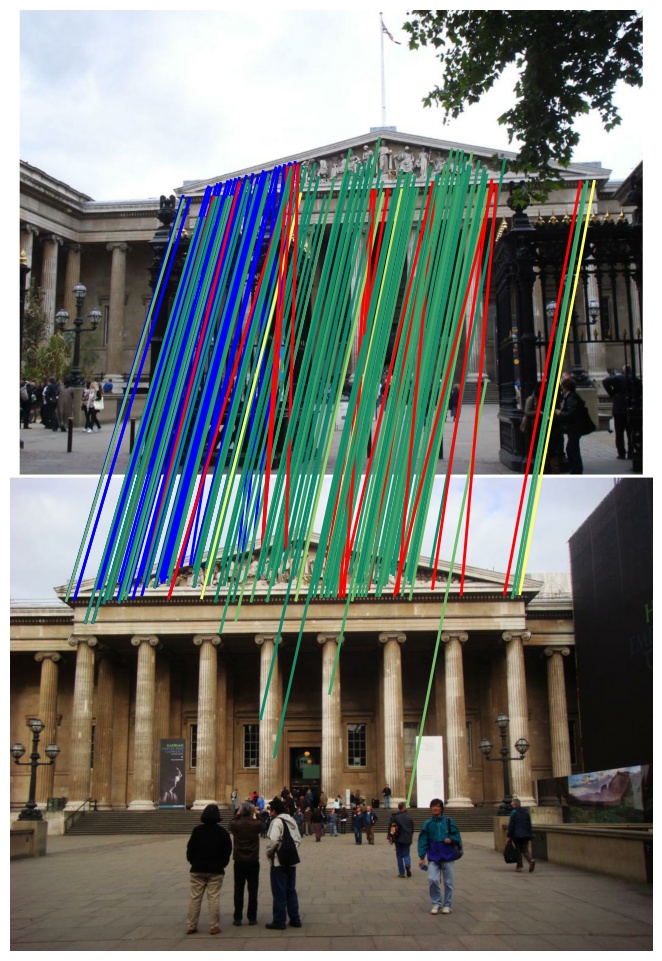

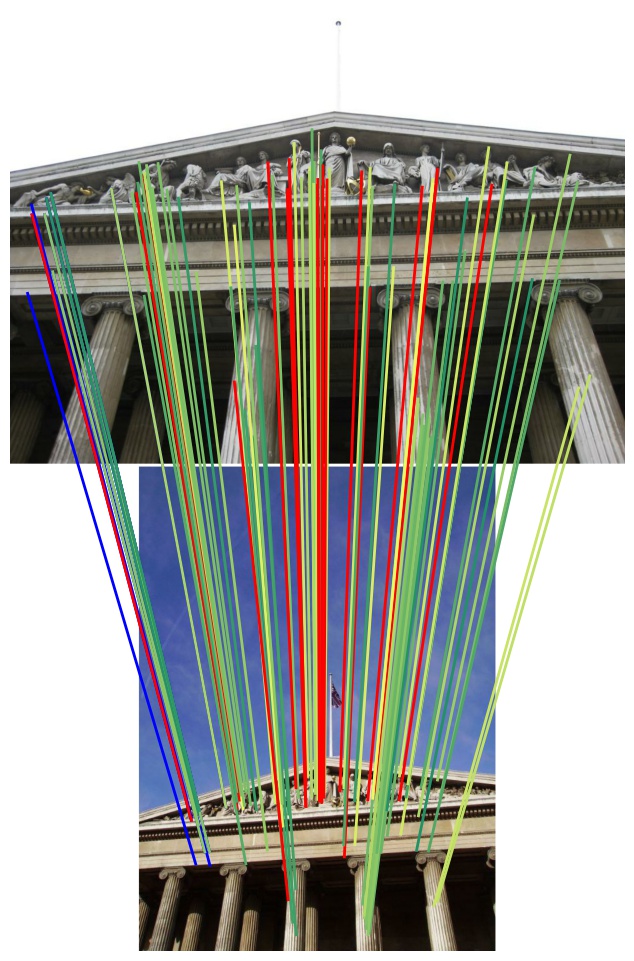

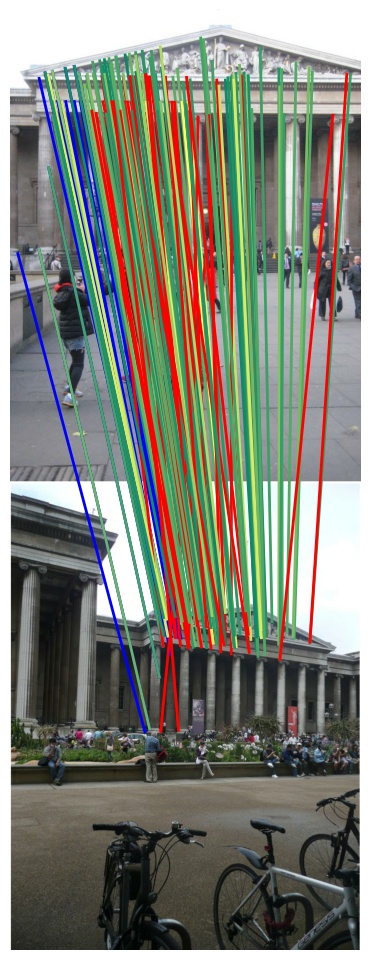

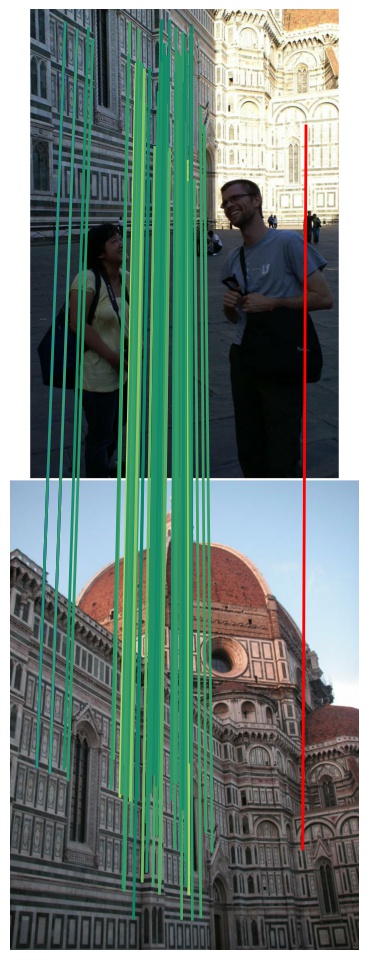

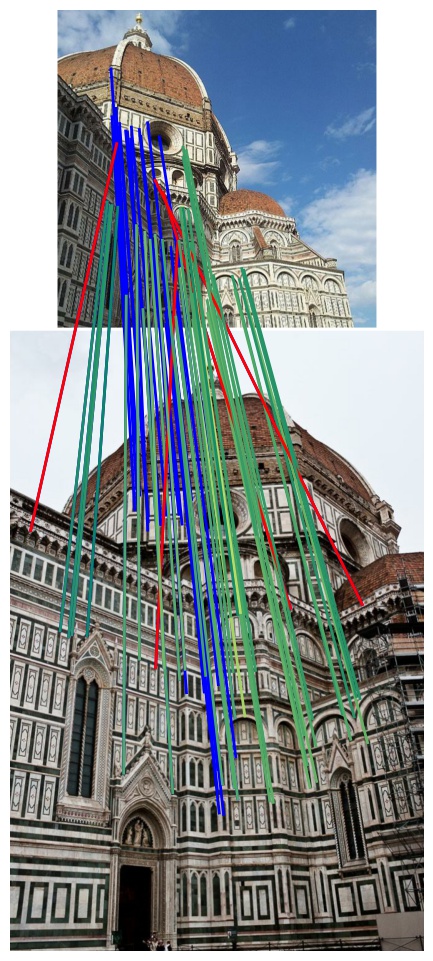

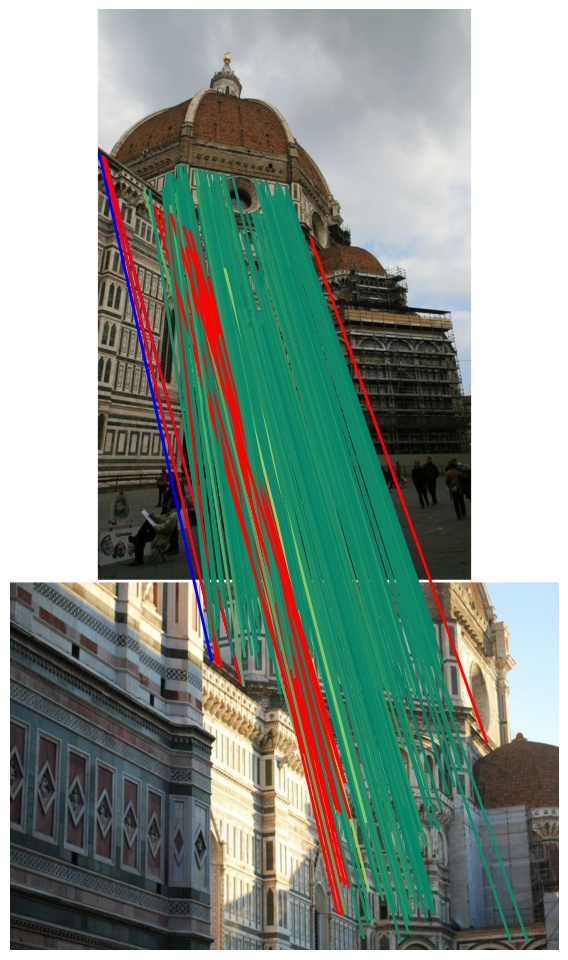

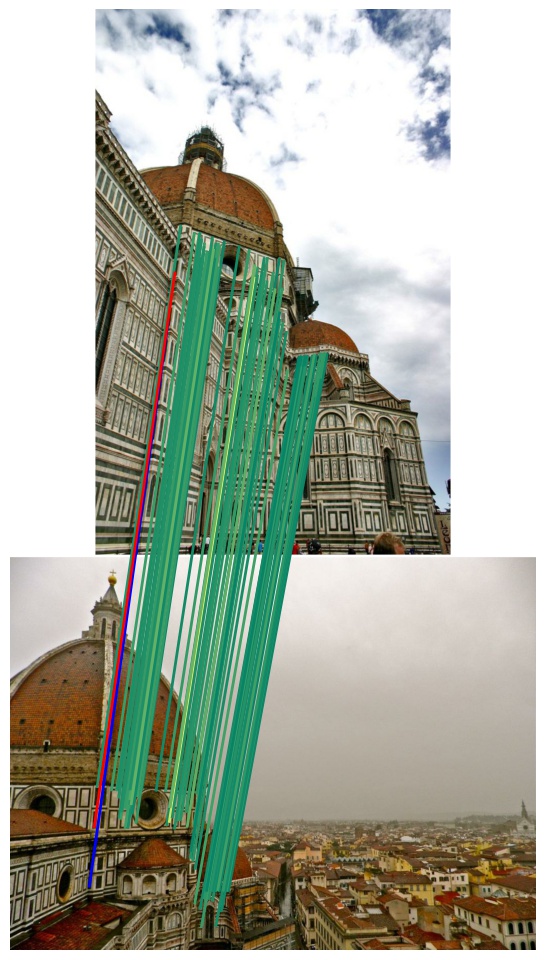

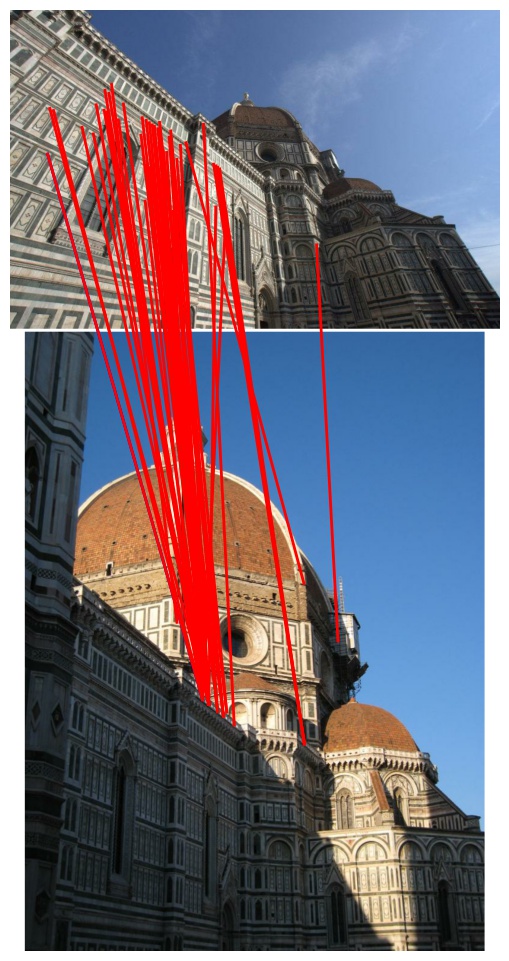

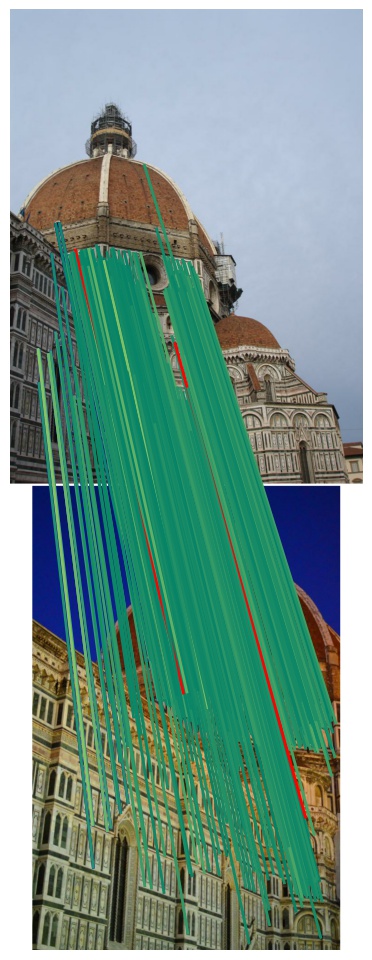

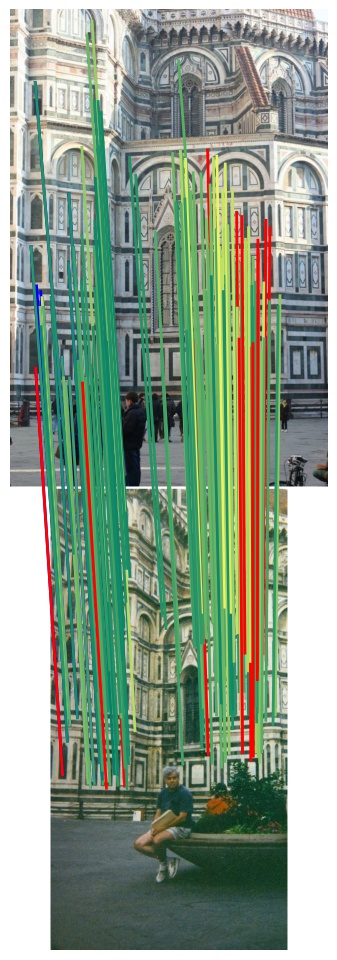

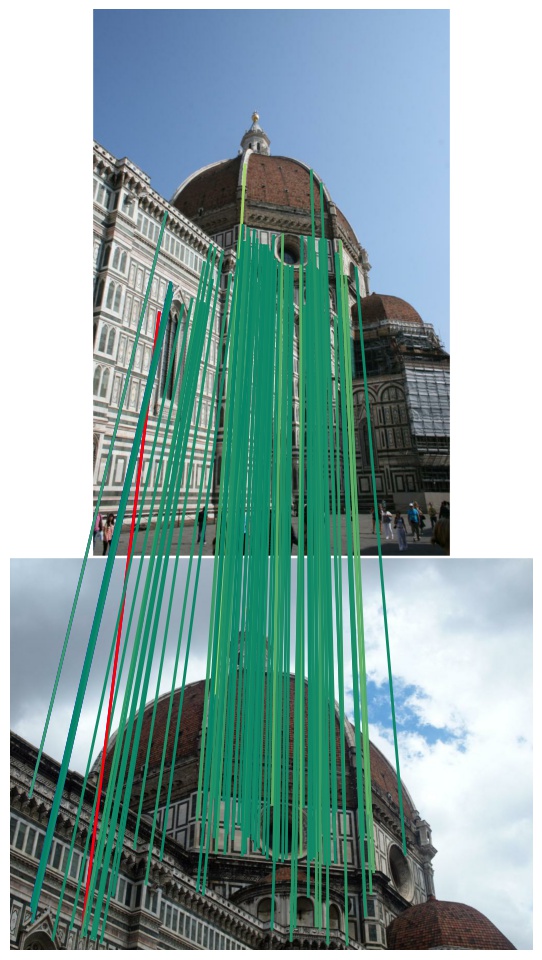

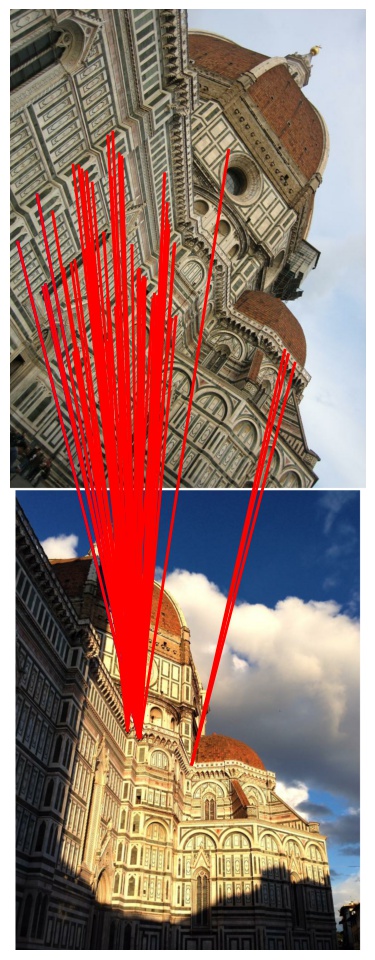

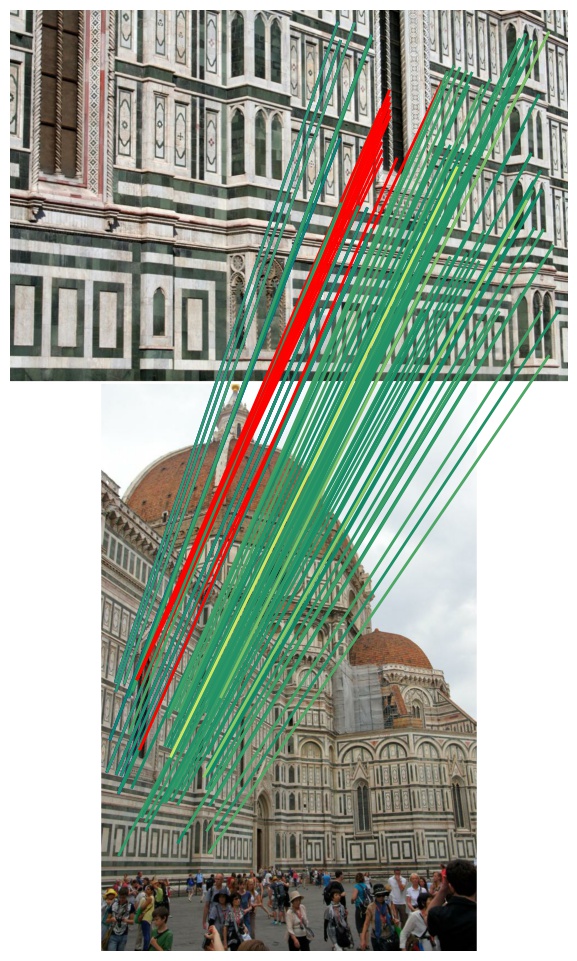

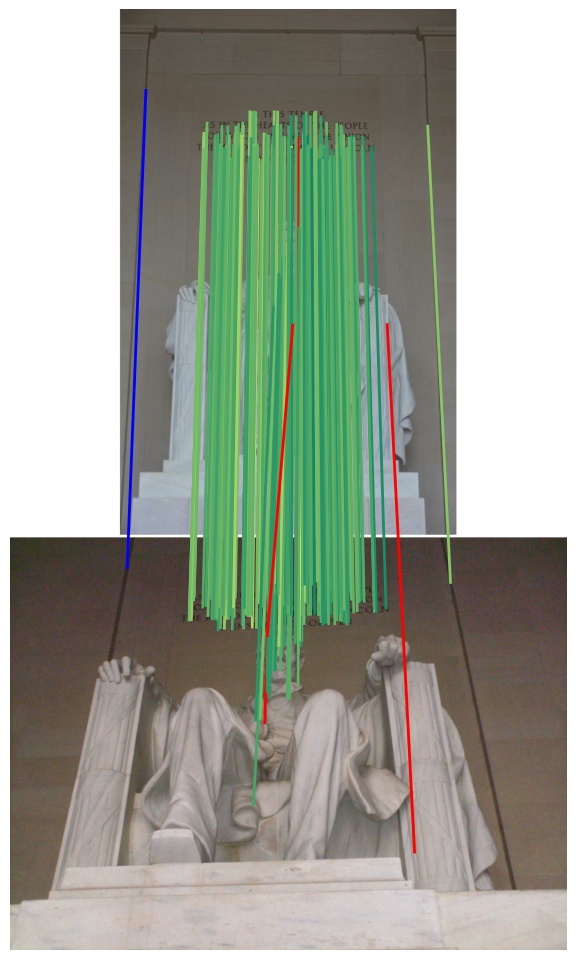

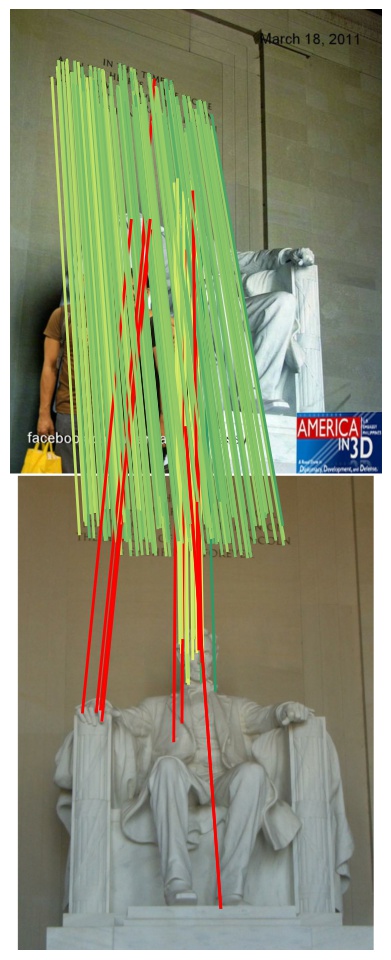

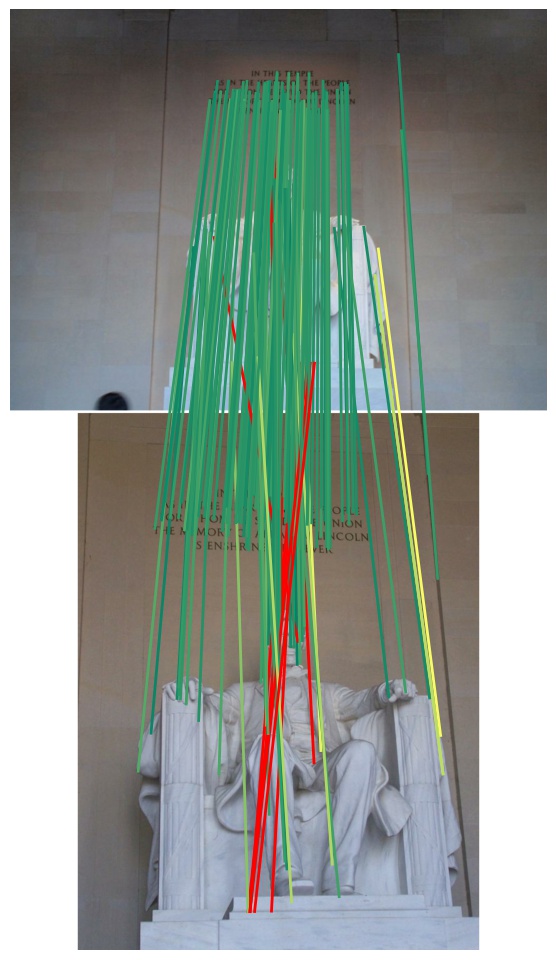

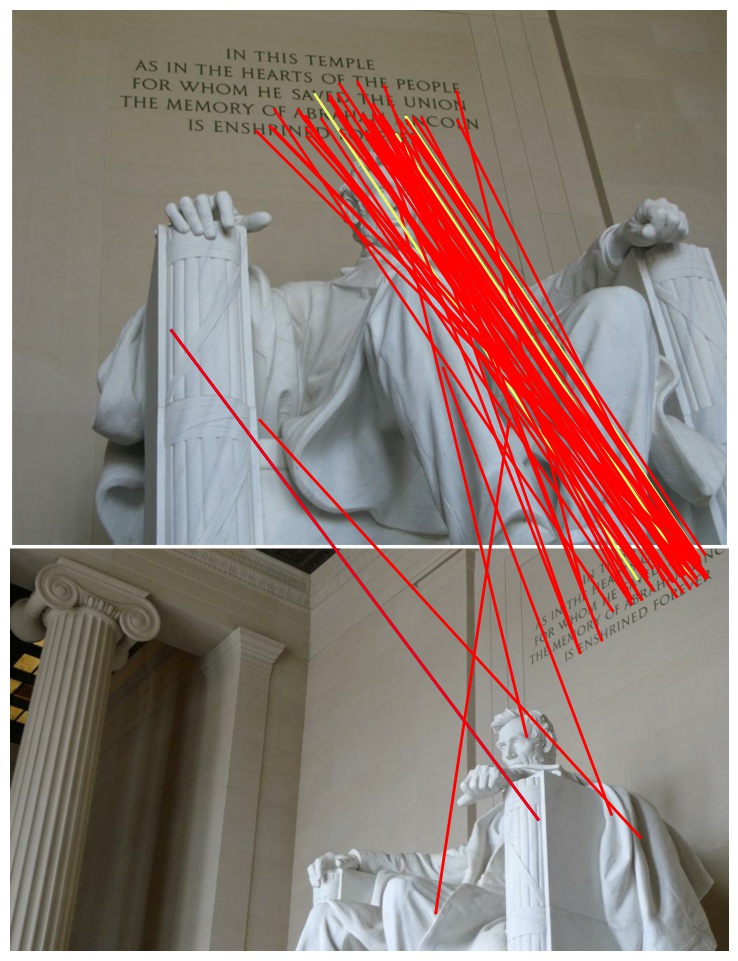

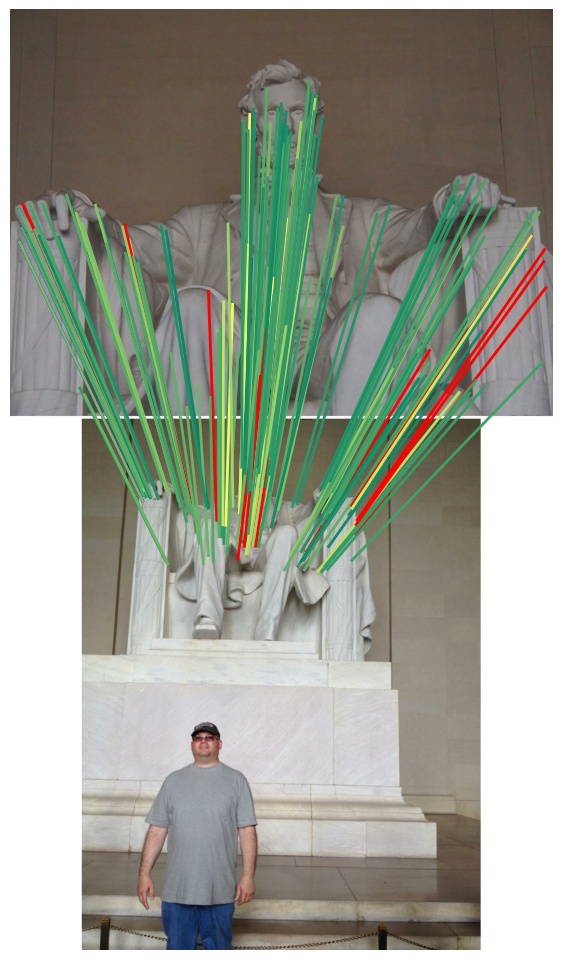

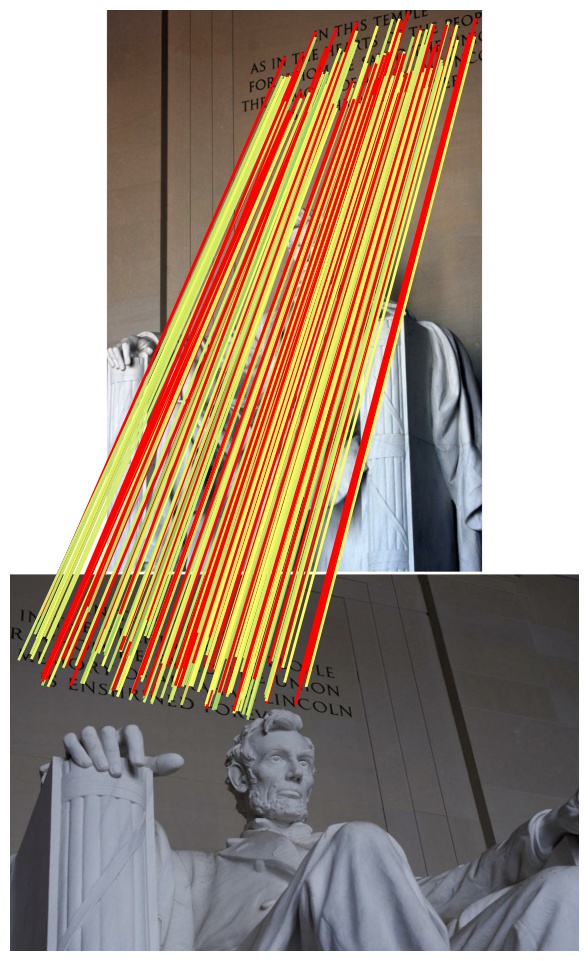

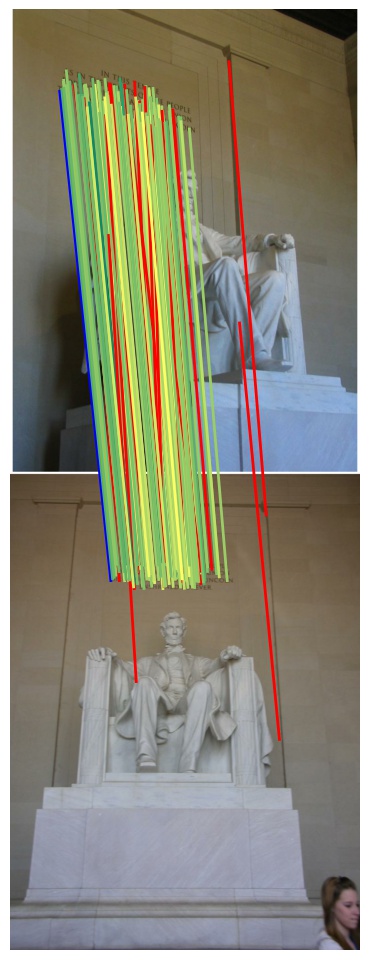

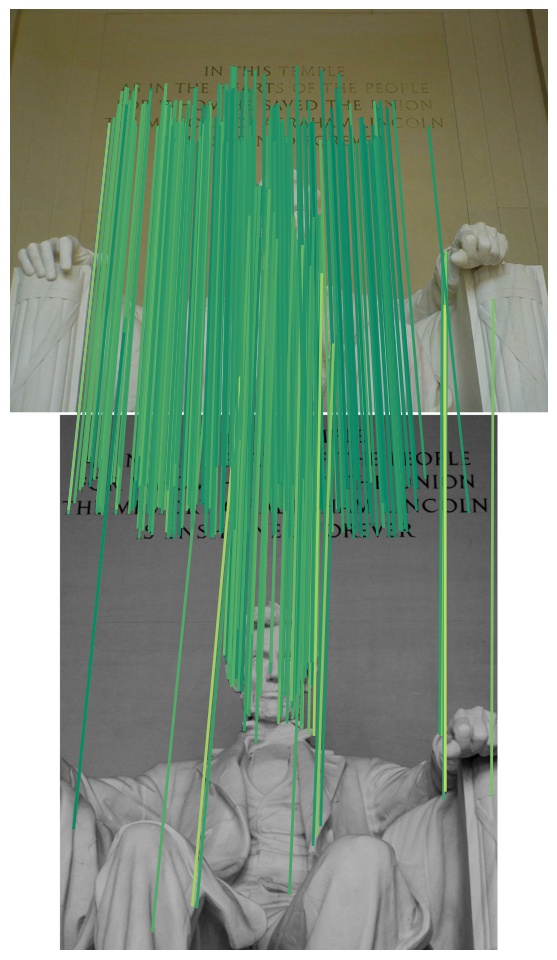

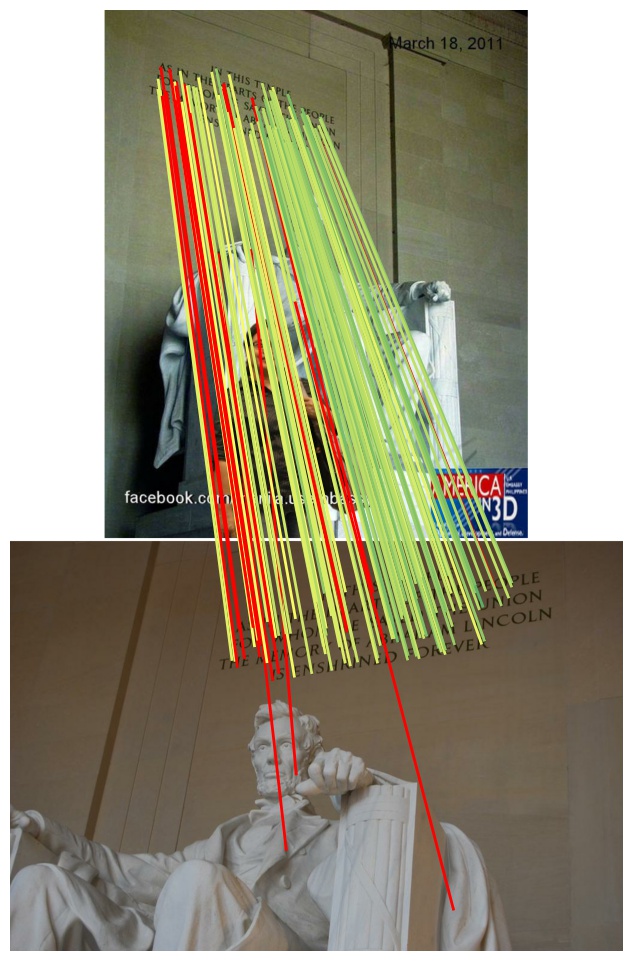

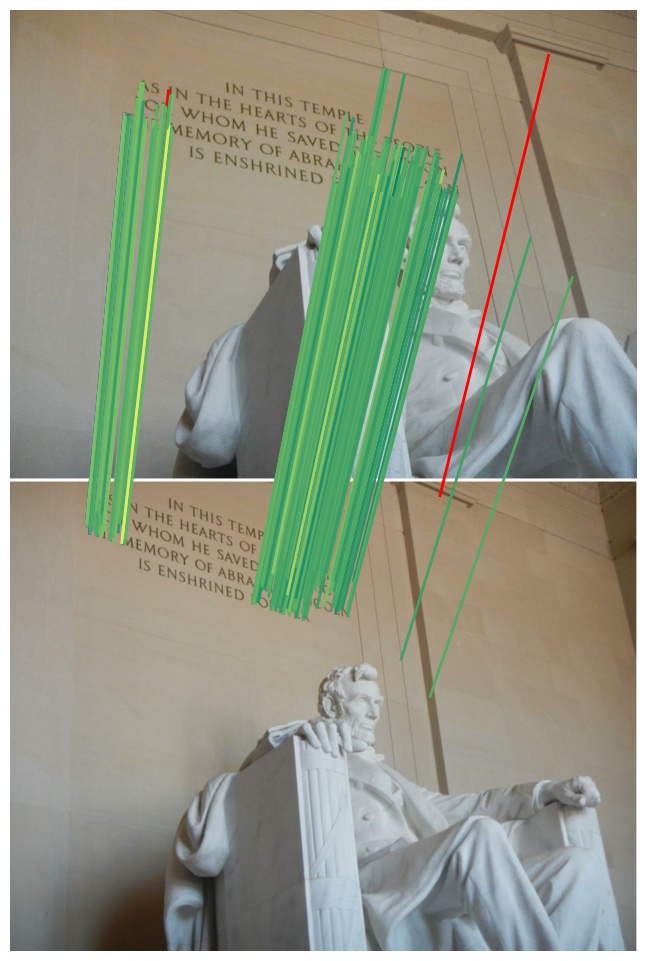

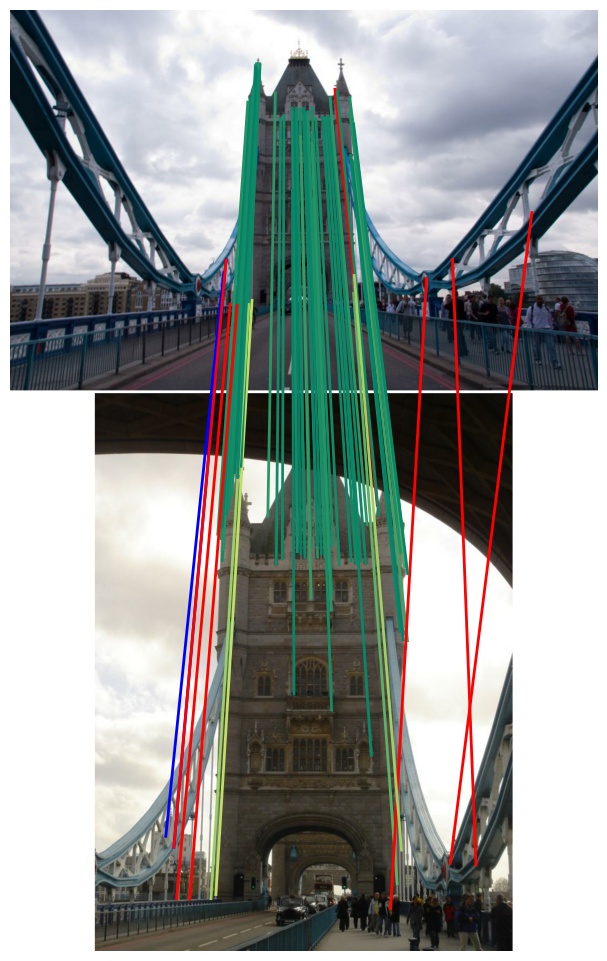

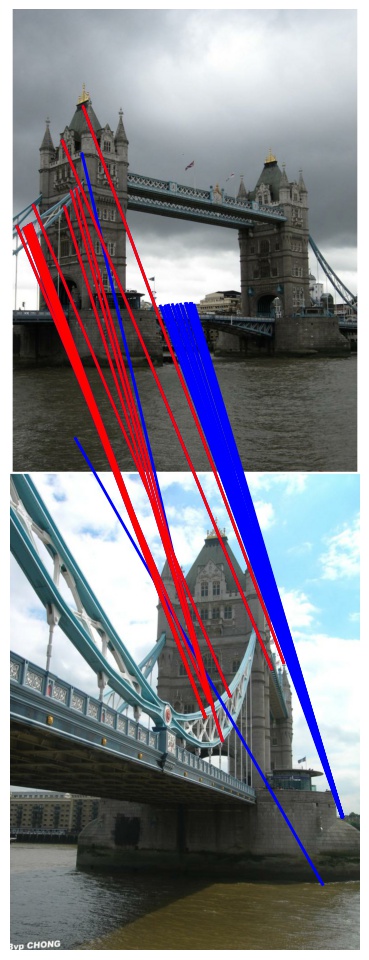

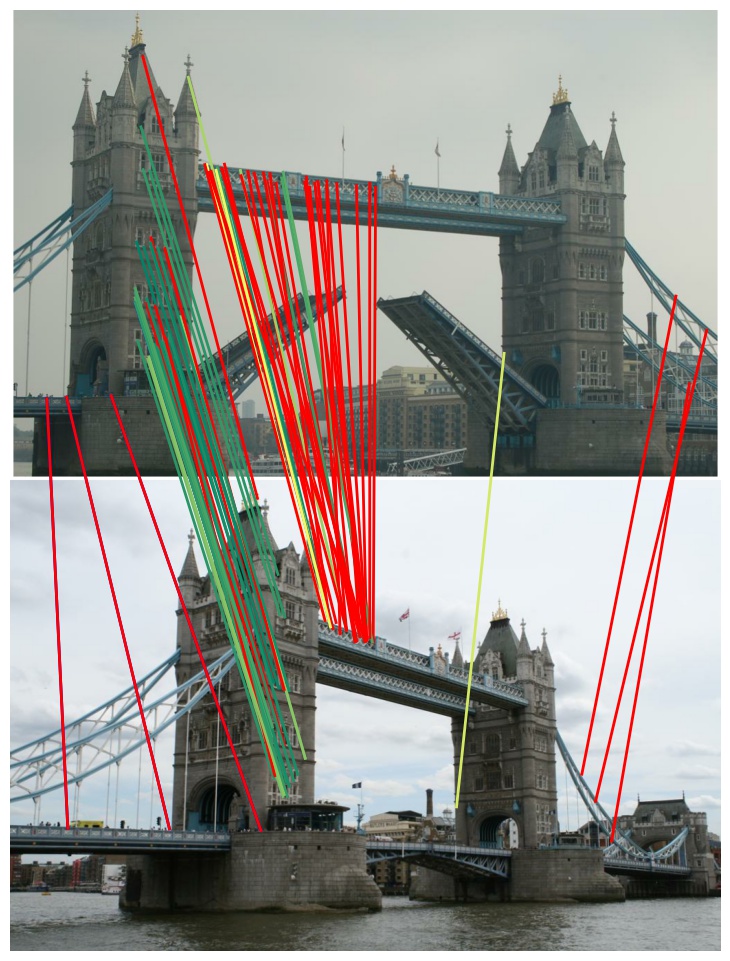

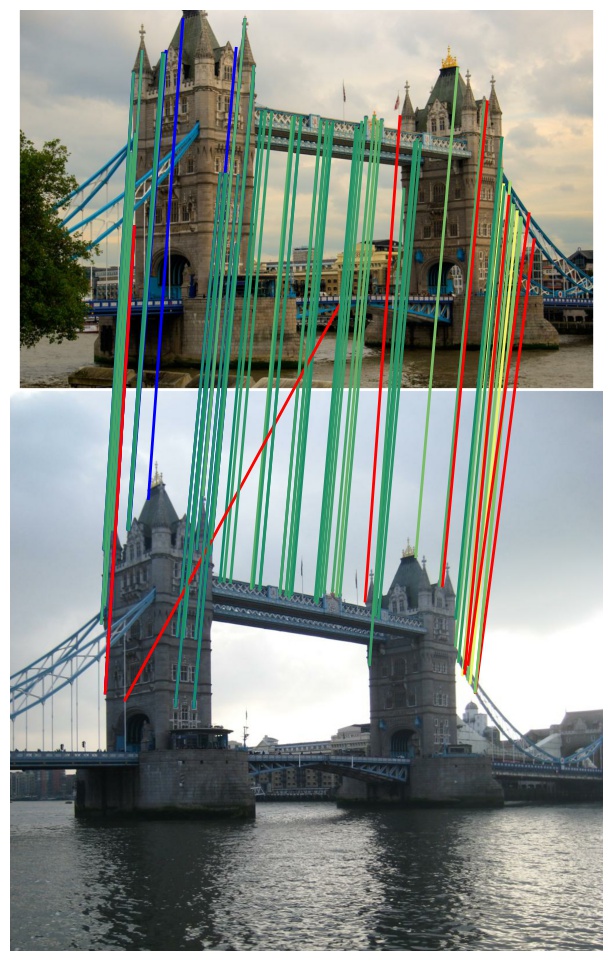

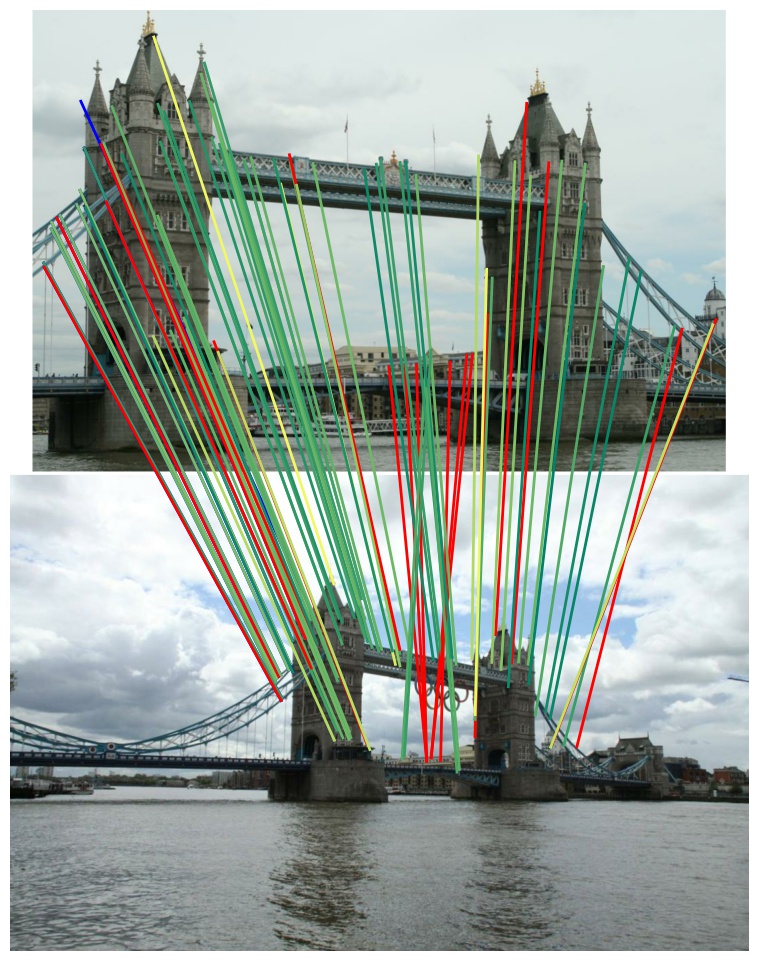

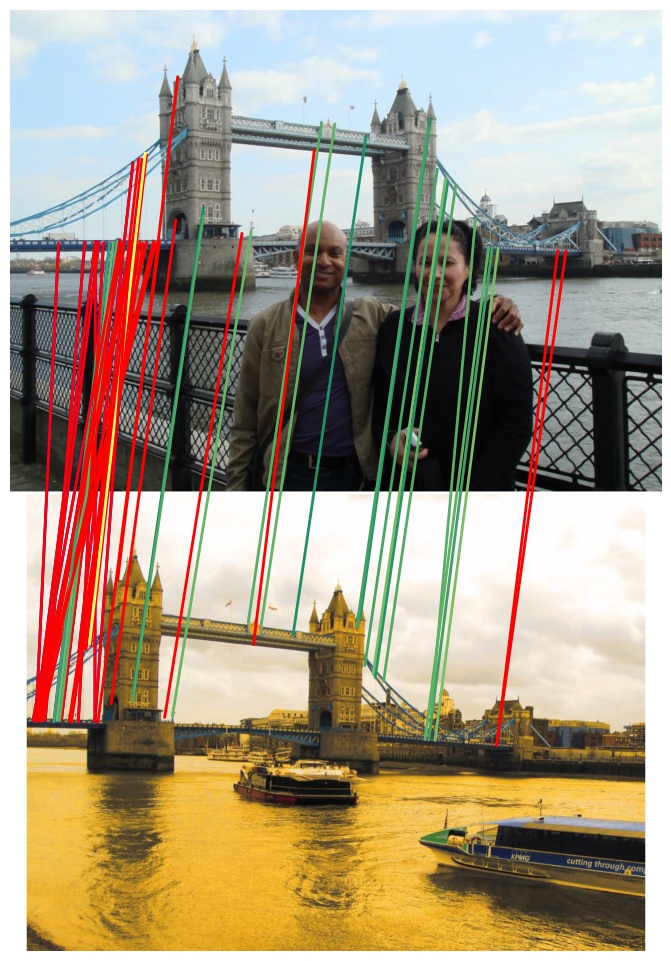

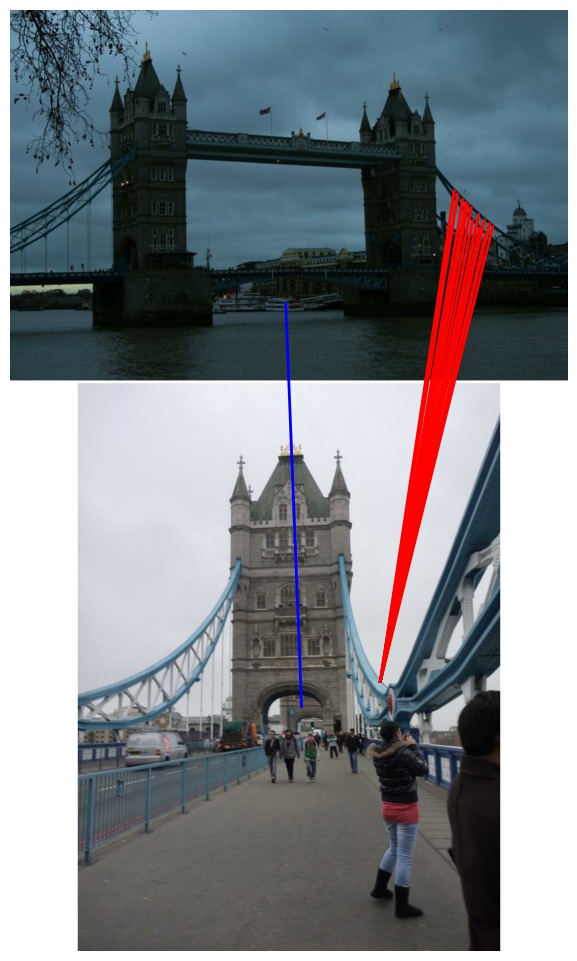

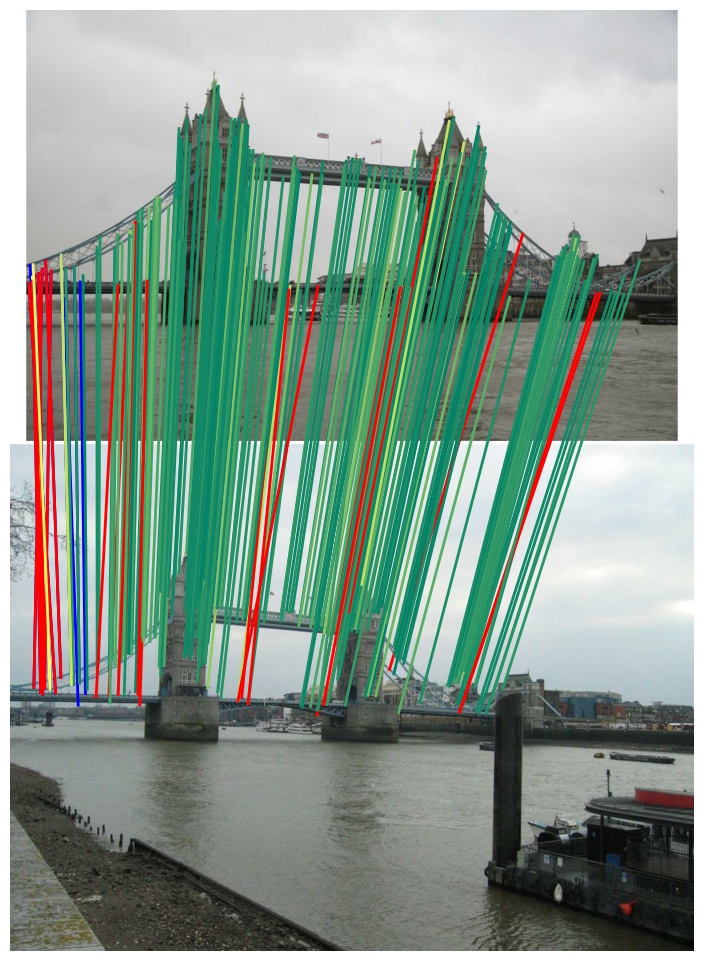

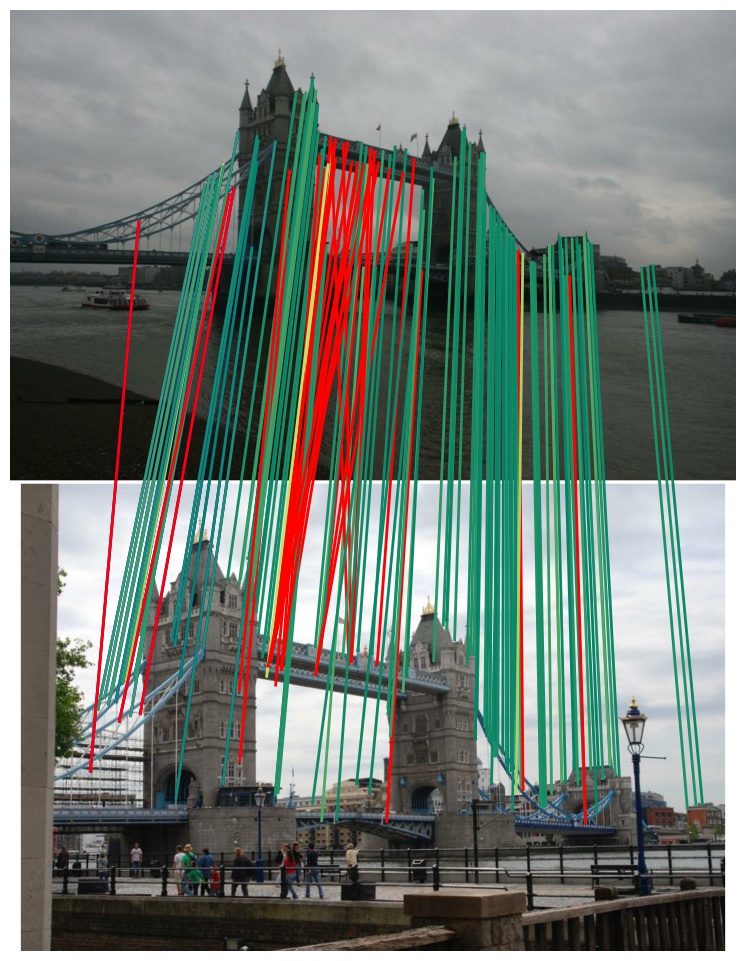

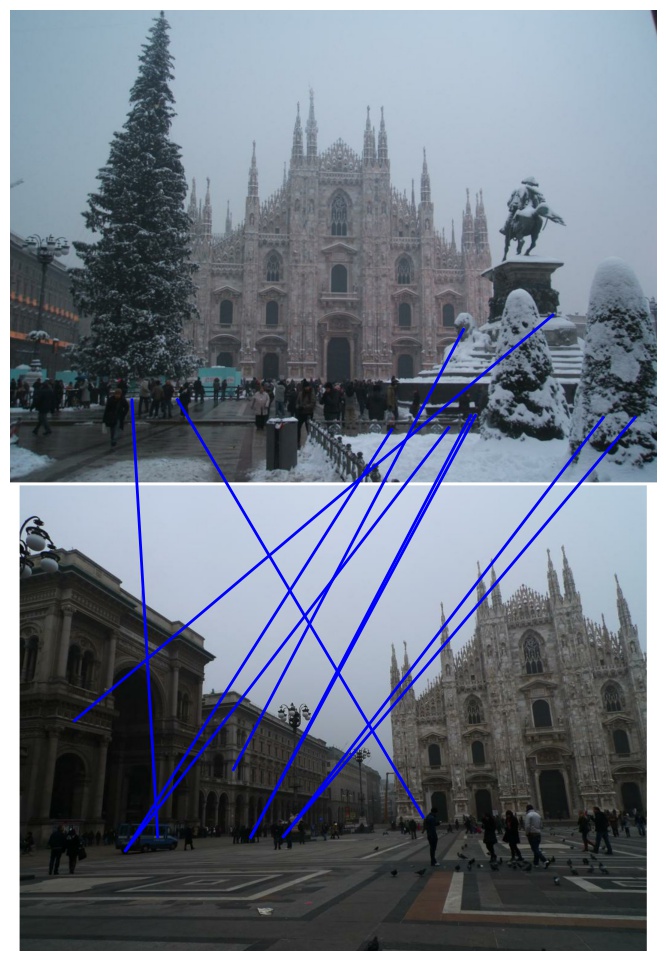

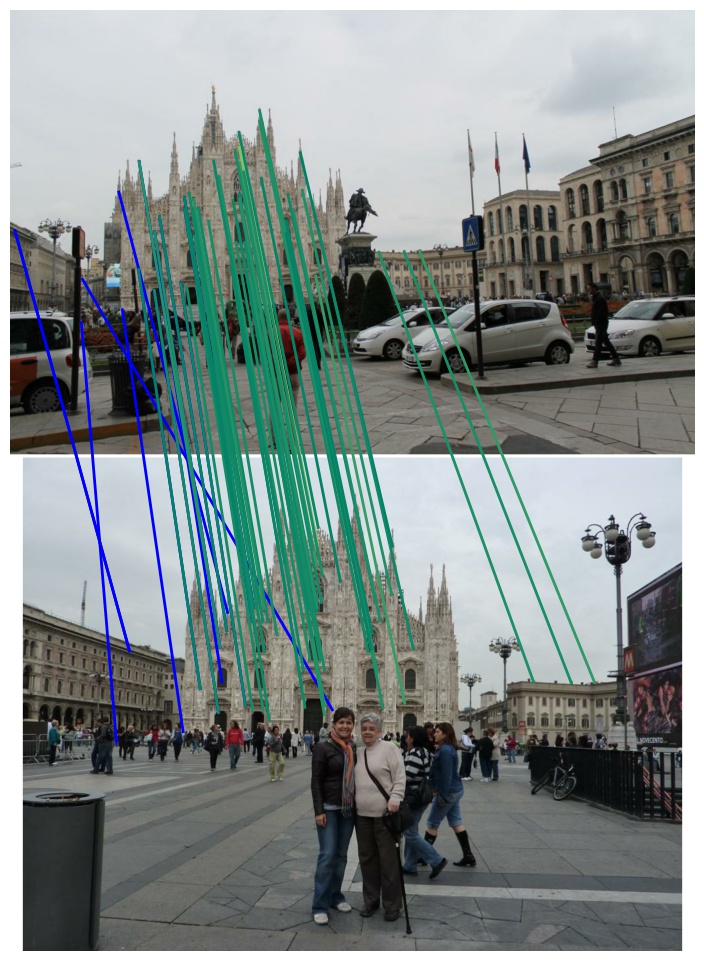

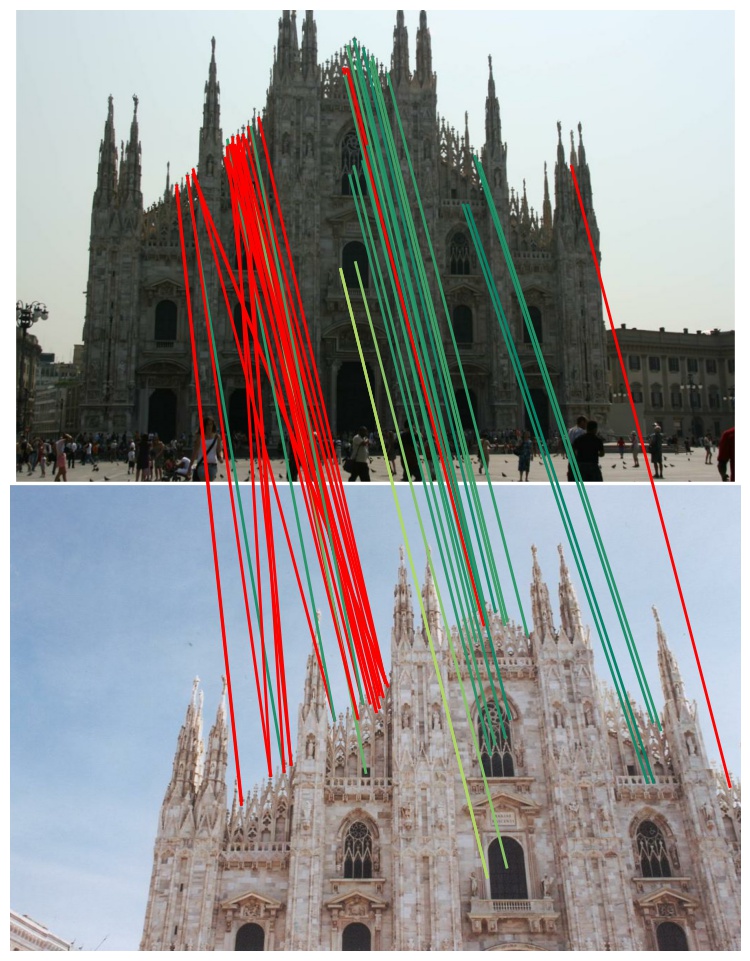

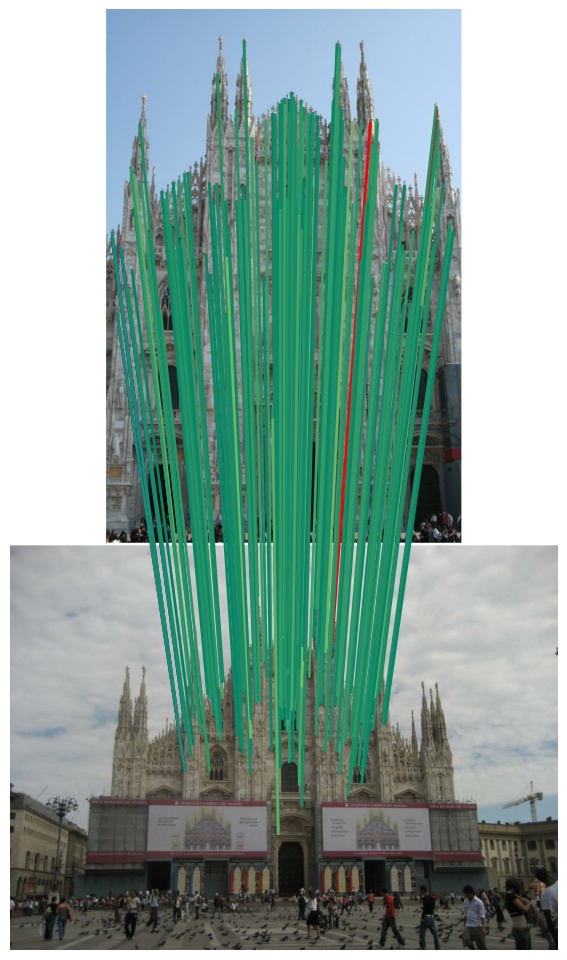

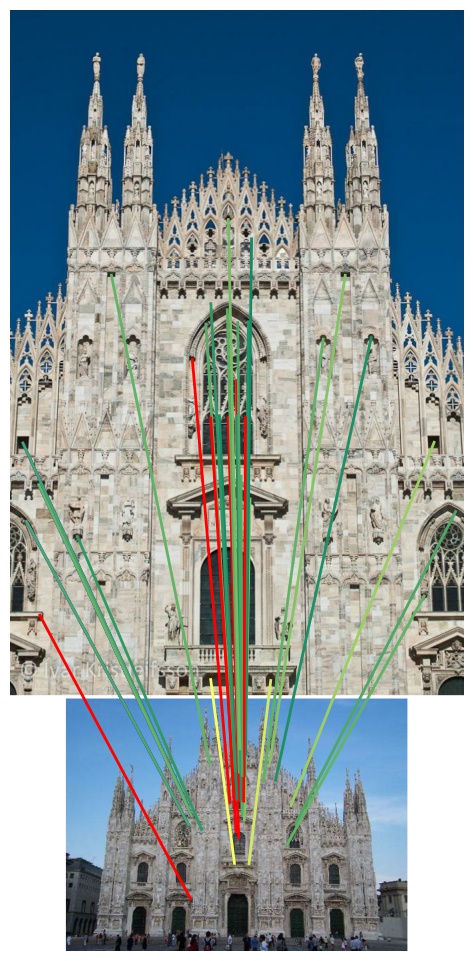

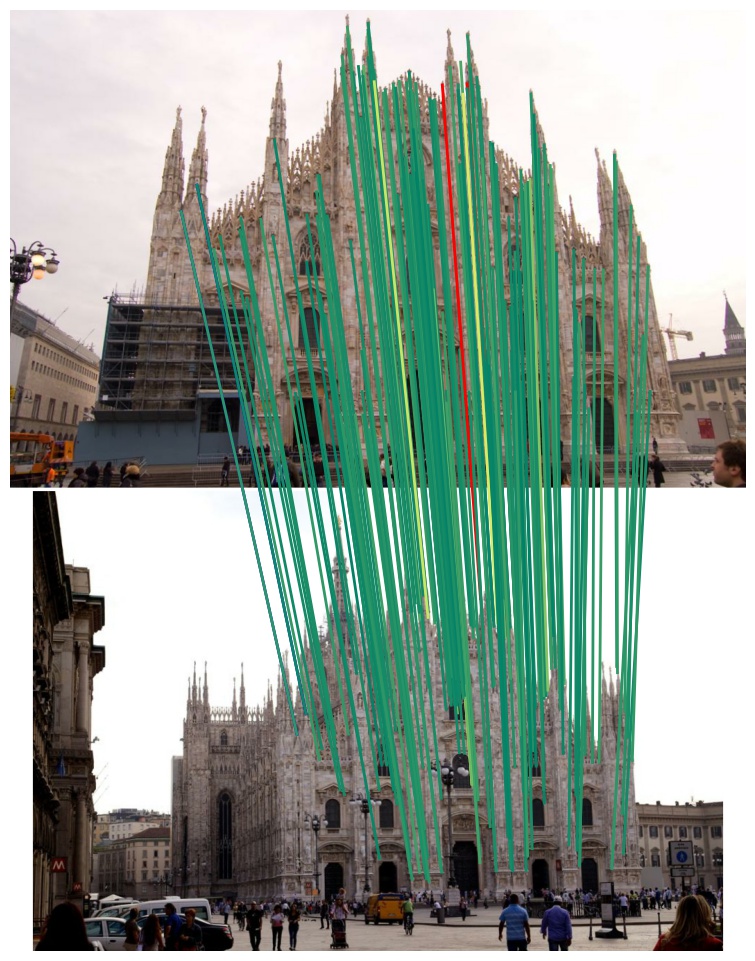

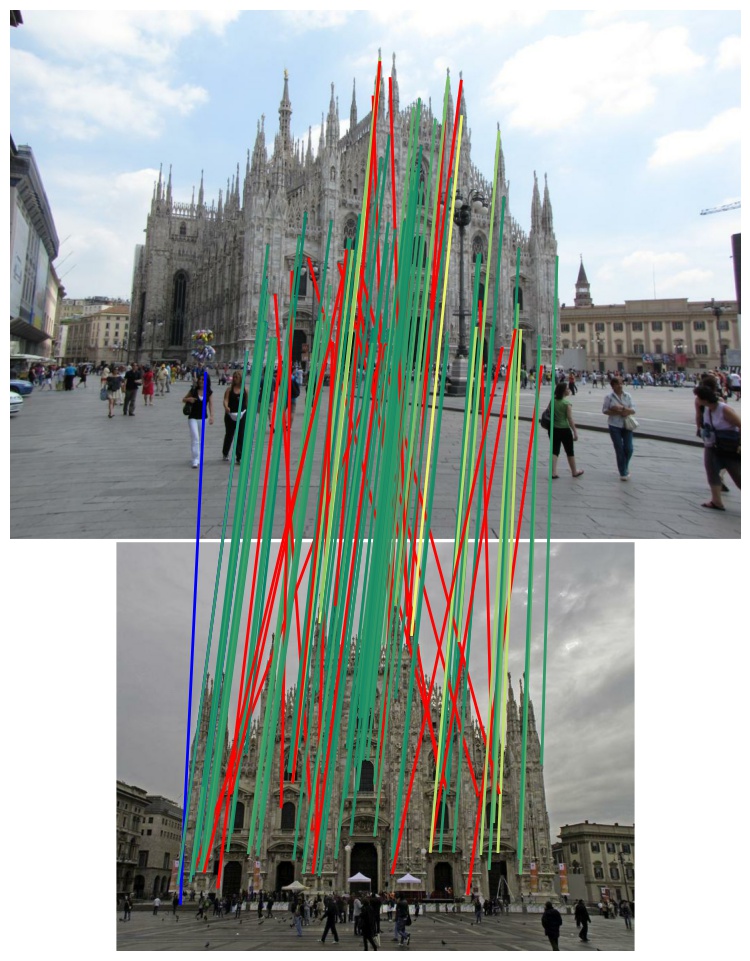

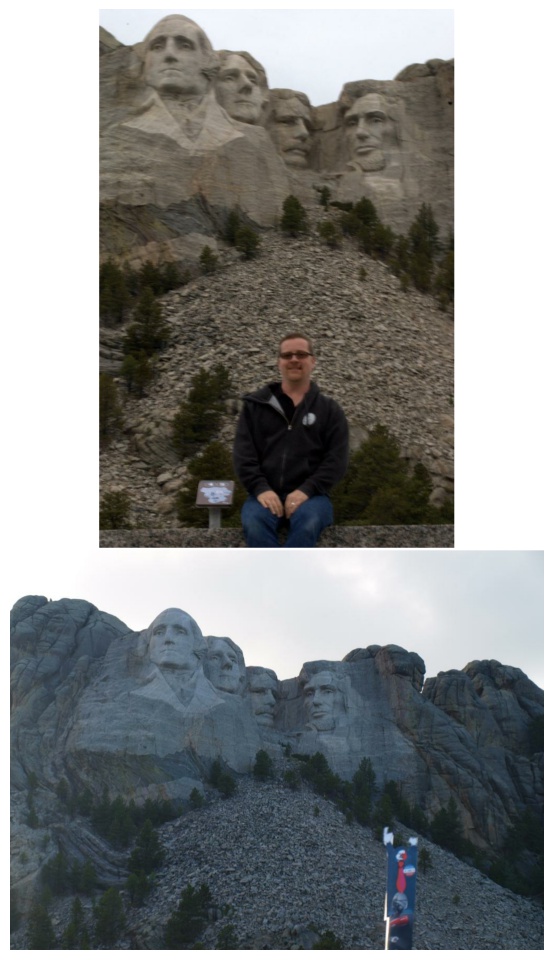

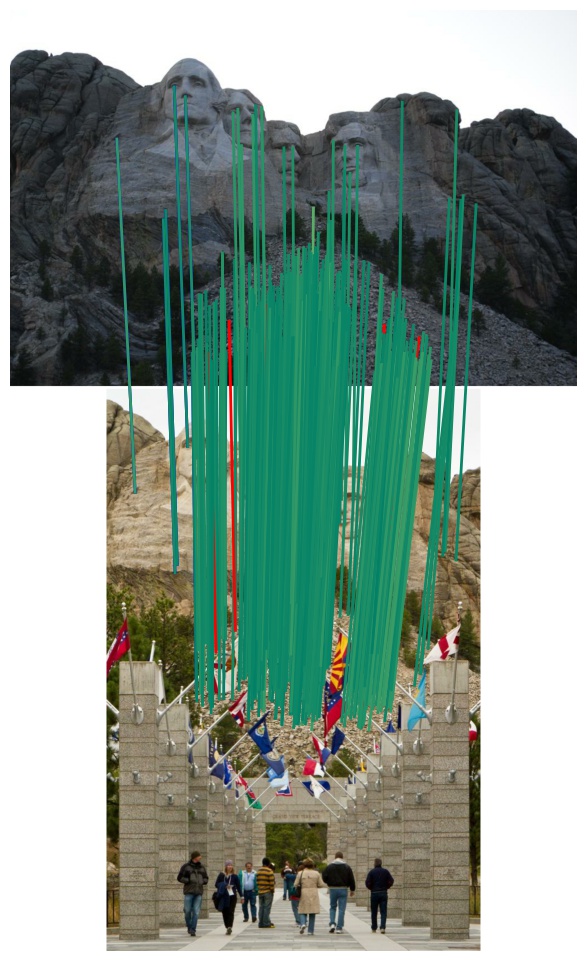

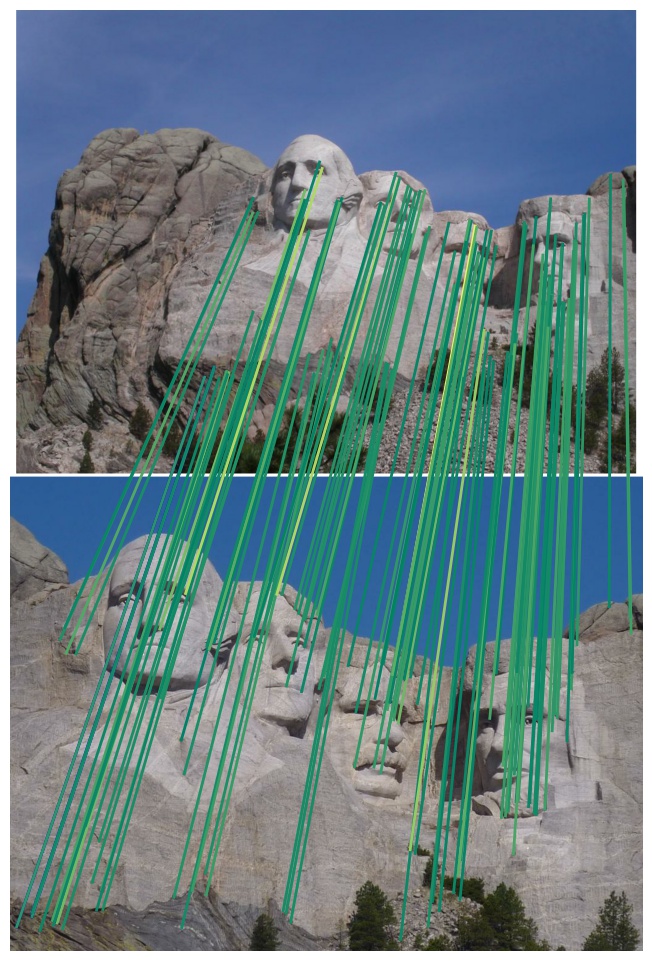

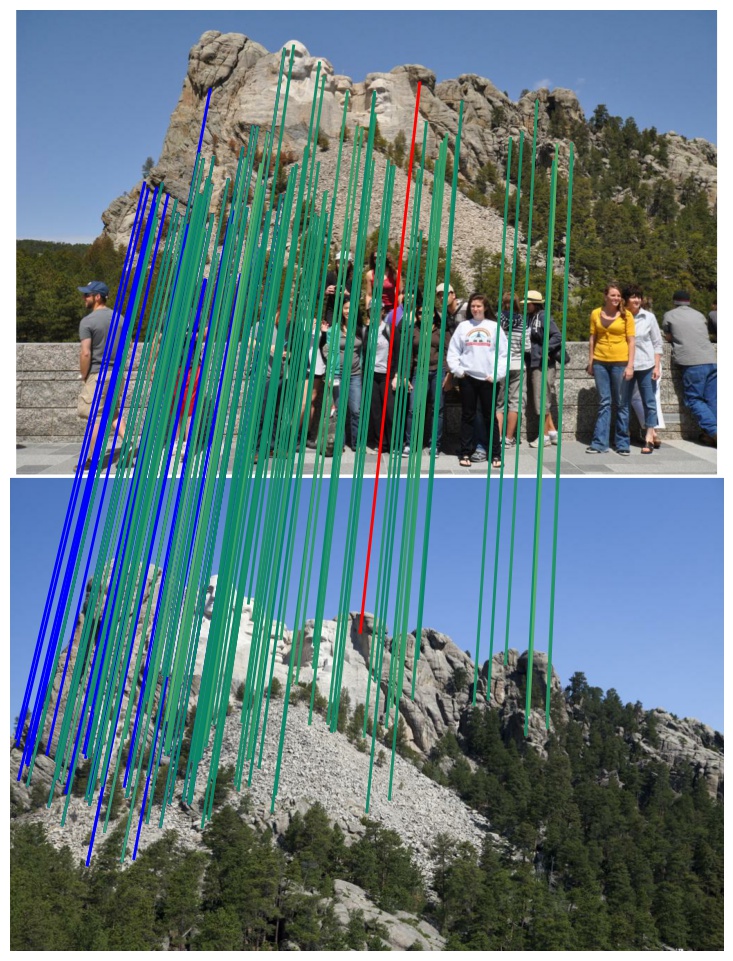

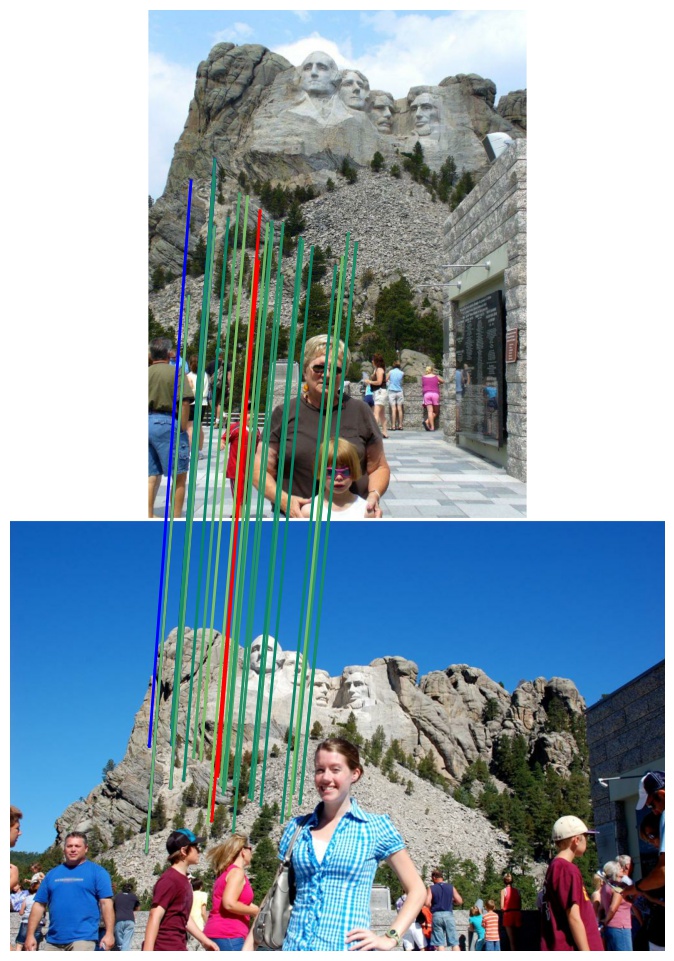

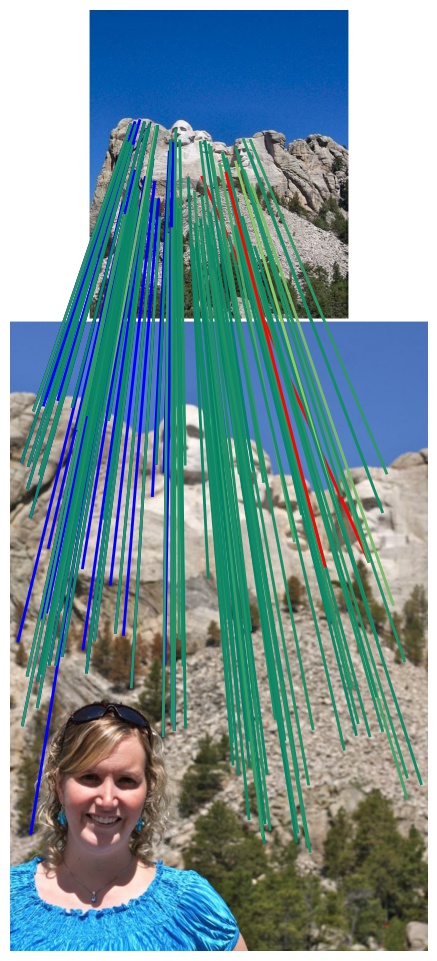

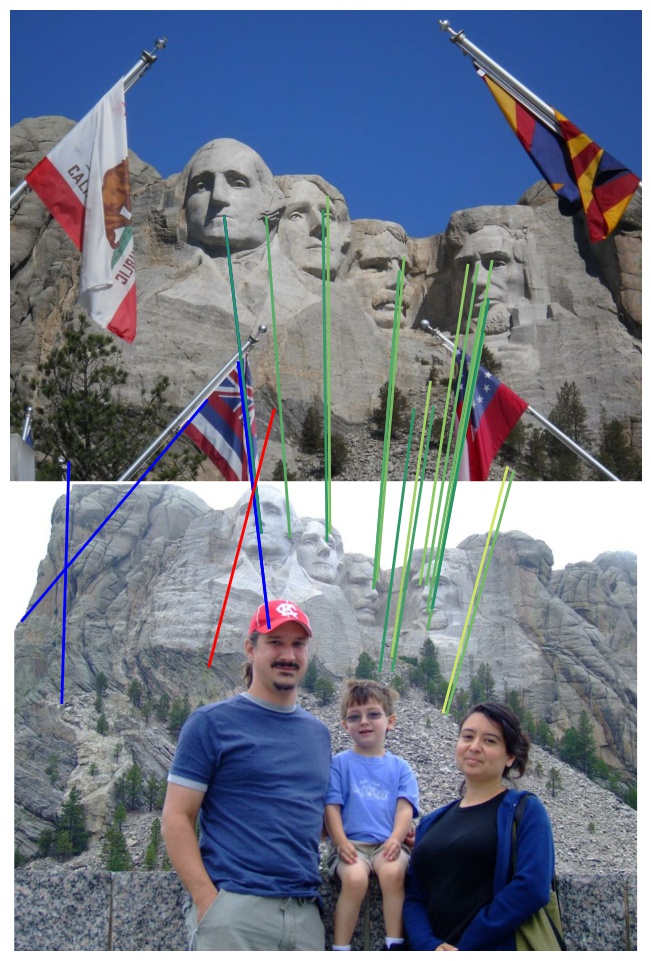

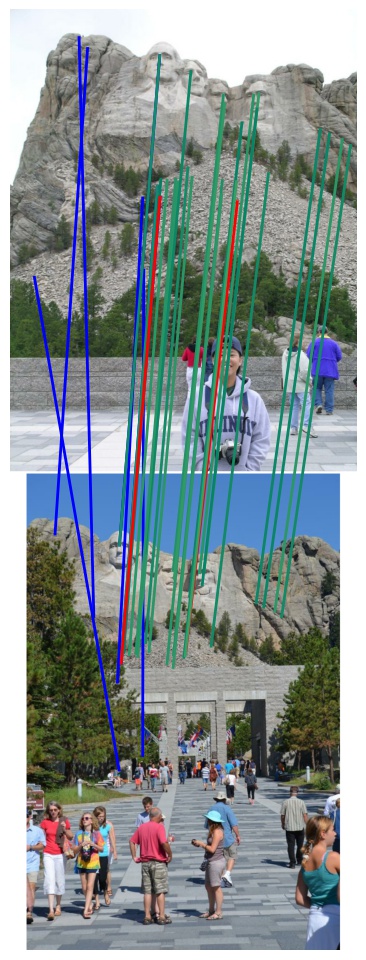

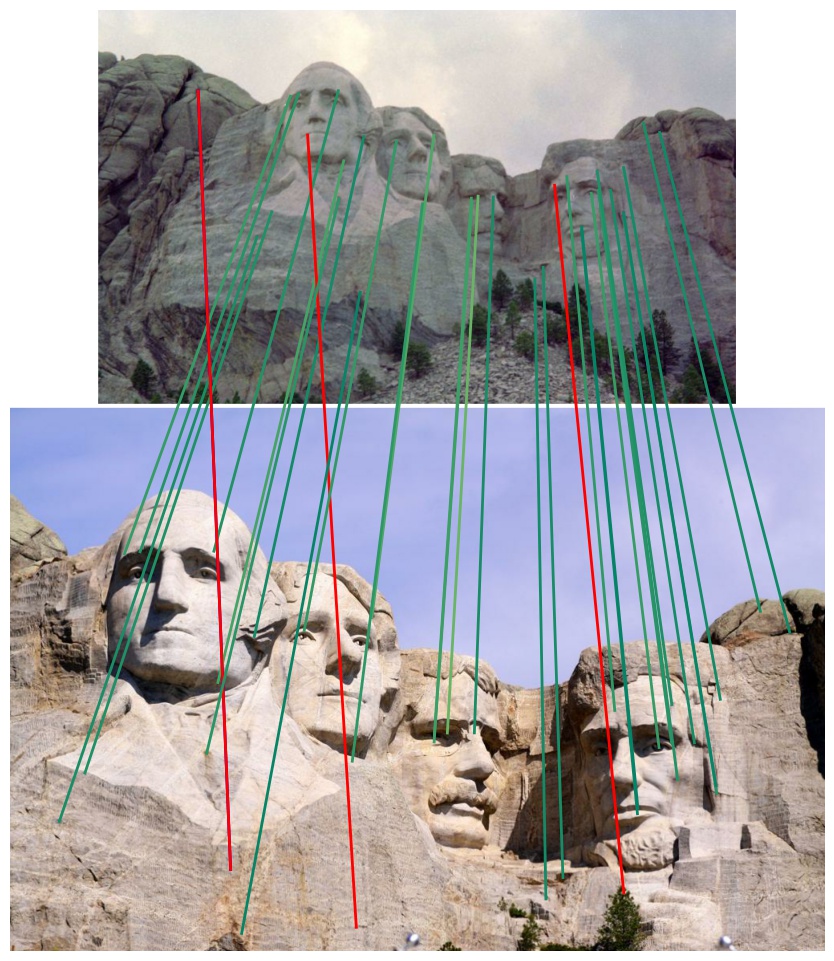

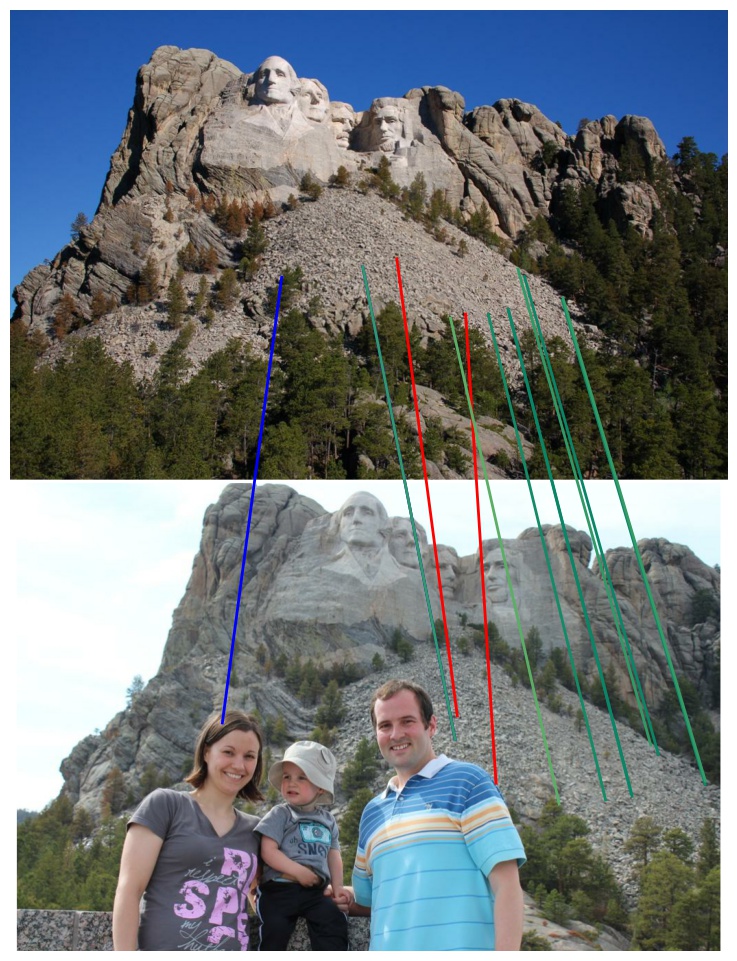

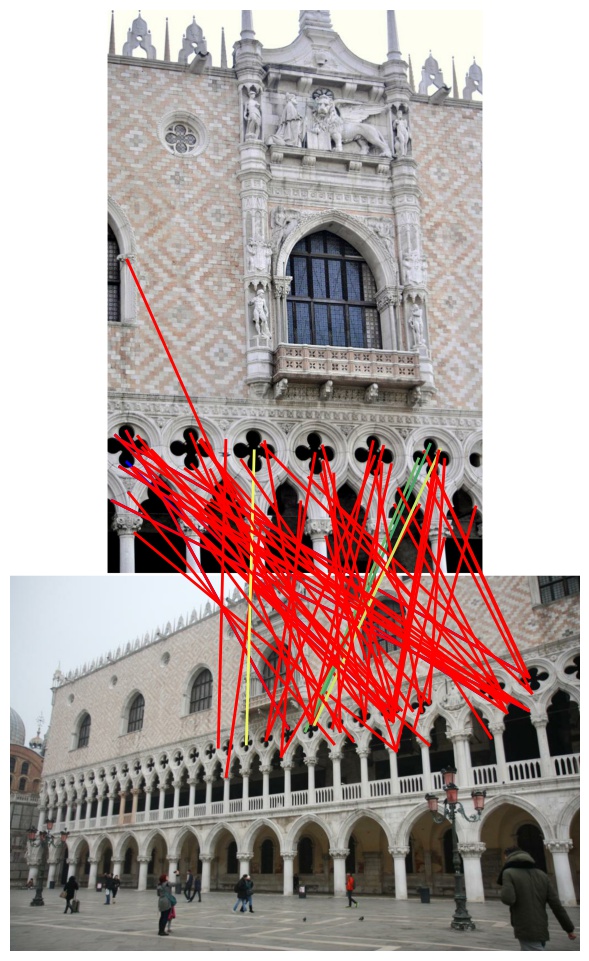

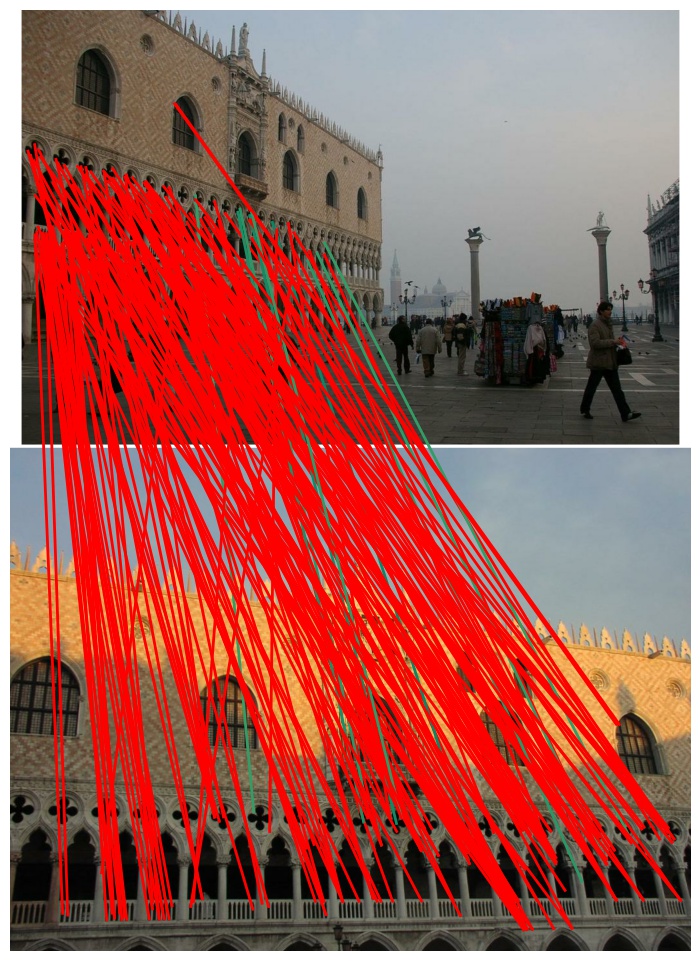

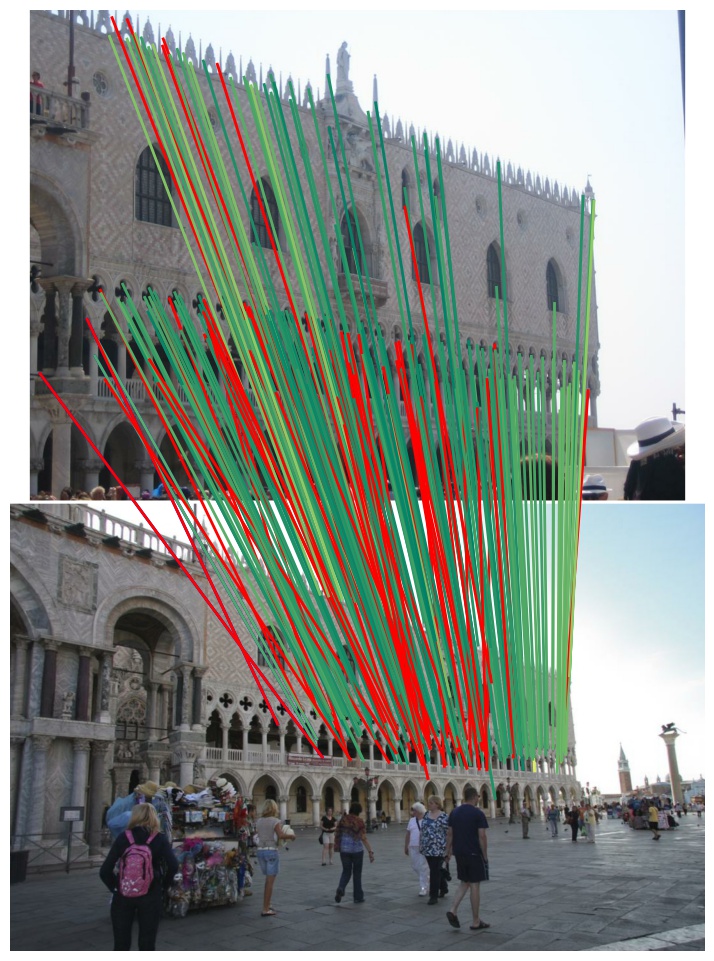

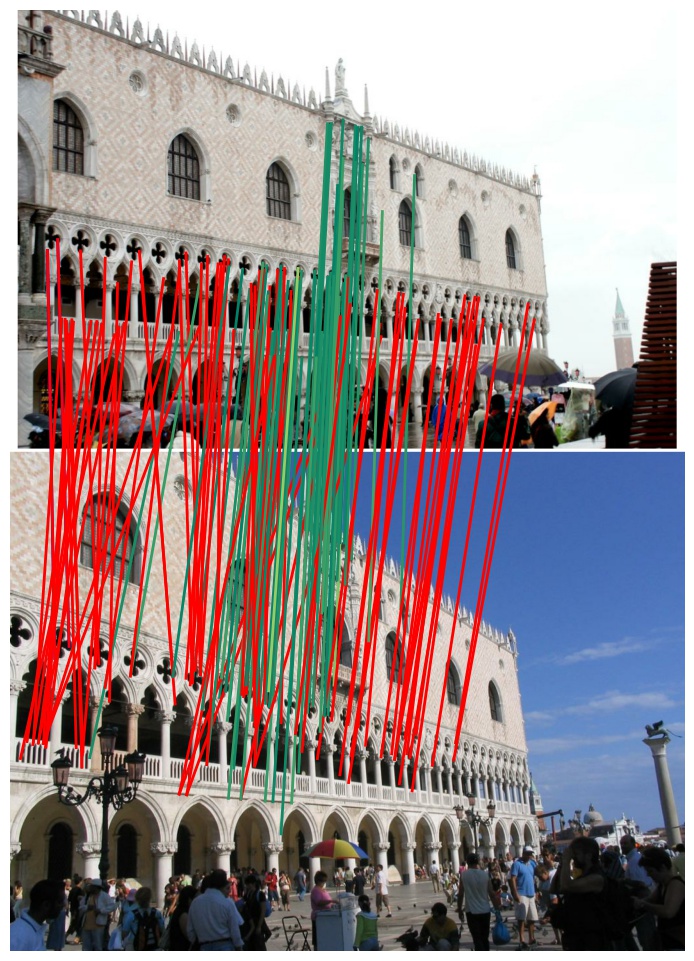

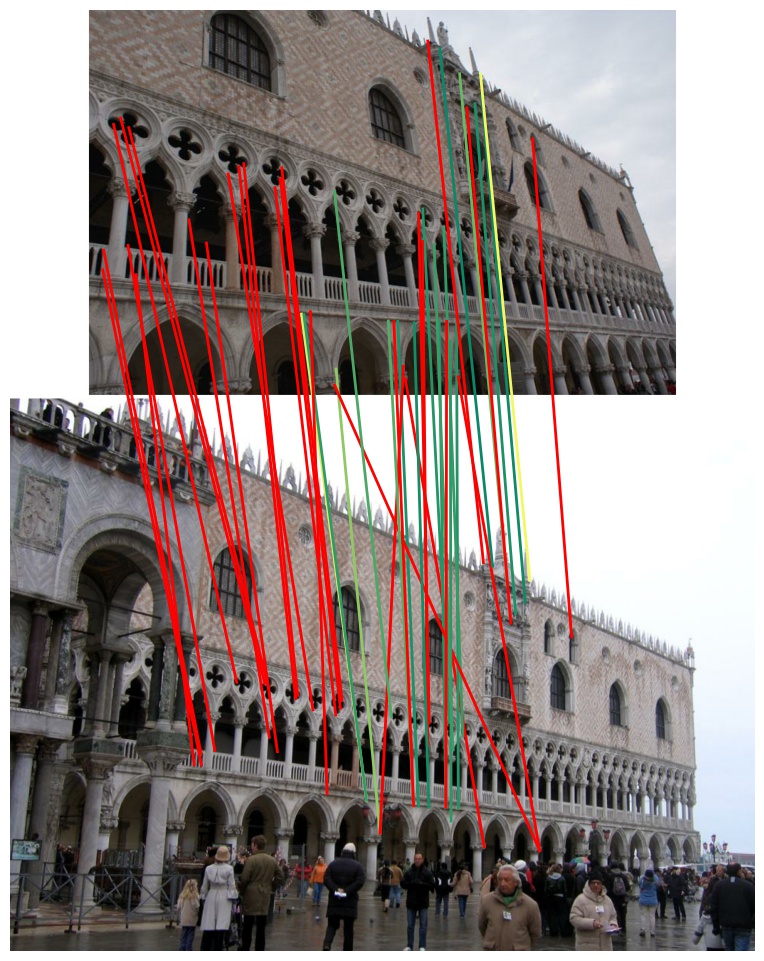

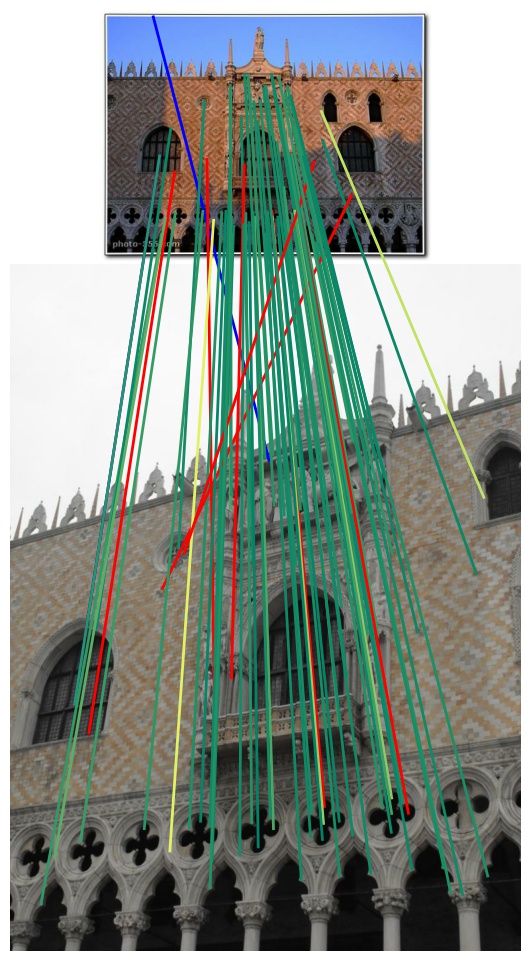

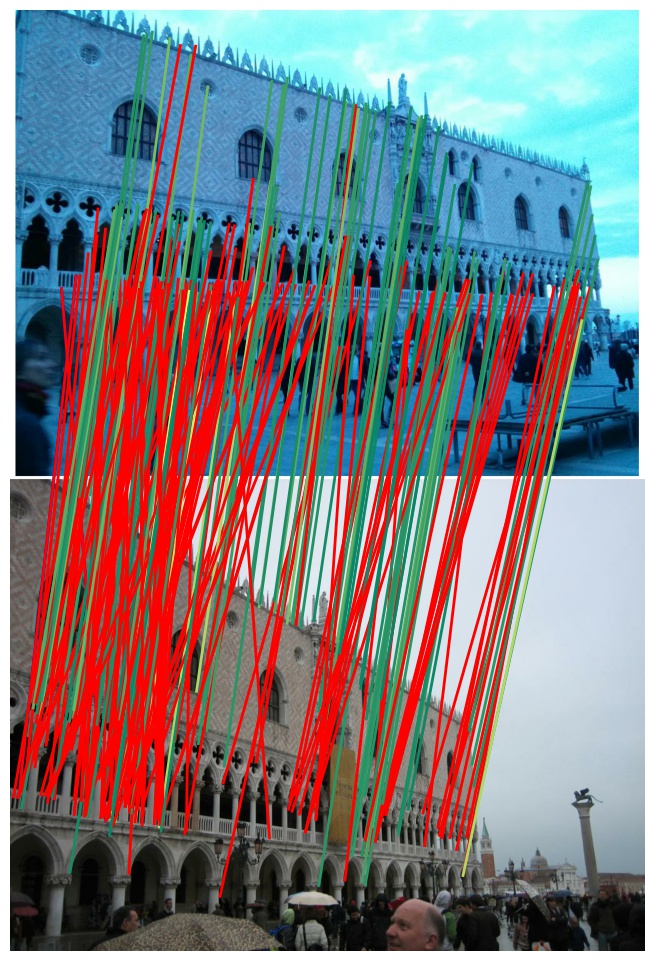

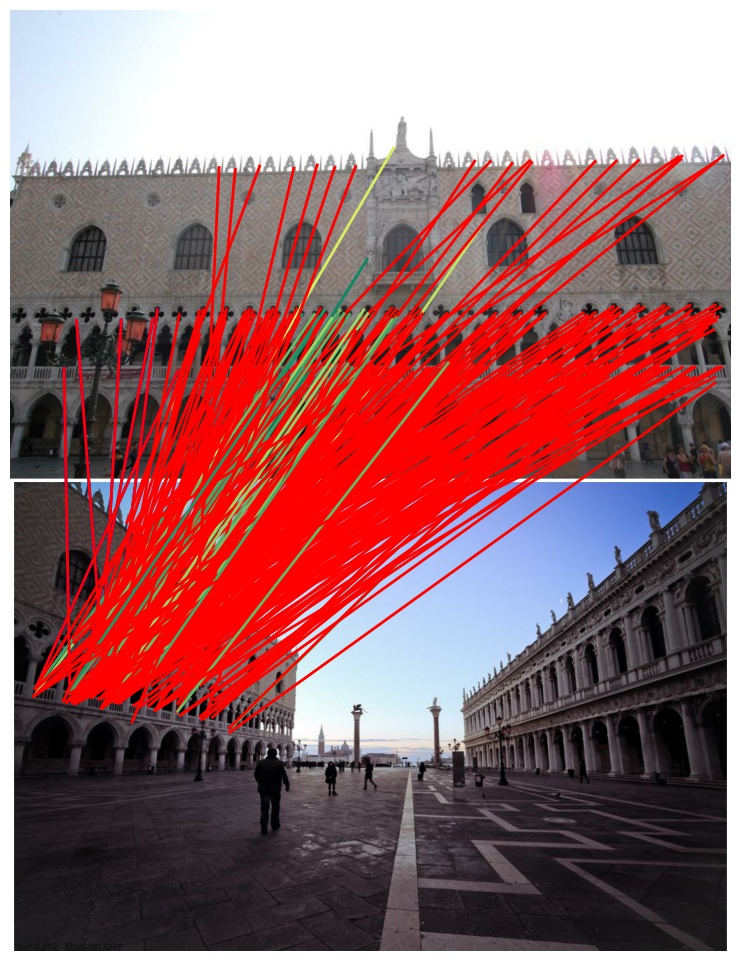

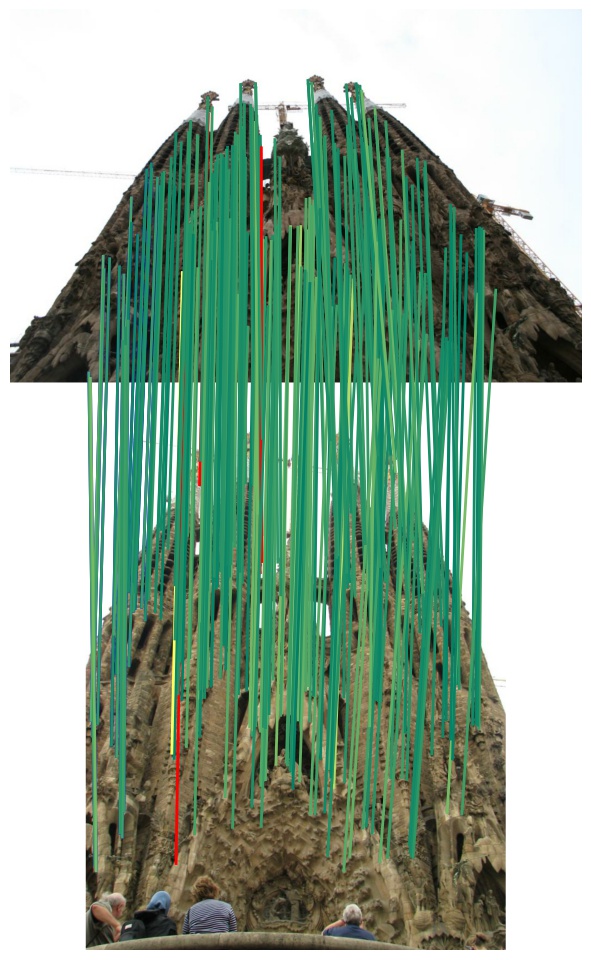

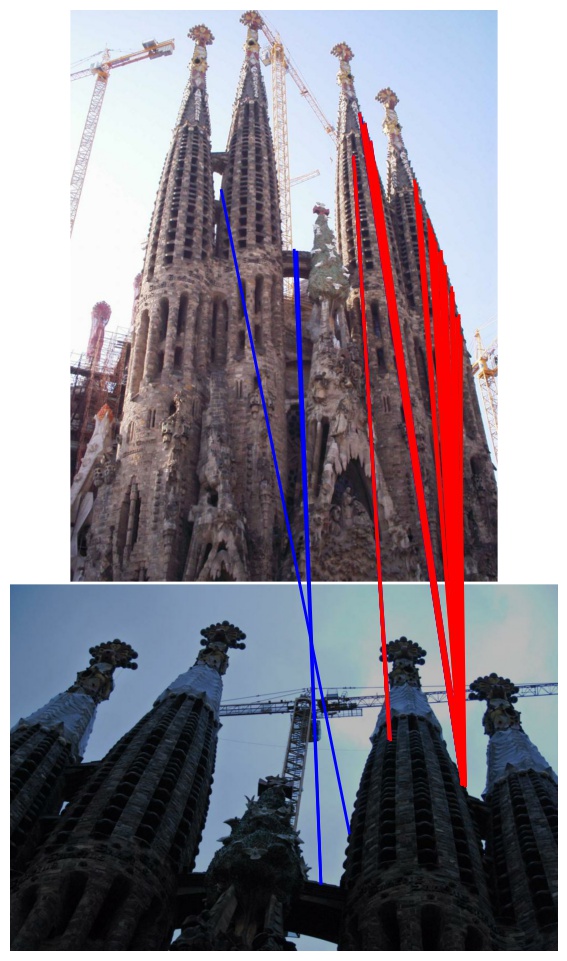

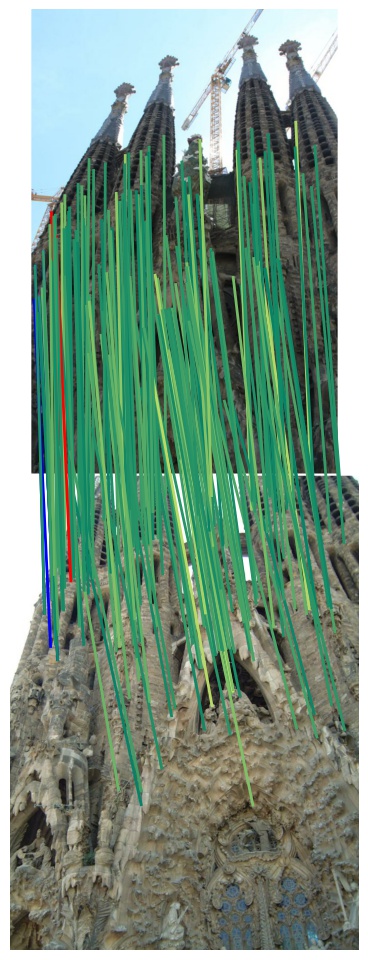

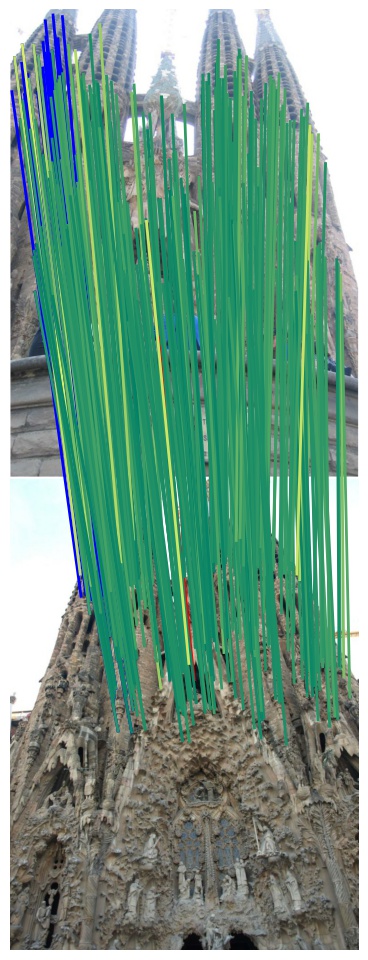

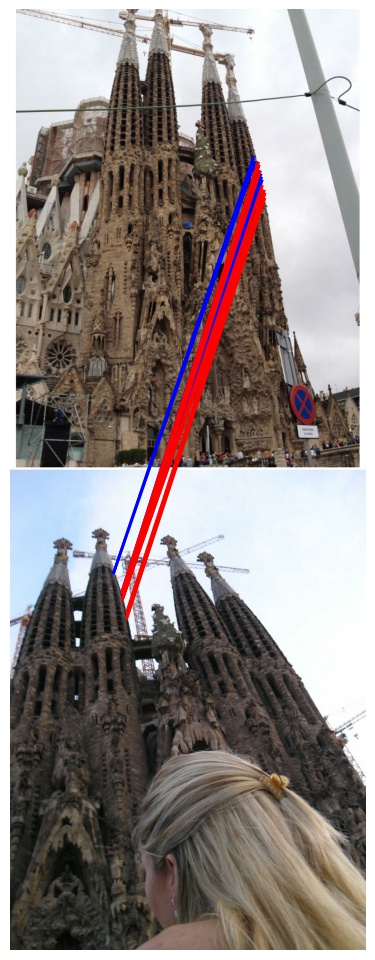

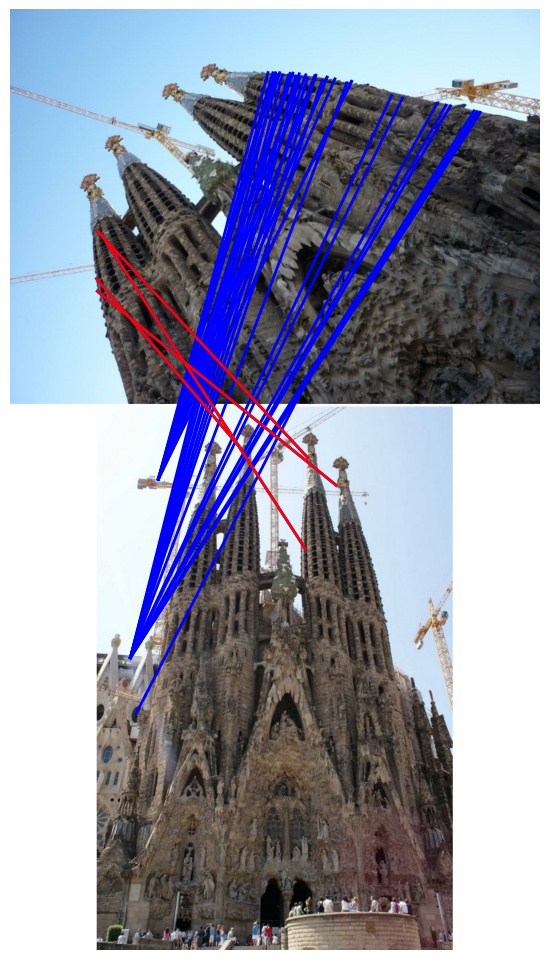

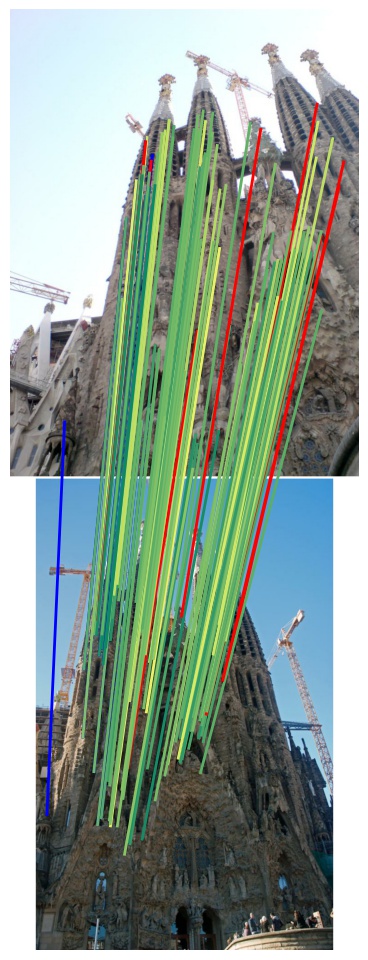

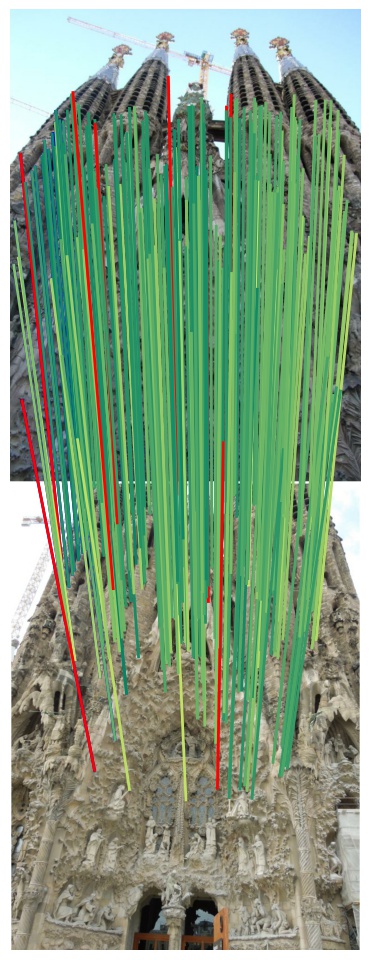

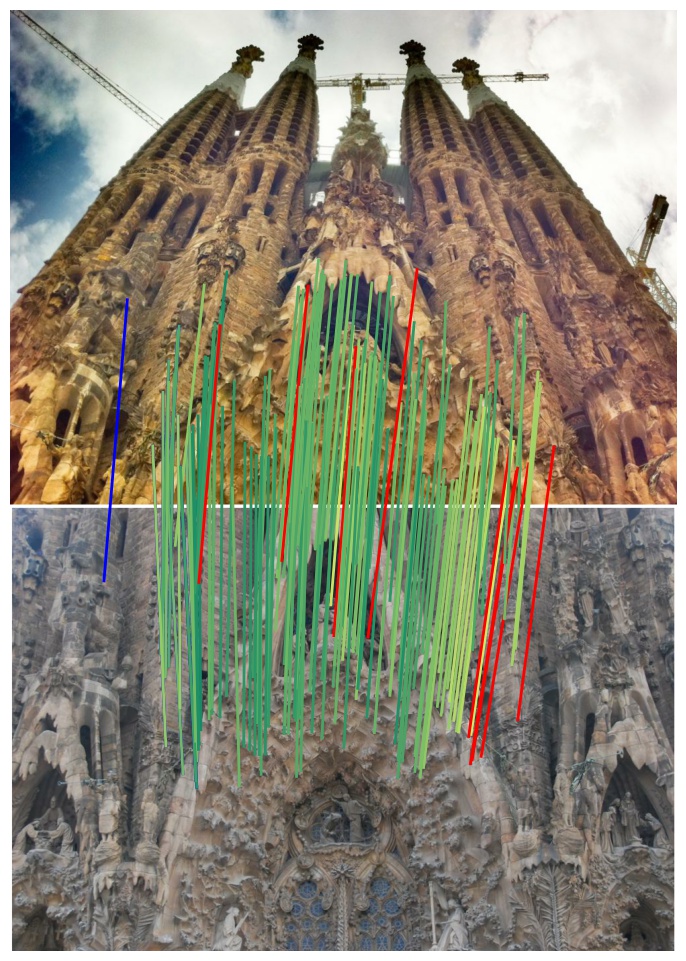

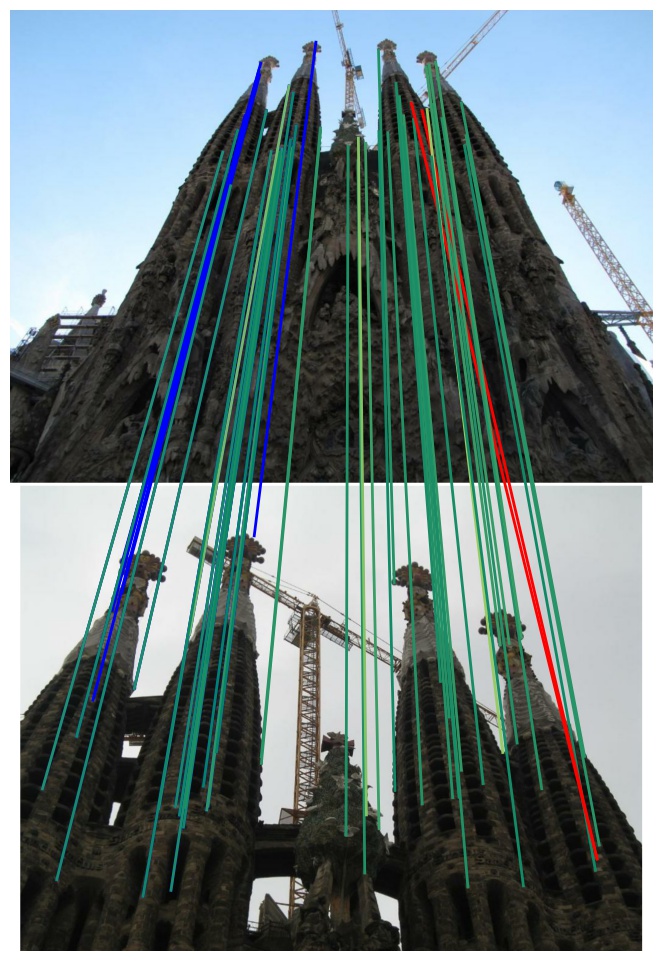

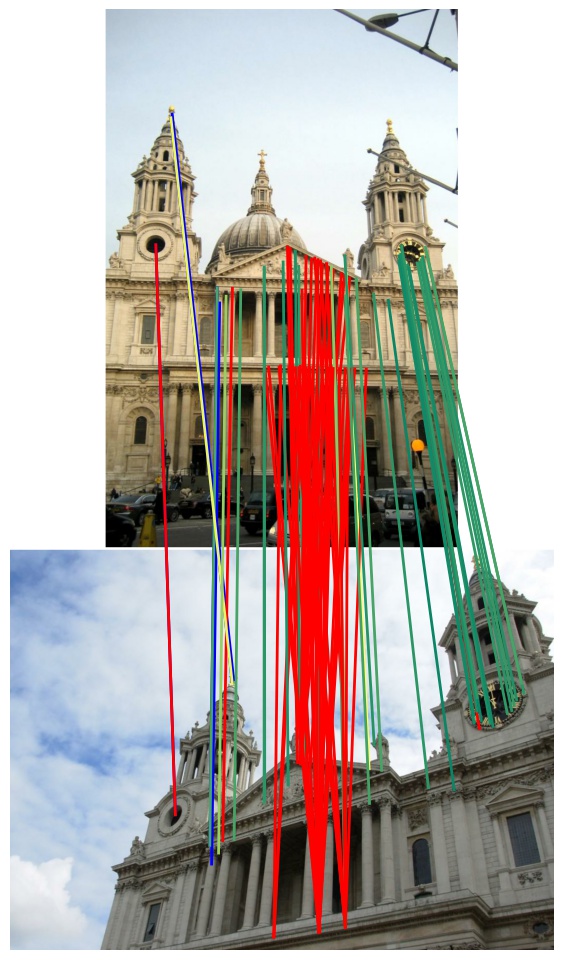

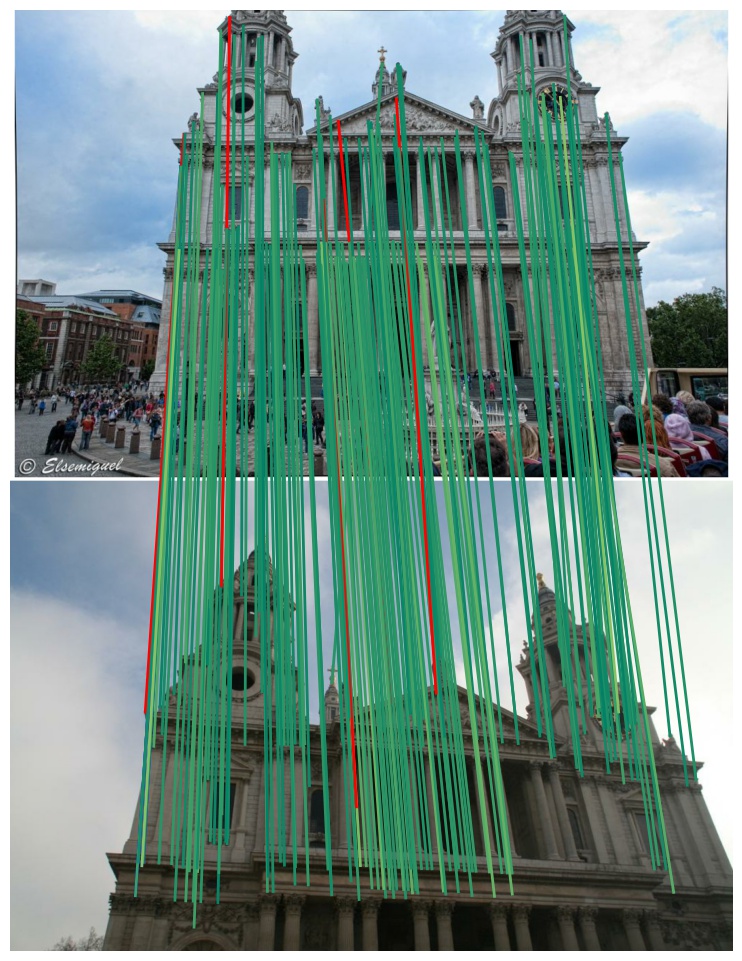

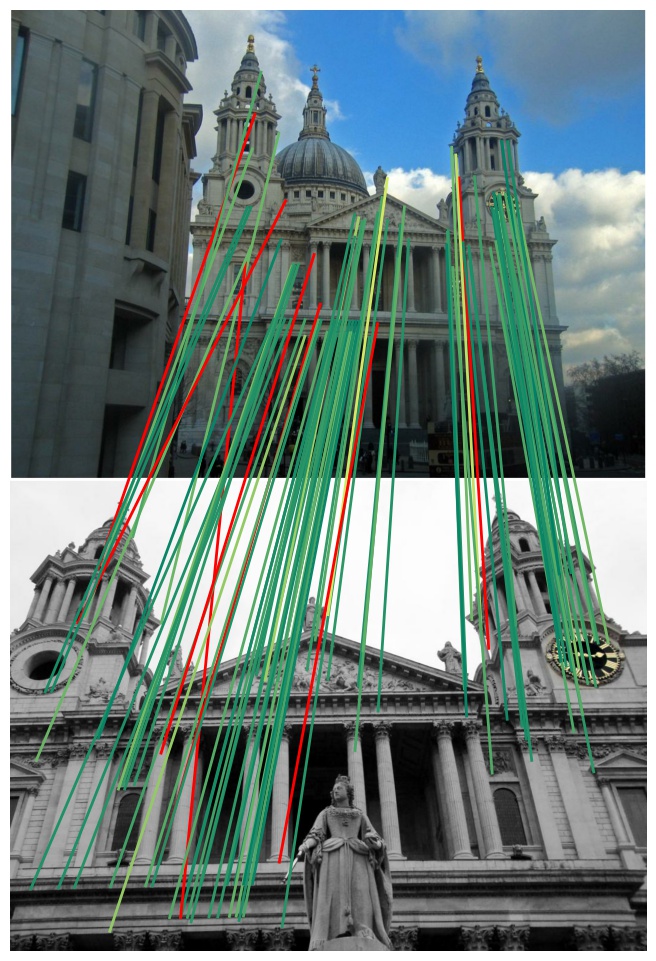

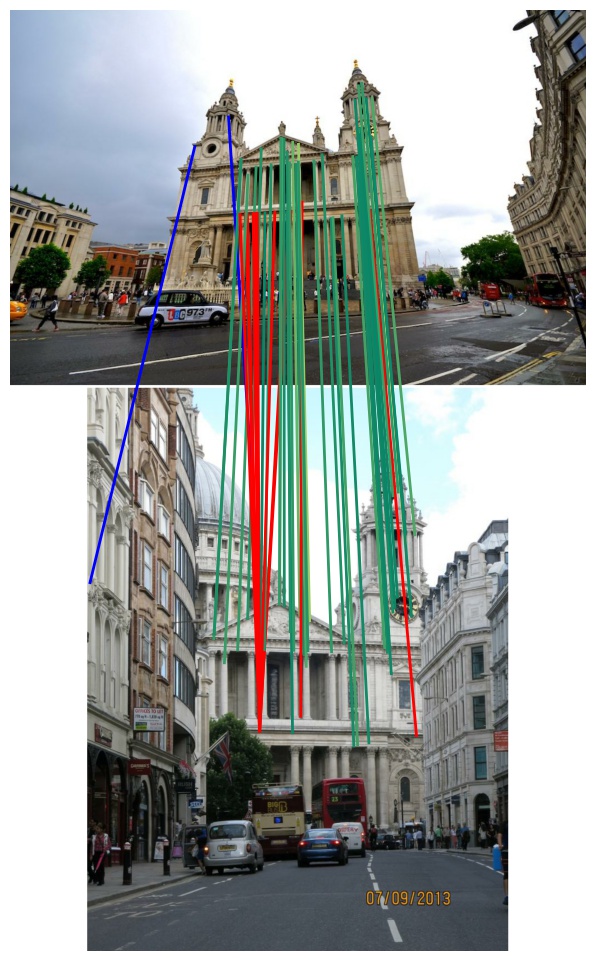

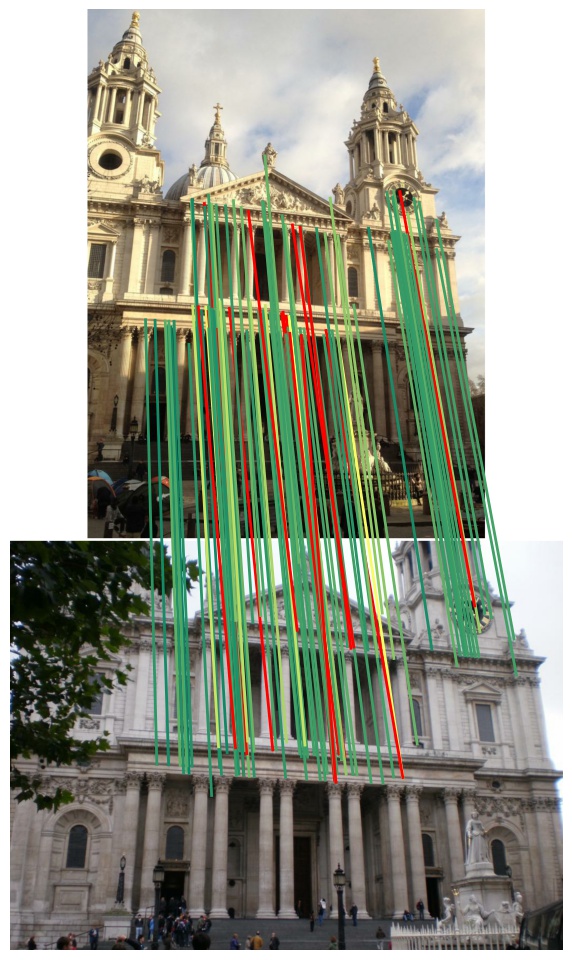

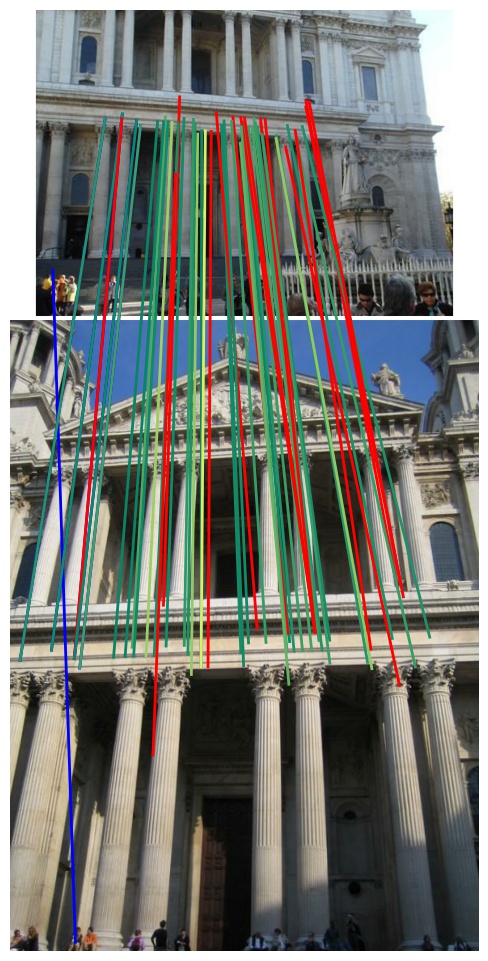

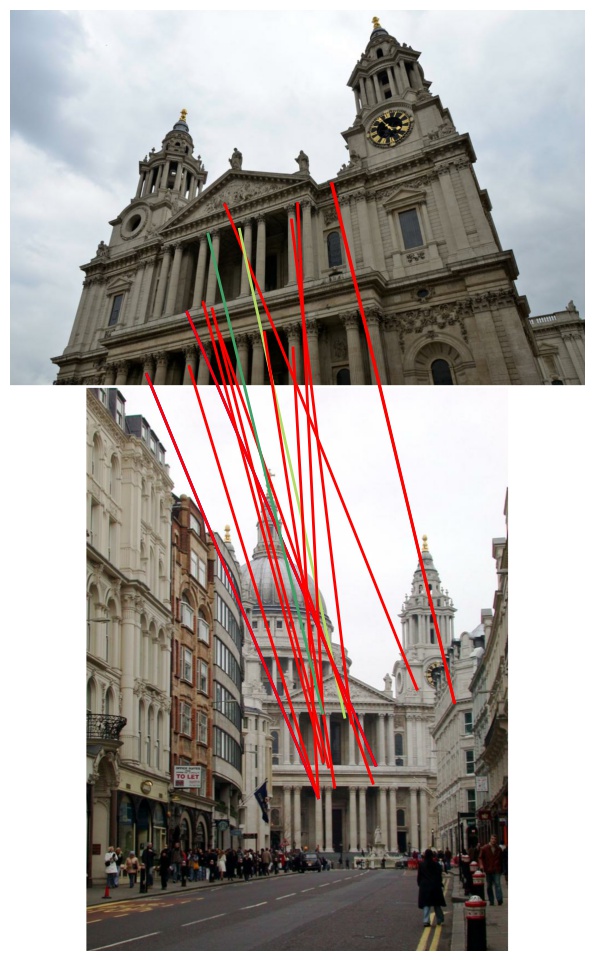

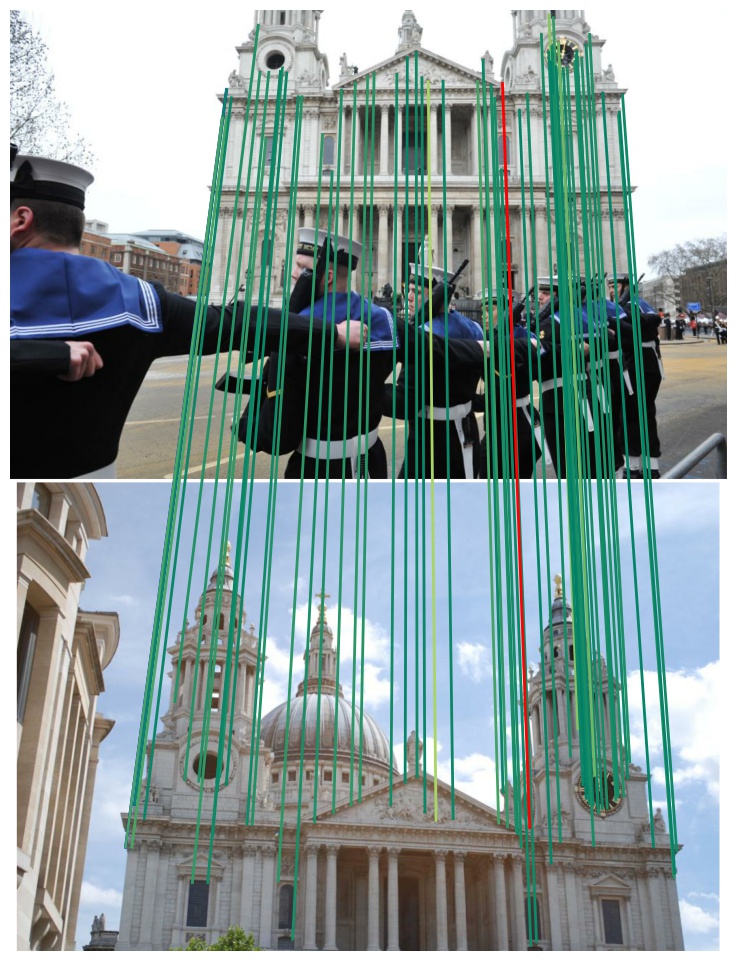

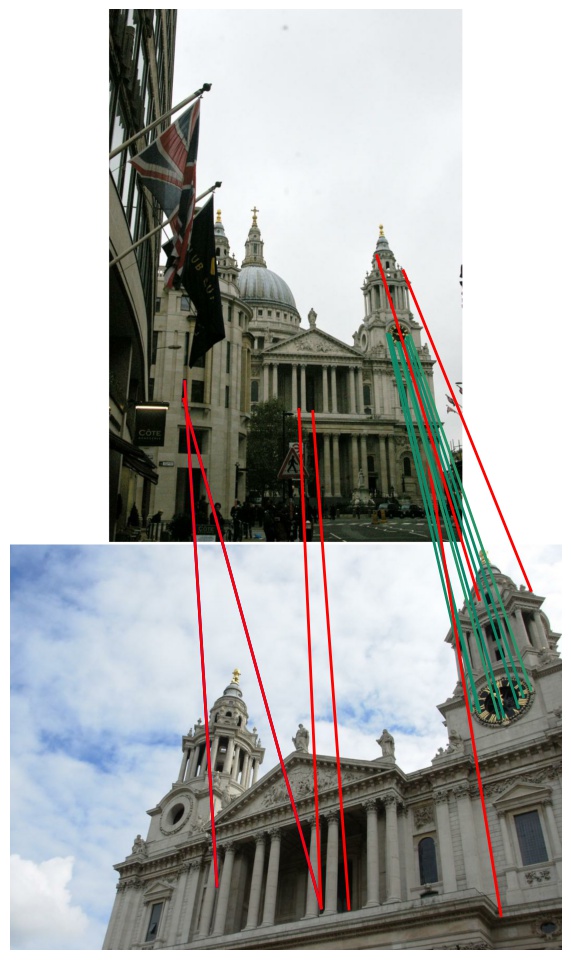

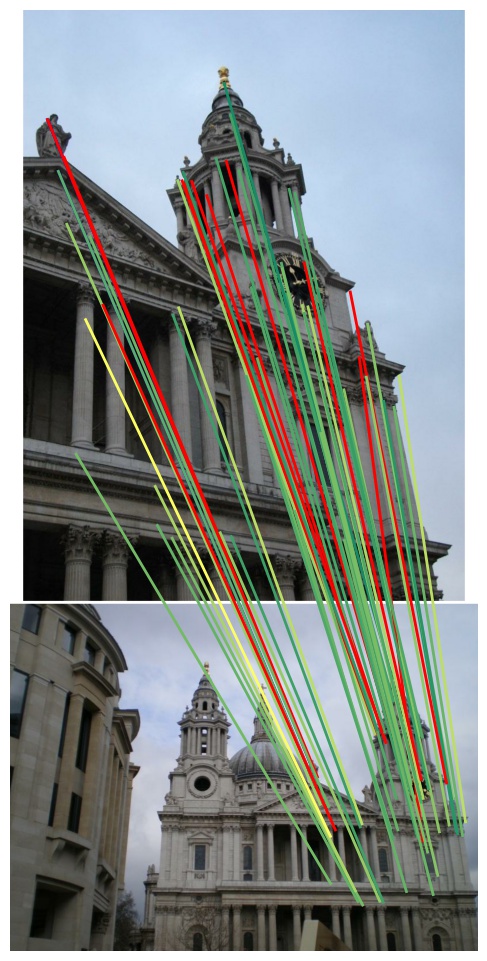

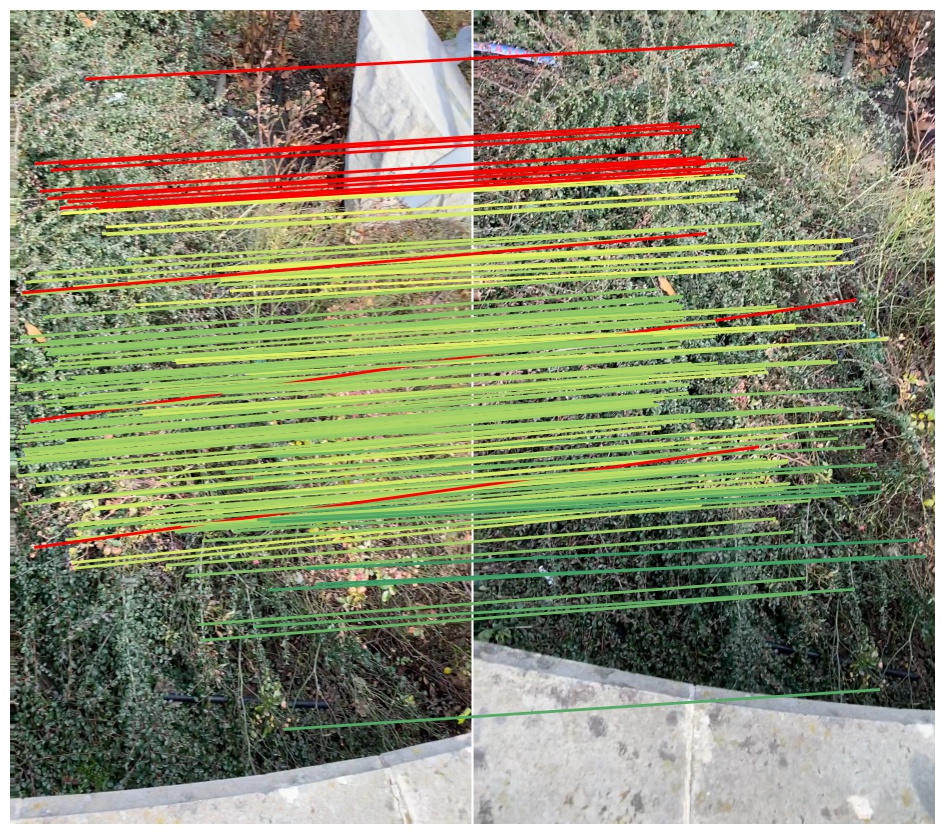

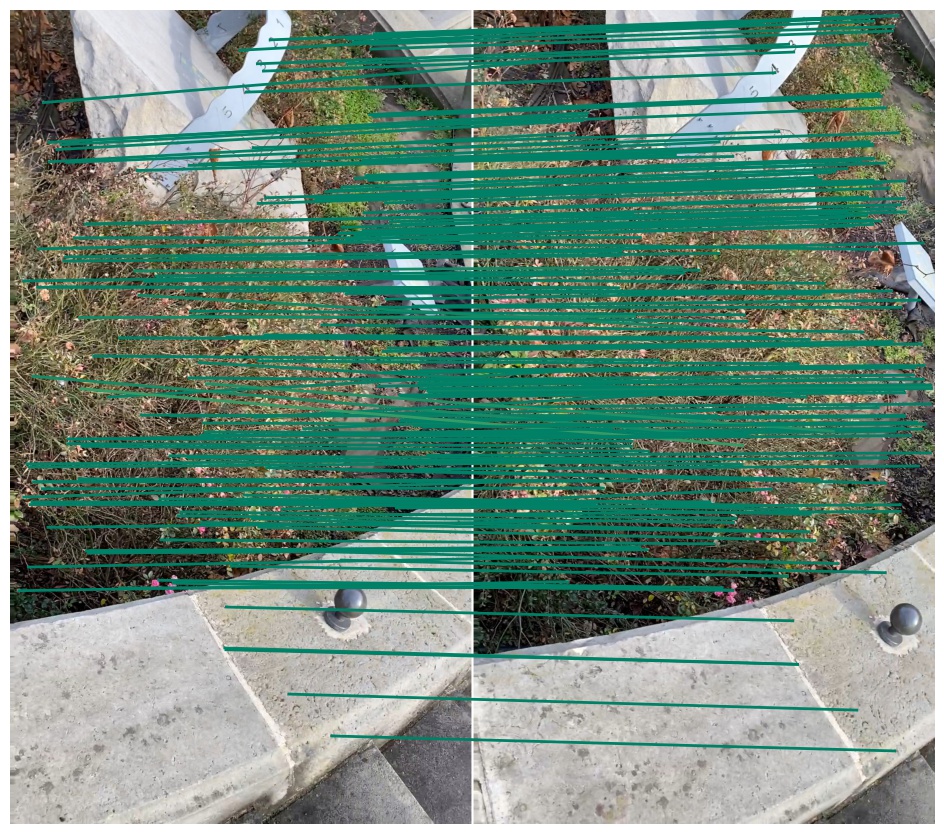

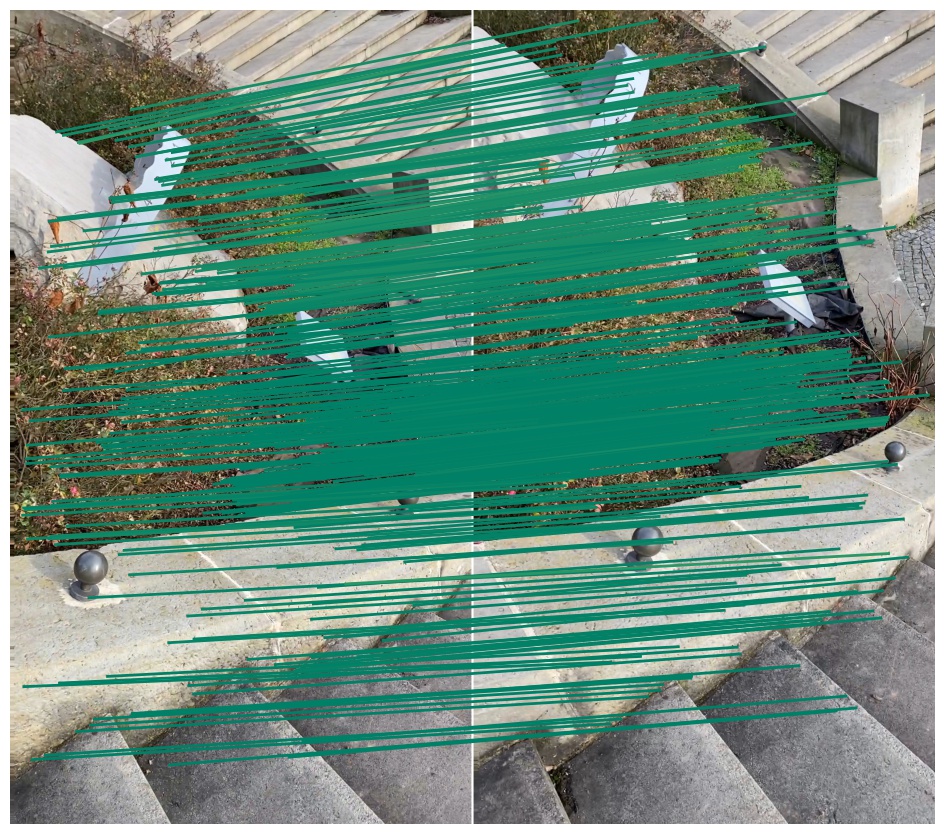

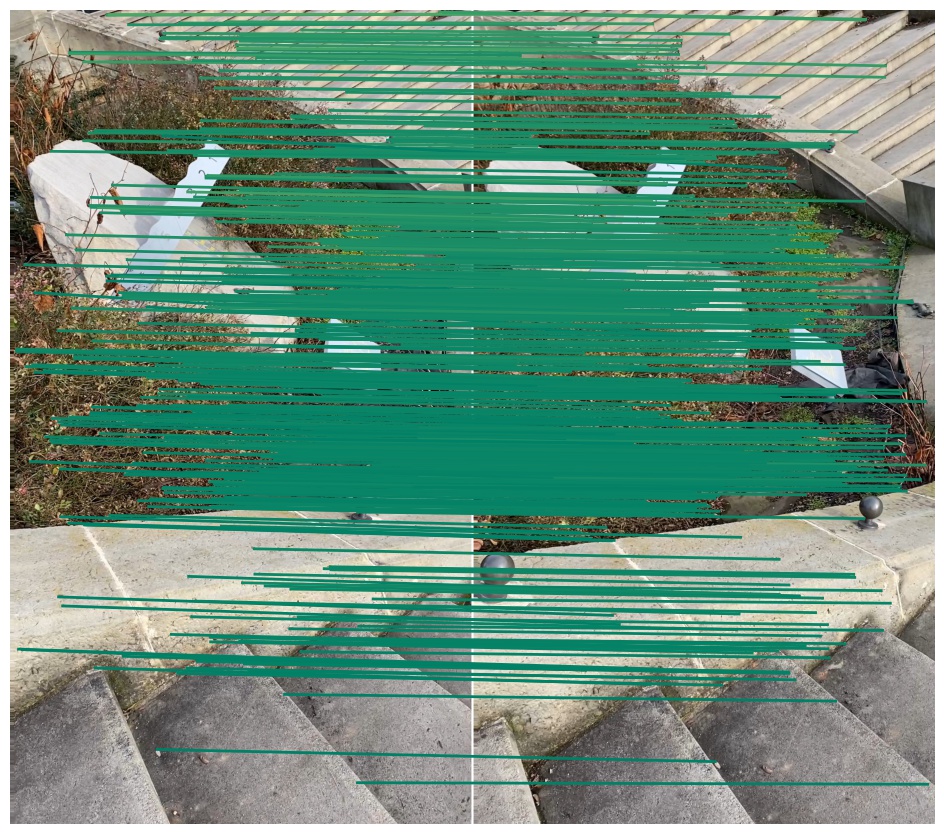

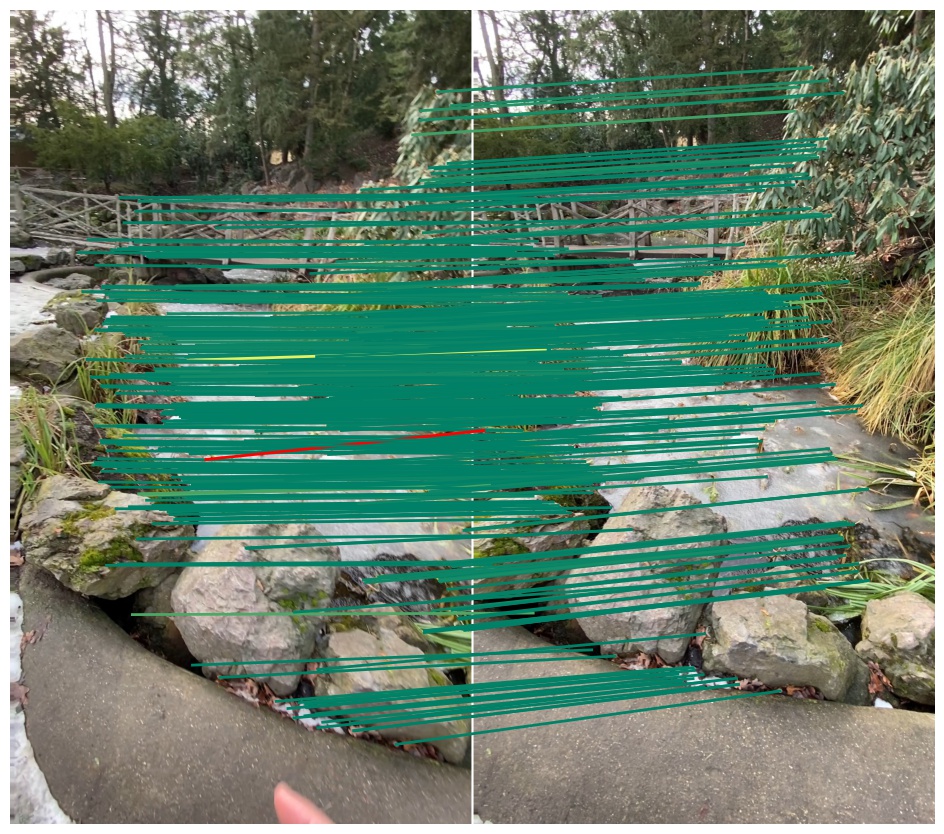

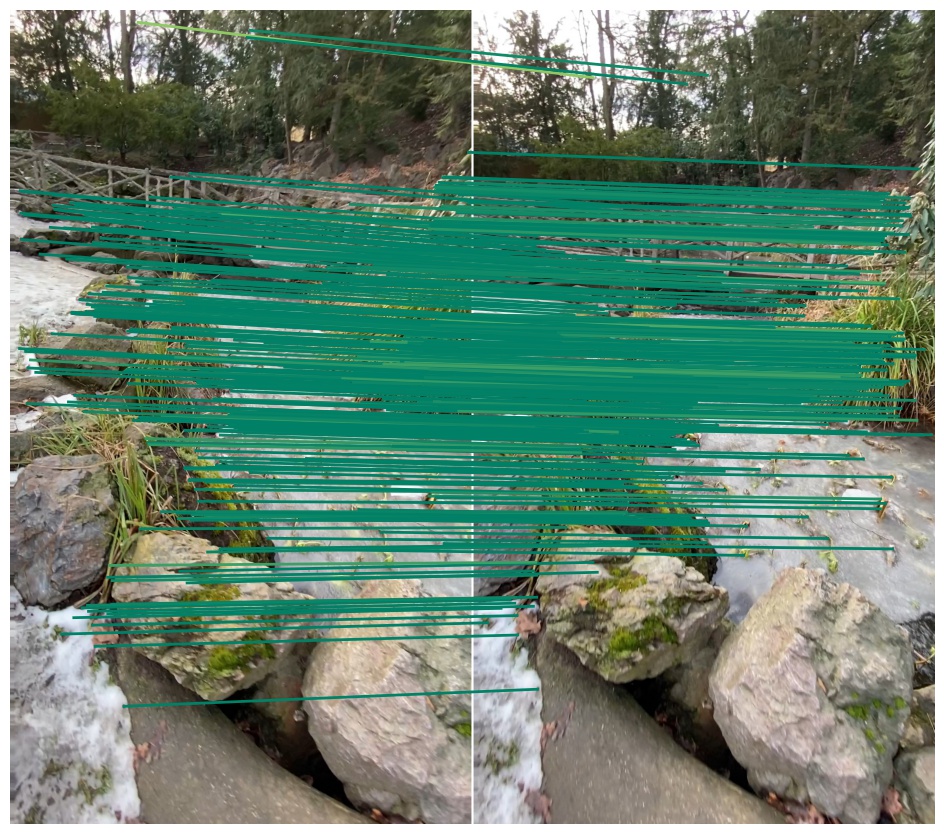

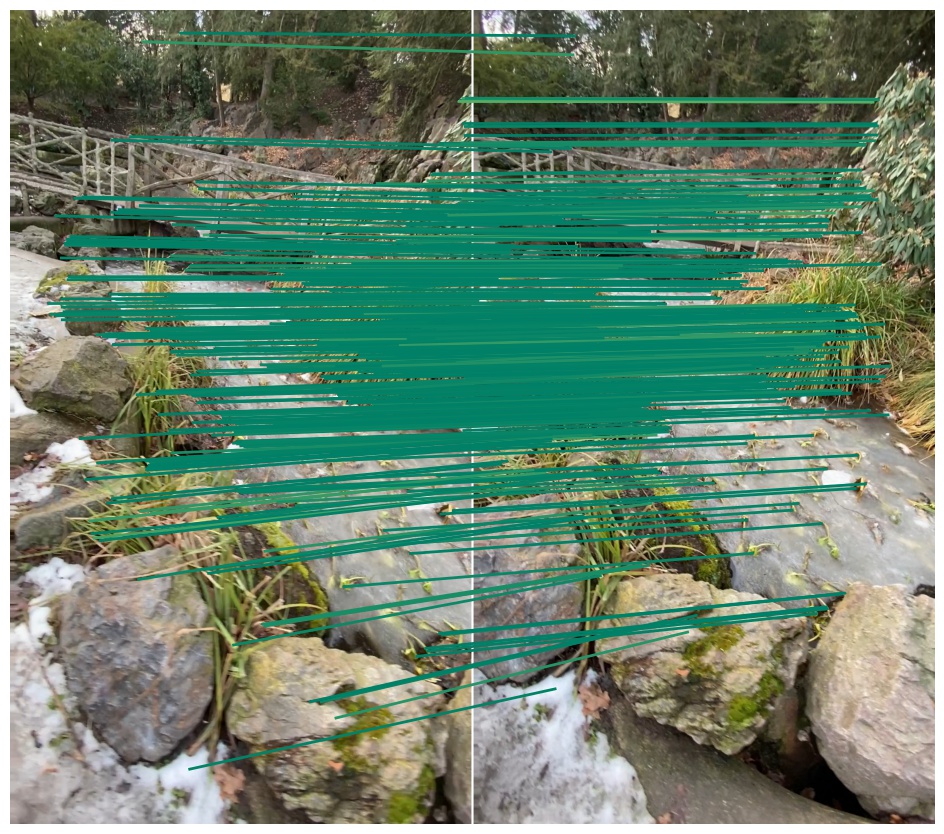

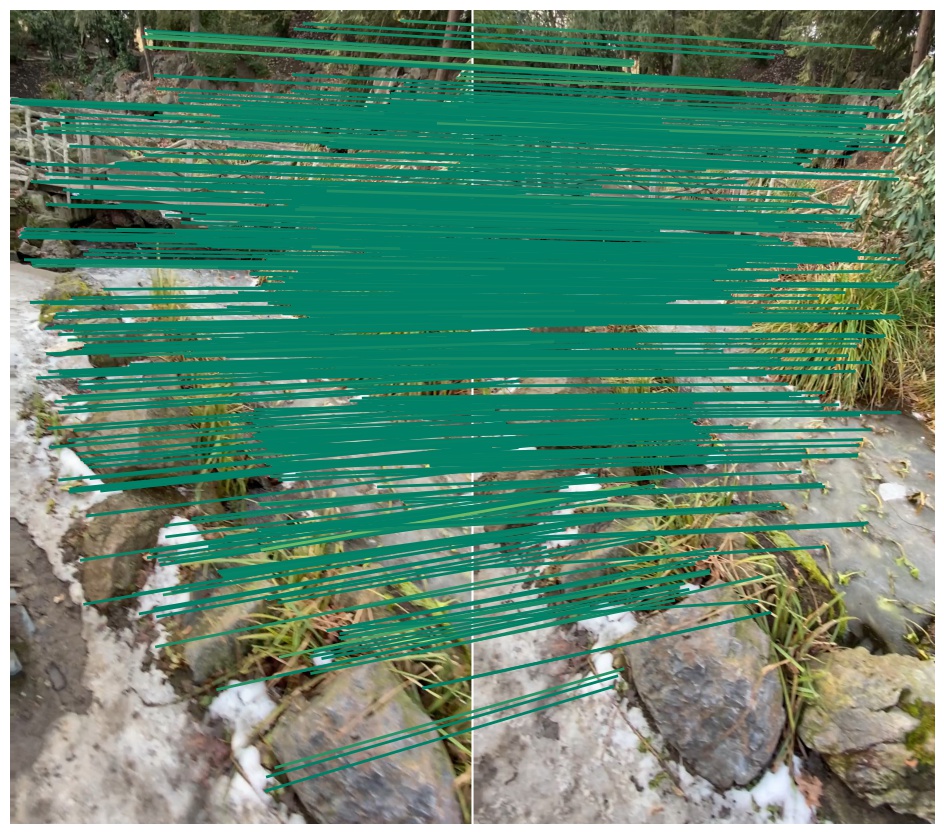

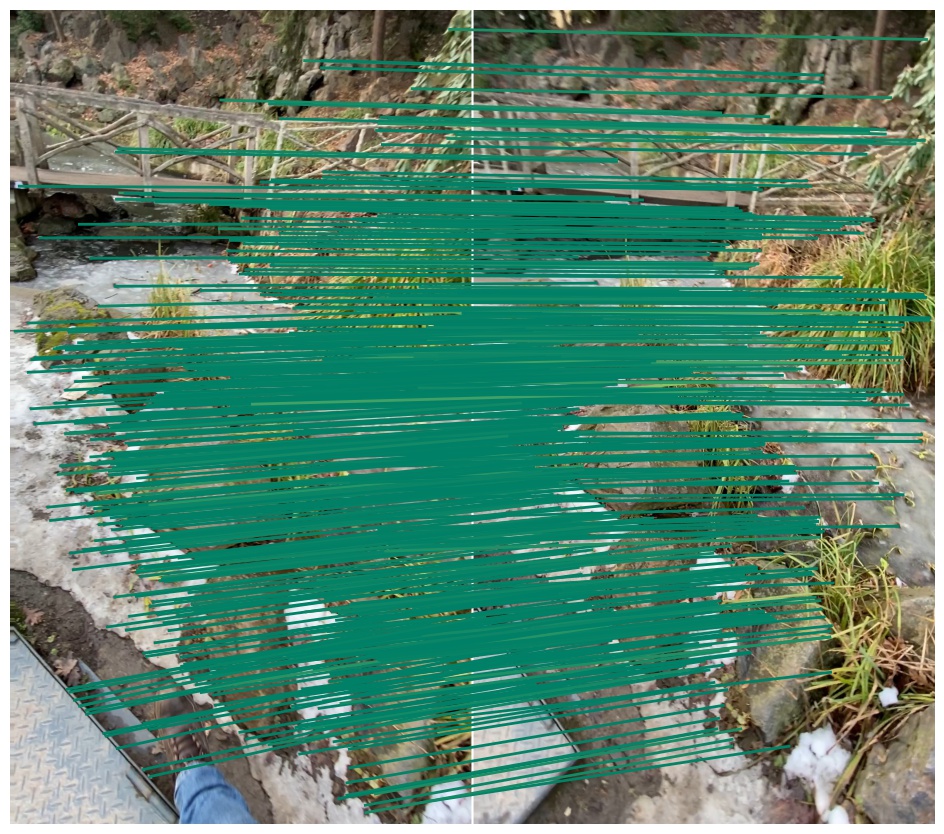

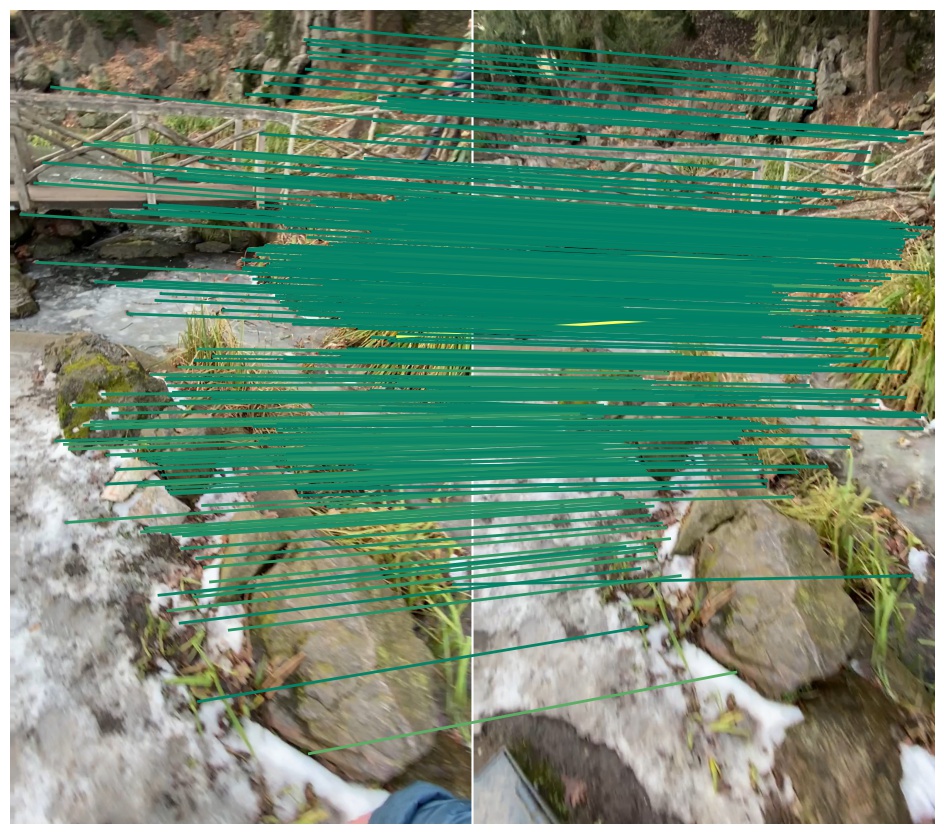

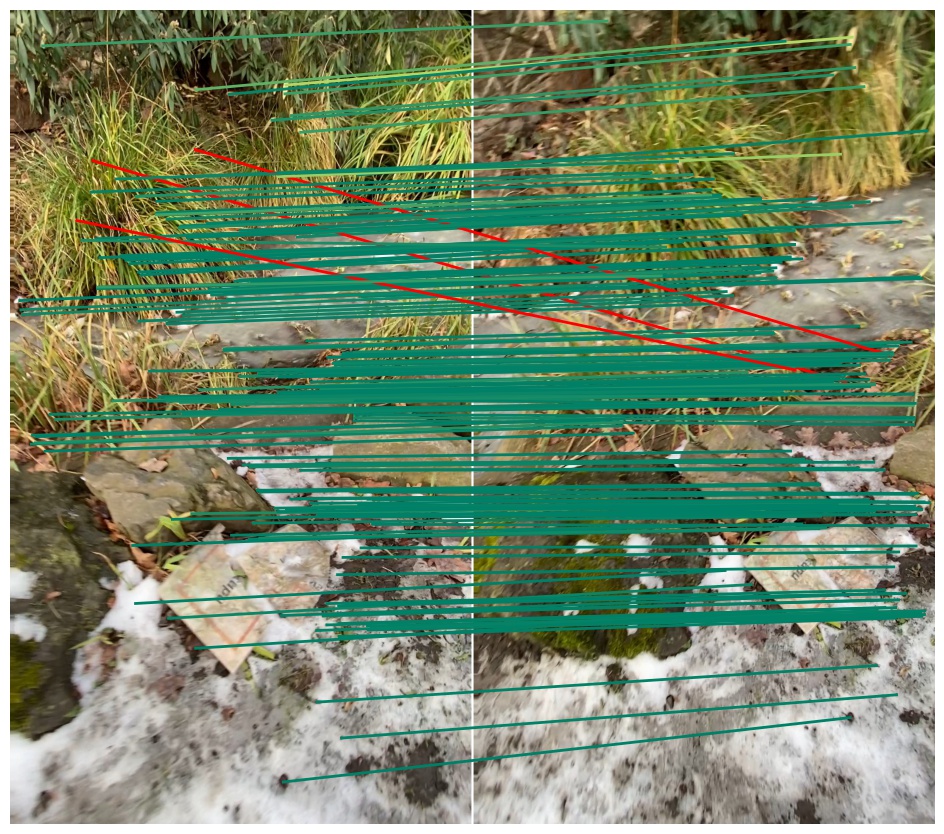

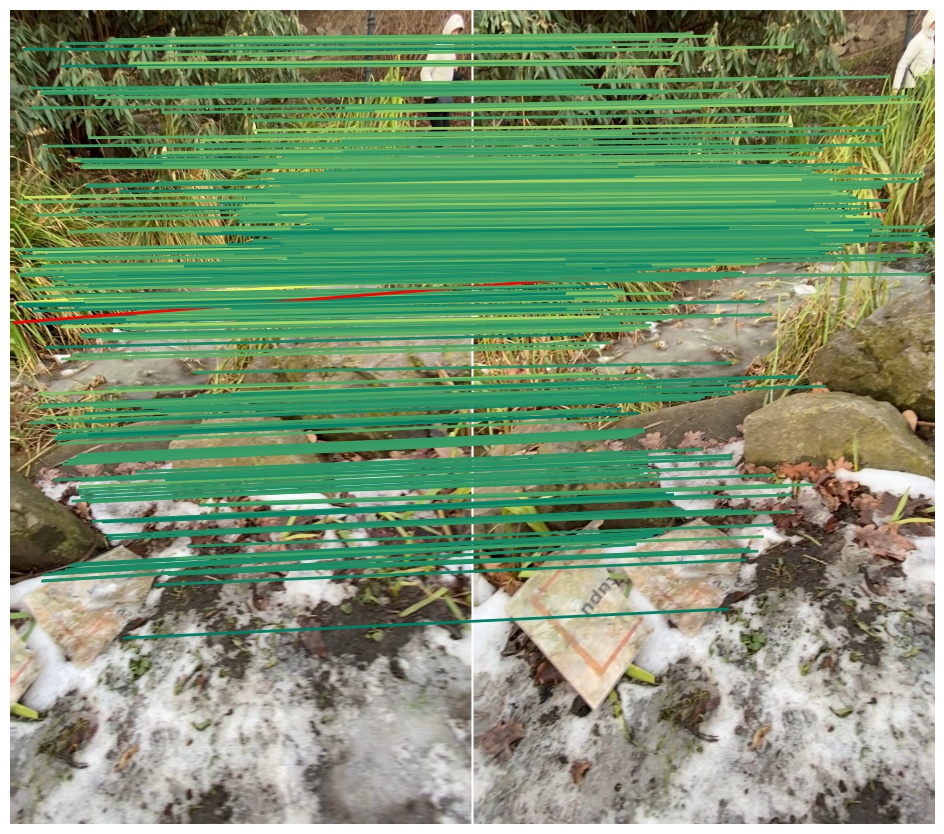

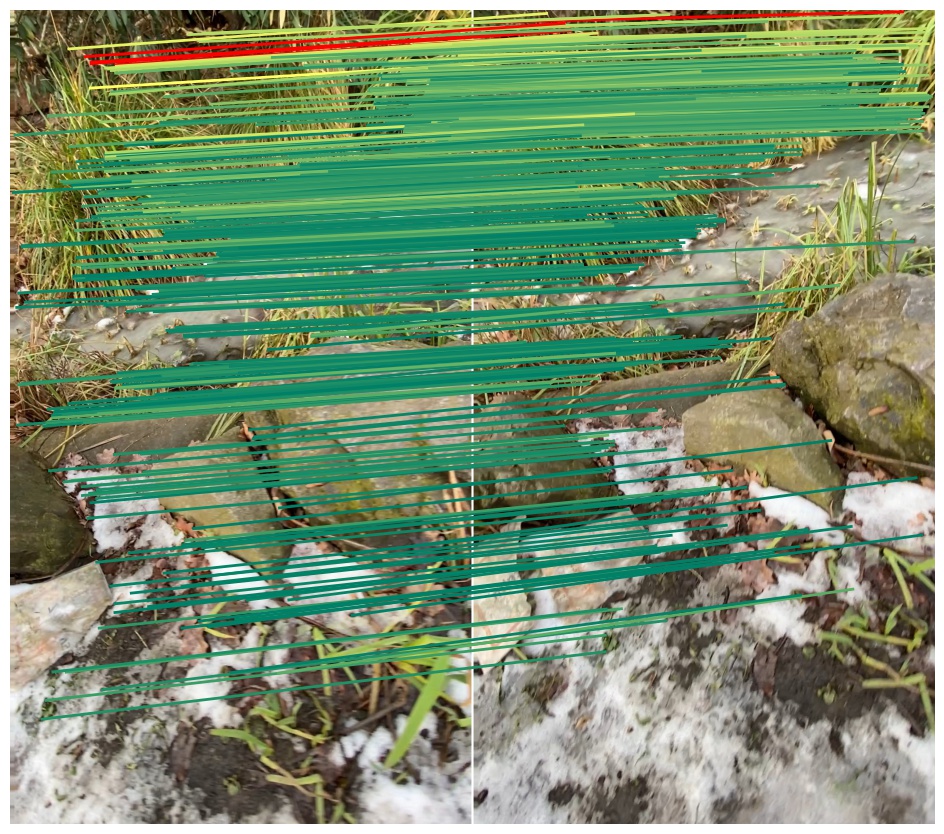

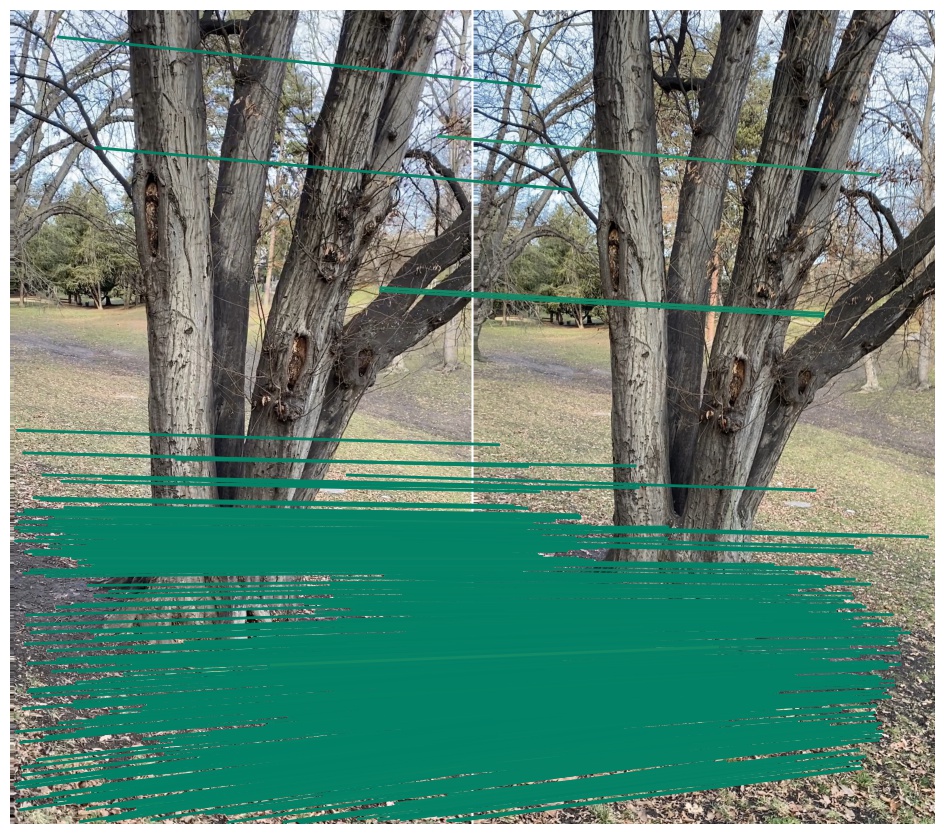

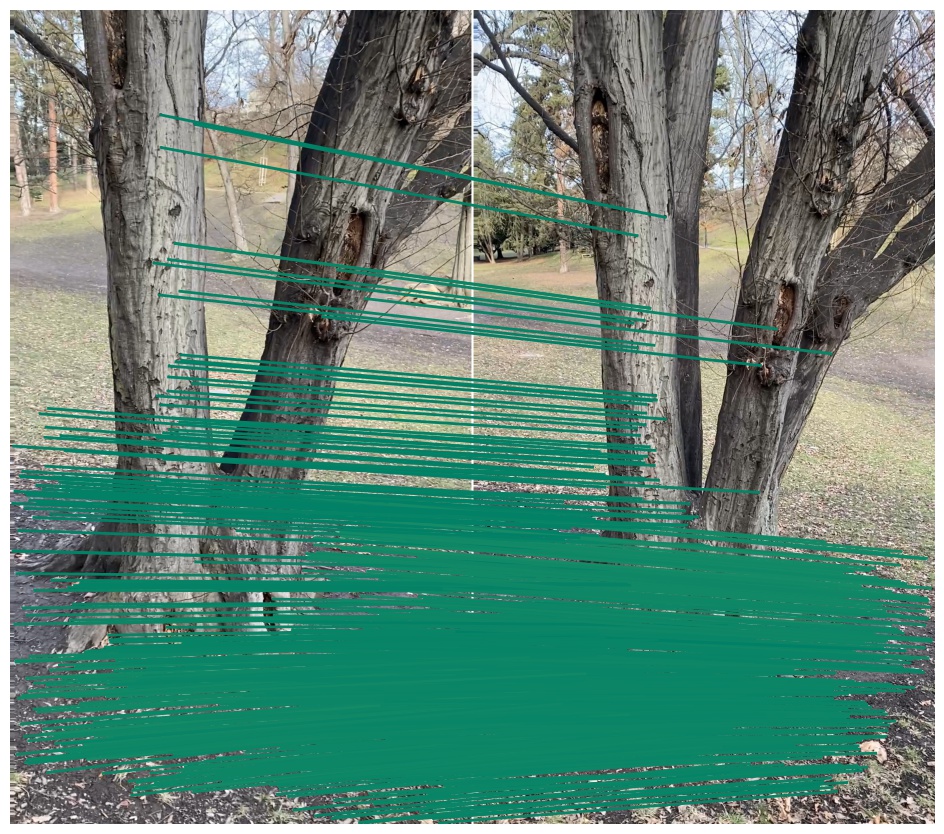

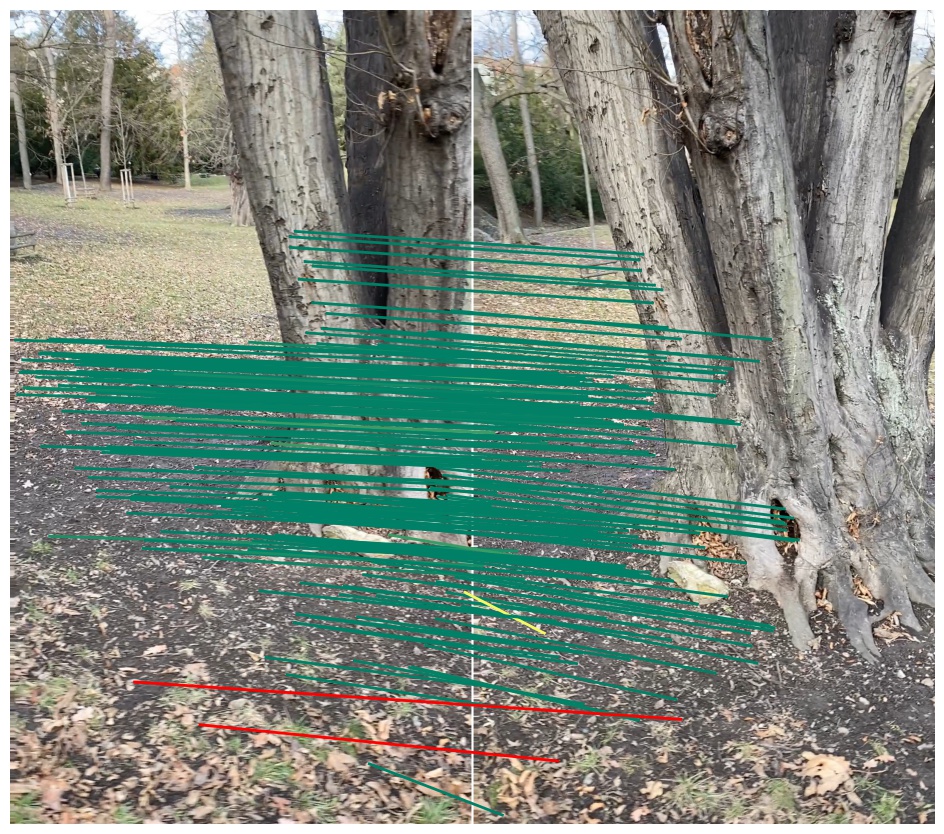

We show the inliers that survive the robust estimation loop (e.g., RANSAC), or those supplied with the submission if using custom matches, and use the depth estimates to determine whether they are correct. We draw matches above a 5-pixel error threshold in red, and color-code those below it by their error, from 0 (green) to 5 pixels (yellow). Matches for which we do not have depth estimates are drawn in blue. Please note that the depth maps are estimates and may contain errors.
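The colour scheme described above can be expressed as a small mapping. This is a minimal sketch. The exact RGB values and the linear green-to-yellow blend are assumptions, since the page only names the colours and the 5-pixel threshold.

```python
from typing import Optional, Tuple

RGB = Tuple[int, int, int]

GREEN: RGB = (0, 255, 0)
YELLOW: RGB = (255, 255, 0)
RED: RGB = (255, 0, 0)
BLUE: RGB = (0, 0, 255)


def match_color(error_px: Optional[float], max_px: float = 5.0) -> RGB:
    """Colour for one match given its reprojection error in pixels (None means no depth)."""
    if error_px is None:
        return BLUE  # no depth estimate for this match
    if error_px > max_px:
        return RED  # above the error threshold
    t = max(error_px, 0.0) / max_px  # 0 at zero error, 1 at the threshold
    # Linear blend from green (0, 255, 0) to yellow (255, 255, 0).
    return (round(255 * t), 255, 0)


print(match_color(1.0))  # (51, 255, 0)
```

Green and yellow differ only in the red channel, so the blend needs to scale just that one component with the error.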

— British Museum —

— Florence Cathedral Side —

— Lincoln Memorial Statue —

— London Bridge —

— Milan Cathedral —

— Mount Rushmore —

— Piazza San Marco —

— Sagrada Familia —

— Saint Paul's Cathedral —

Phototourism dataset / Multiview track

mAA at 10 degrees: N/A (±N/A over N/A run(s) / ±N/A over 9 scenes)

Rank (per category): N/A (of 86)

No results for this dataset.

Prague Parks dataset / Stereo track

mAA at 10 degrees: 0.70301 (±0.00000 over 1 run(s) / ±0.09524 over 3 scenes)
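The headline figures appear to be the mean of the per-scene mAA values. The second ± appears to be the population standard deviation across scenes. The first ± is the spread across runs, which is zero here because only one run was made. This reading is an inference from the tables, not something the page documents. It does, however, reproduce both headline numbers from the per-scene mAA(10°) values, as the sketch checks.

```python
from __future__ import annotations

from statistics import mean, pstdev


def summarize(per_scene: list[float]) -> tuple[float, float]:
    """Mean and population standard deviation (ddof=0) across scenes."""
    return mean(per_scene), pstdev(per_scene)


# Per-scene mAA(10°) values copied from the tables on this page.
prague_parks = [0.57622, 0.72703, 0.80577]  # Lizard, Pond, Tree
phototourism = [0.12138, 0.60421, 0.64913, 0.52177, 0.46641,
                0.34383, 0.23077, 0.55715, 0.41226]  # BM, FCS, ..., SPC

for name, values in [("Prague Parks", prague_parks), ("Phototourism", phototourism)]:
    m, s = summarize(values)
    print(f"{name}: {m:.5f} ± {s:.5f} over {len(values)} scenes")
# Prague Parks: 0.70301 ± 0.09524 over 3 scenes
# Phototourism: 0.43410 ± 0.16560 over 9 scenes
```

`pstdev` is the population form. The sample form (`statistics.stdev`) would give about 0.176 for Phototourism instead of 0.16560. With a single run, `pstdev([x])` is 0.0, which matches the ±0.00000 run-to-run figure.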

Rank (per category): 41 (of 87)

| Scene | Features | Matches (raw) | Matches (final) | Repeatability @ 3 px | Matching score @ 3 px | mAA(5°) | mAA(10°) |
|---|---|---|---|---|---|---|---|
| Lizard | 2048.0 | 2048.0 | 120.2 | 0.075 (rank 1/87) | 0.012 (rank 18/87) | 0.43659 ± 0.00000 (rank 47/87) | 0.57622 ± 0.00000 (rank 46/87) |
| Pond | 2048.0 | 2048.0 | 82.9 | 0.116 (rank 2/87) | 0.095 (rank 2/87) | 0.58919 ± 0.00000 (rank 21/87) | 0.72703 ± 0.00000 (rank 12/87) |
| Tree | 2048.0 | 2048.0 | 84.3 | 0.085 (rank 2/87) | 0.058 (rank 2/87) | 0.69231 ± 0.00000 (rank 23/87) | 0.80577 ± 0.00000 (rank 26/87) |
| Avg | 2048.0 | 2048.0 | 95.8 | 0.092 (rank 2/87) | 0.055 (rank 2/87) | 0.57269 ± 0.00000 (rank 41/87) | 0.70301 ± 0.00000 (rank 41/87) |

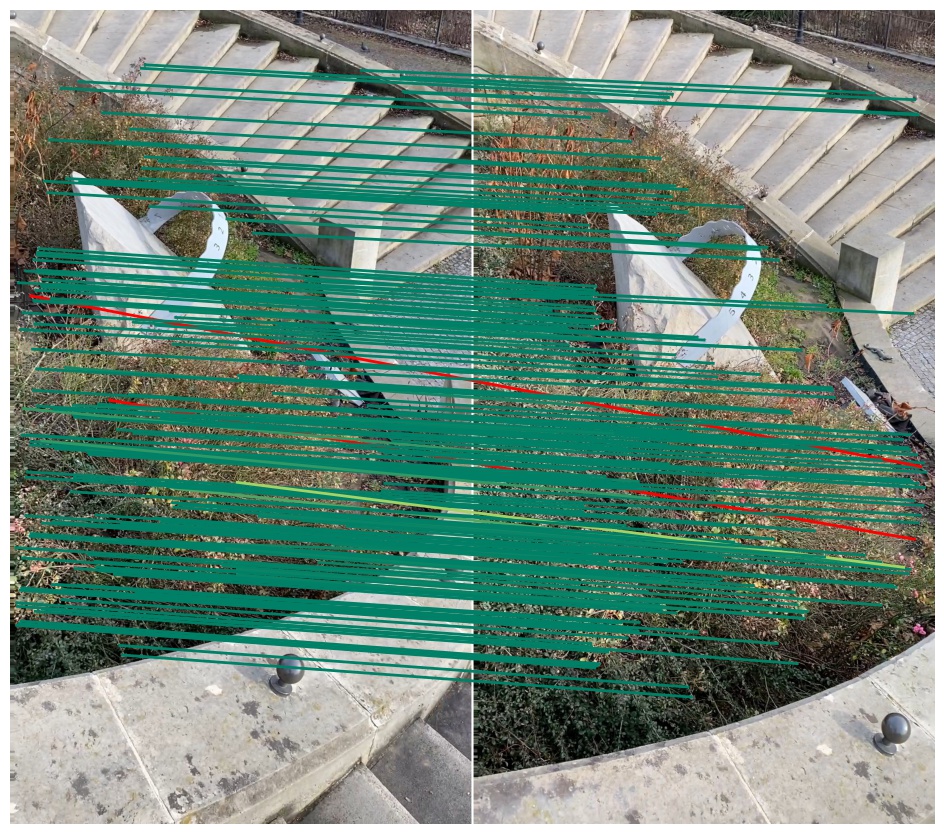

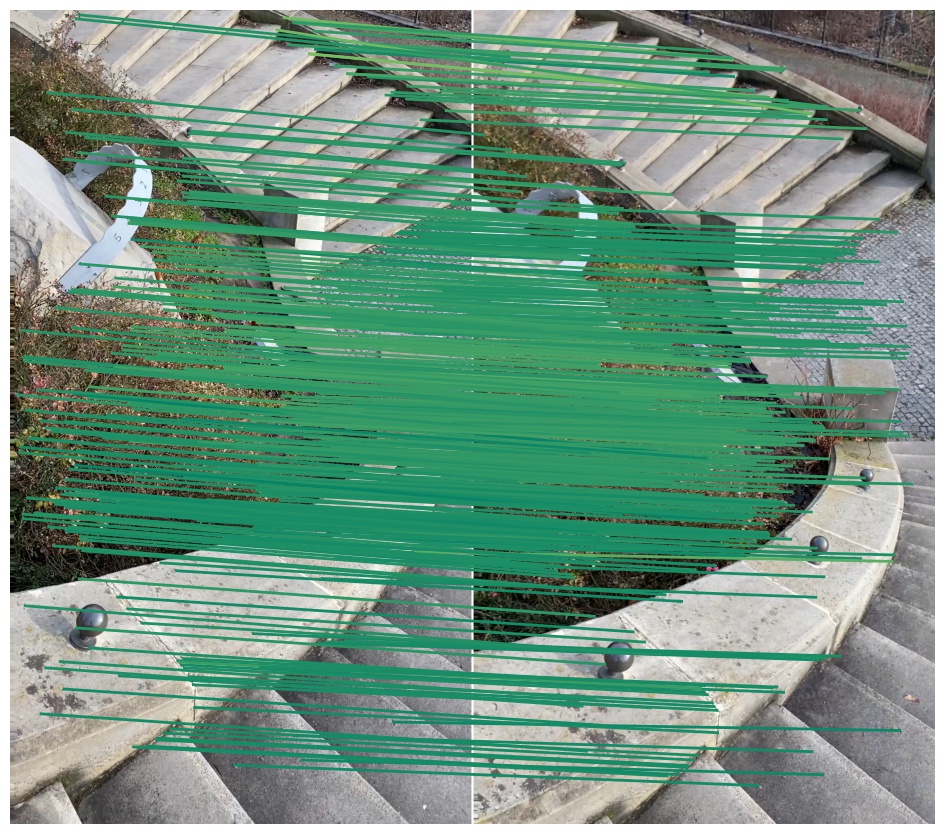

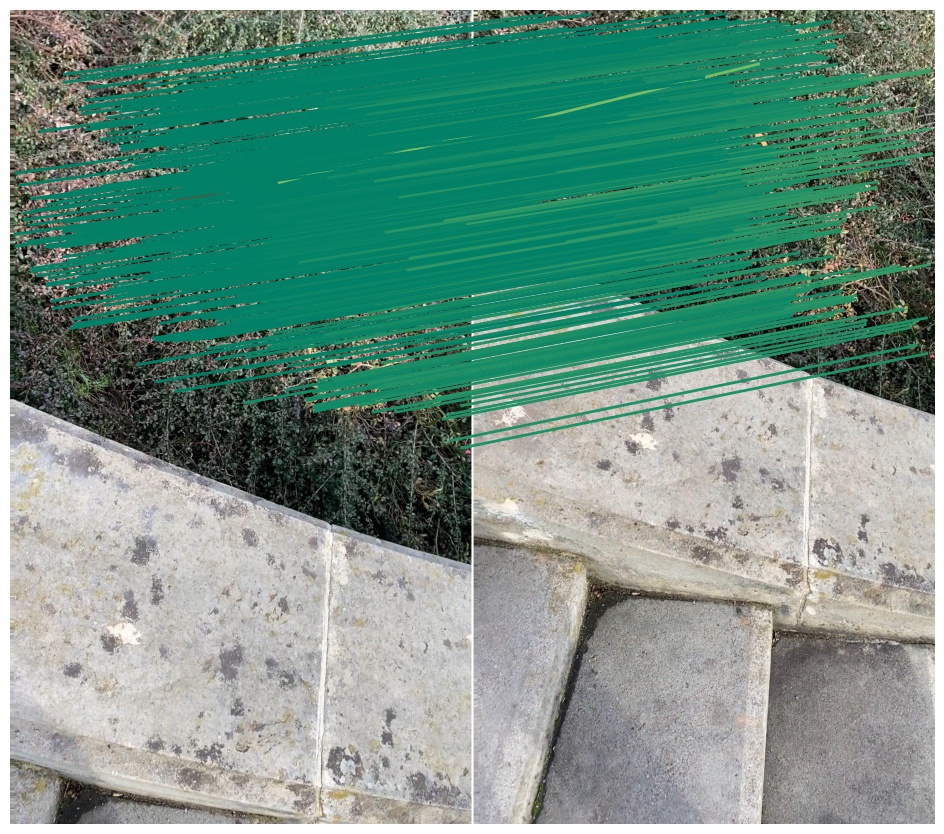


— Lizard —

— Pond —

— Tree —

Prague Parks dataset / Multiview track

mAA at 10 degrees: N/A (±N/A over N/A run(s) / ±N/A over 3 scenes)

Rank (per category): N/A (of 86)

No results for this dataset.

Google Urban dataset / Stereo track

mAA at 10 degrees: N/A (±N/A over N/A run(s) / ±N/A over 17 scenes)

Rank (per category): N/A (of 86)

No results for this dataset.

Google Urban dataset / Multiview track

mAA at 10 degrees: N/A (±N/A over N/A run(s) / ±N/A over 17 scenes)

Rank (per category): N/A (of 86)

No results for this dataset.