News and Events

- Paper published in UMUAI (User Modeling and User-Adapted Interaction) analysing eye-tracking patterns within narrative visualizations. Read the paper.

- Our paper on detecting user confusion with RNNs won the Best Paper Award at the IJCAI 2019 Workshop on Humanizing AI. Read the paper.

Human-AI Interaction Research at UBC

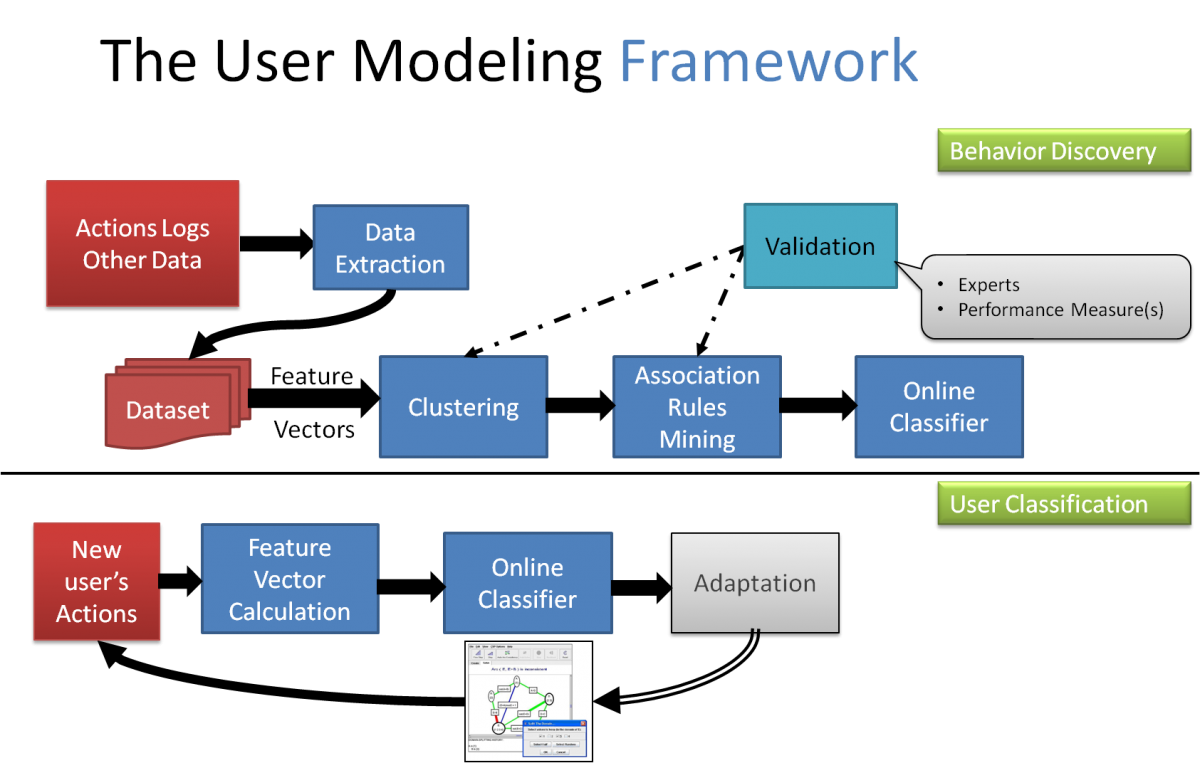

We aim to create AI-driven, human-centred interactive systems that both perform useful tasks and are well accepted by their users. A key aspect of this endeavour is enabling AI systems to predict and monitor relevant user properties (e.g., states, skills, preferences, needs) and to personalize the interaction accordingly, maximizing both task performance and user satisfaction while abiding by the principles of transparency, interpretability, predictability, and user control. Our long-term vision is to create ethical AI systems that humans can trust and collaborate with.

In particular, we focus on the following research areas (among others):

User-Adaptive Visualization

The goal of this project is to design information visualization systems that adapt to the specific needs of each individual viewer. Toward this goal, we are exploring data sources that could help detect these needs in real time, including measures of cognitive abilities that affect perception, interaction logs, and data from eye-tracking and physiological sensors. We are also investigating how to provide usable, non-intrusive adaptive interventions, such as suggesting an alternative visualization when the current one seems unsuitable for the viewer, or providing interactive help on the current visualization (e.g., drawing attention to specific areas of interest). See our demos here.

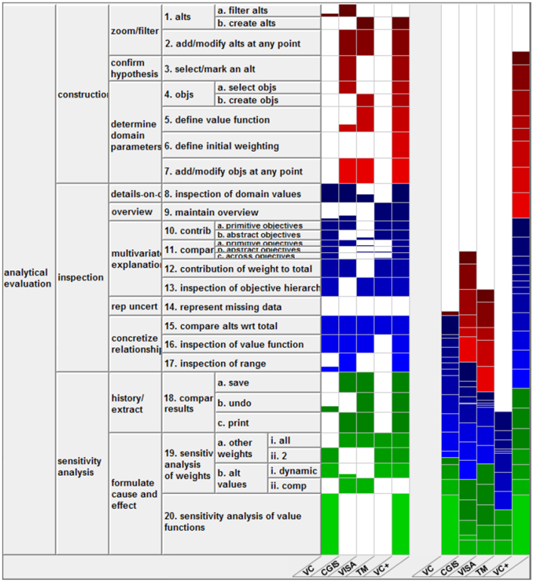

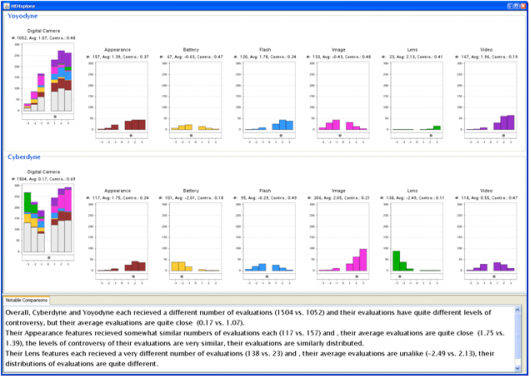

Interfaces for Decision Support (Preferential Choice)

Many critical decisions for individuals and organizations can be framed as preferential choices: the process of selecting the best option from a set of alternatives. We are investigating interactive visualization techniques to support preferential choice. The design of our interfaces is grounded in detailed task models, and when testing them we typically measure both task performance and insight. See our demo of ValueCharts, one of our interfaces for decision support.

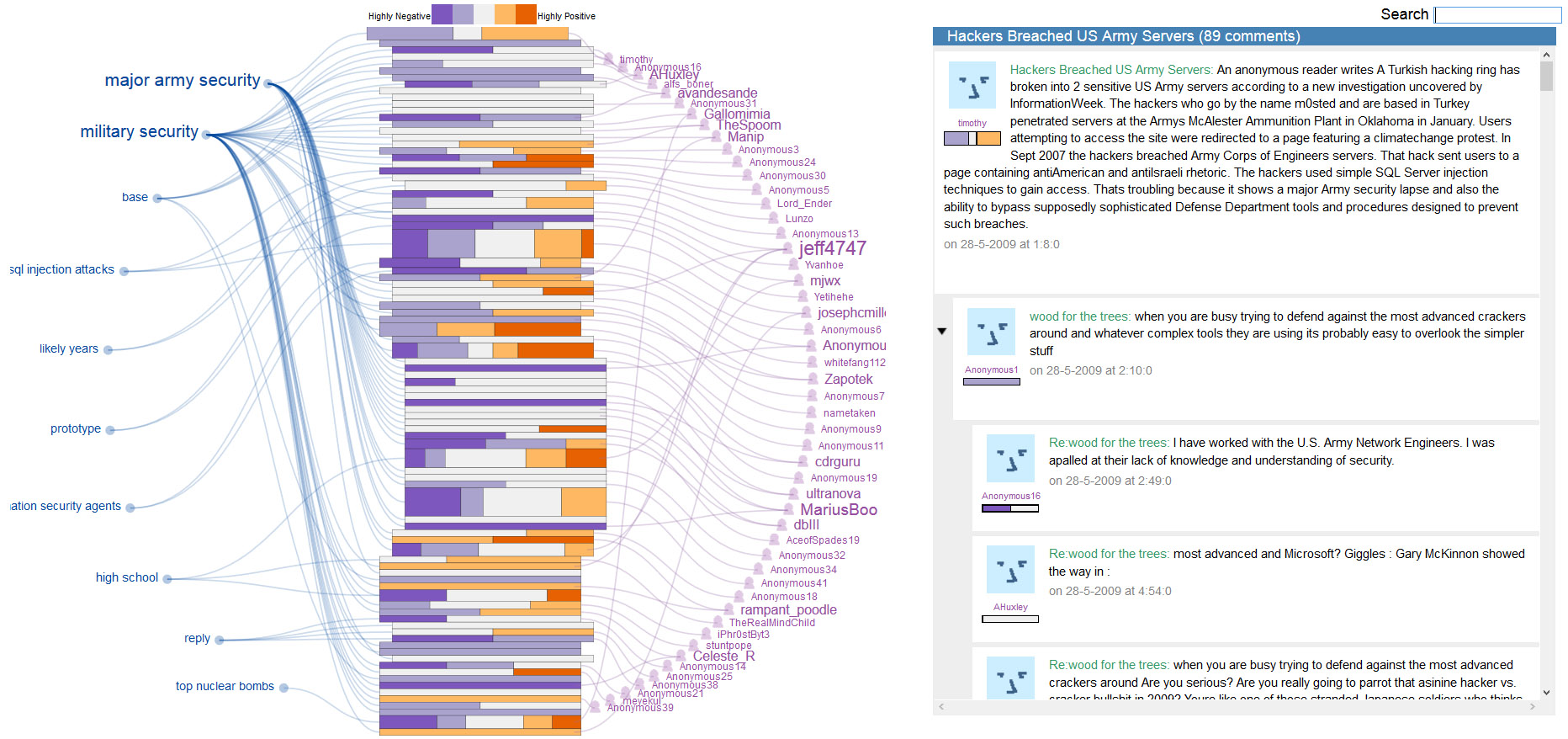

Multimedia Interactive Visualizations for Opinion Mining

In many decision-making scenarios, ranging from choosing which camera to buy to voting in an election, people can benefit from knowing other people's opinions. As more and more opinion-bearing documents are posted on the Web, extracting, summarizing, and visualizing the opinions they contain becomes a critical task for many organizations and individuals.

Adaptivity in Unstructured and Open-ended Interfaces

We proposed a framework that uses eye-tracking data to model users' learning in Interactive Simulations (ISs). Our goal is to build user models that can trigger adaptive support for students who do not learn well with ISs, a difficulty often caused by the unstructured and open-ended nature of these environments.