Terrain-Adaptive Locomotion Skills Using Deep Reinforcement Learning

Transactions on Graphics (Proc. ACM SIGGRAPH 2016) (to appear)

Xue Bin Peng, Glen Berseth, Michiel van de Panne

University of British Columbia

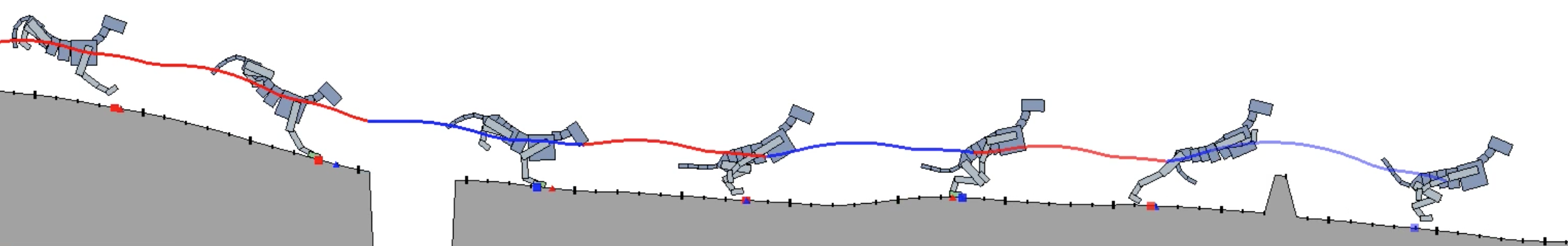

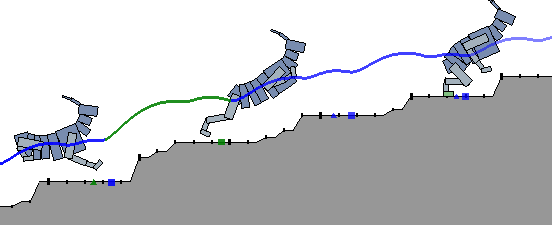

Reinforcement learning offers a promising methodology for developing skills for simulated characters, but typically requires working with sparse hand-crafted features. Building on recent progress in deep reinforcement learning (DeepRL), we introduce a mixture of actor-critic experts (MACE) approach that learns terrain-adaptive dynamic locomotion skills using high-dimensional state and terrain descriptions as input, and parameterized leaps or steps as output actions. MACE learns more quickly than a single actor-critic approach and results in actor-critic experts that exhibit specialization. Additional elements of our solution that contribute towards efficient learning include Boltzmann exploration and the use of initial actor biases to encourage specialization. Results are demonstrated for multiple planar characters and terrain classes.
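To make the Boltzmann exploration mentioned in the abstract concrete, here is a minimal sketch of how one expert could be sampled from a set of critic value estimates. It illustrates the general softmax-selection technique and is not the paper's implementation: the function names, the temperature value, and the example critic values are all assumptions made for illustration.

```python
import math
import random


def boltzmann_probabilities(values, temperature=1.0):
    """Turn value estimates into selection probabilities (softmax with temperature)."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    # Subtract the maximum before exponentiating for numerical stability.
    top = max(values)
    weights = [math.exp((v - top) / temperature) for v in values]
    total = sum(weights)
    return [w / total for w in weights]


def select_expert(values, temperature=1.0, rng=random):
    """Sample the index of one expert in proportion to its Boltzmann probability."""
    probs = boltzmann_probabilities(values, temperature)
    return rng.choices(range(len(probs)), weights=probs, k=1)[0]


# Hypothetical critic outputs for three experts in some state (assumed values).
critic_values = [1.0, 2.0, 0.5]
probs = boltzmann_probabilities(critic_values, temperature=0.5)
chosen = select_expert(critic_values, temperature=0.5, rng=random.Random(0))
```

A high temperature spreads the probability across experts and encourages exploration; a temperature near zero approaches greedy selection of the expert with the highest value estimate.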

@article{2016-TOG-deepRL,
  title = {Terrain-Adaptive Locomotion Skills Using Deep Reinforcement Learning},
  author = {Xue Bin Peng and Glen Berseth and Michiel van de Panne},
  journal = {ACM Transactions on Graphics (Proc. SIGGRAPH 2016)},
  volume = 35,
  number = 4,
  article = 81,
  year = {2016}
}